A couple of months ago, I was wondering what 5K is and why it was mentioned in the official GTX TITAN Z presentation at GTC 2014. In the presentation I watched, the GTX TITAN Z is described as "the first graphics card that is 5K-Ready". This led me to plan a test of several of the highest-end graphics cards at super-high resolutions above QHD. First, I designed the test scenarios as follows:

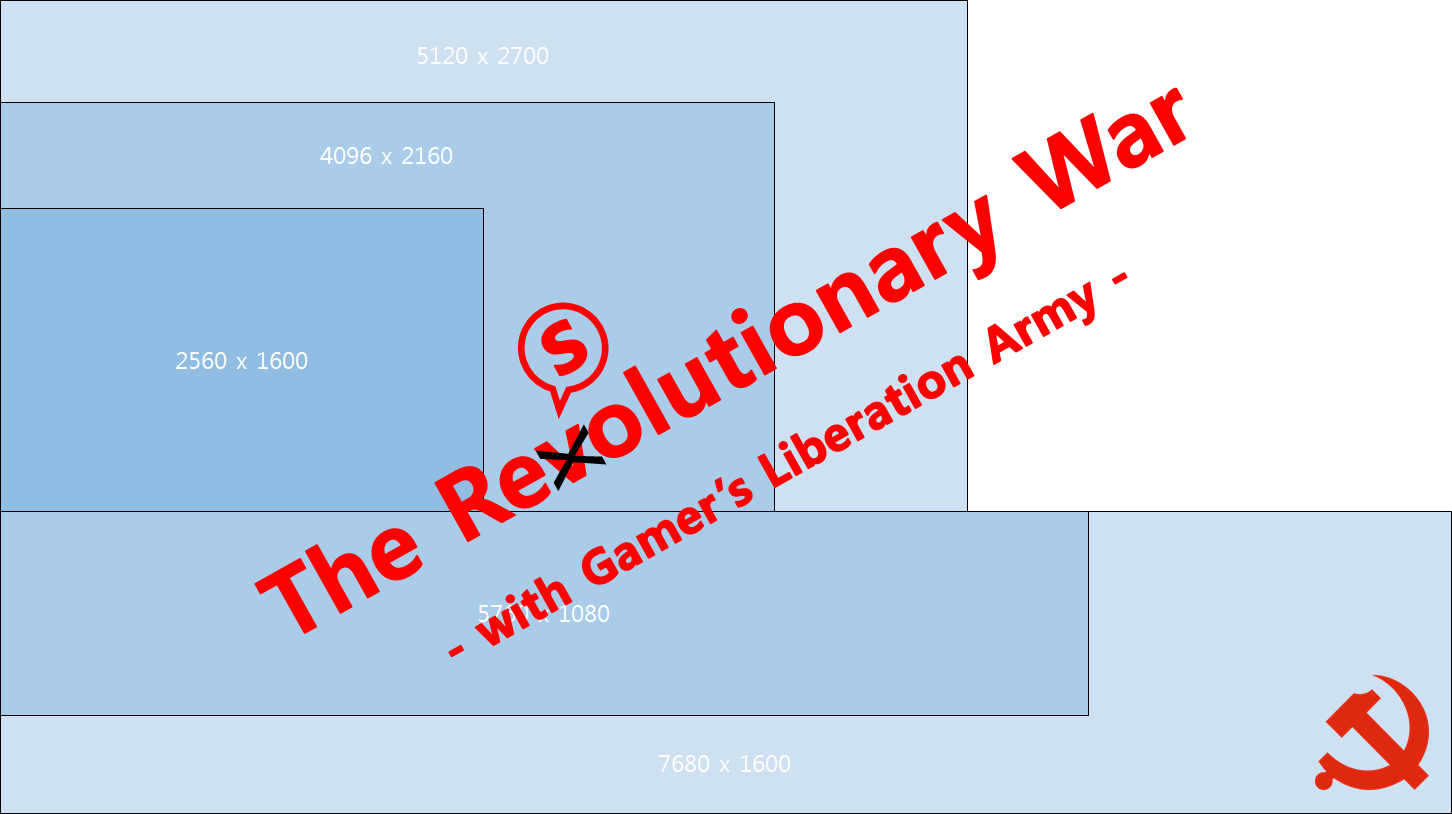

- 2560 x 1600 (4 million pixels)

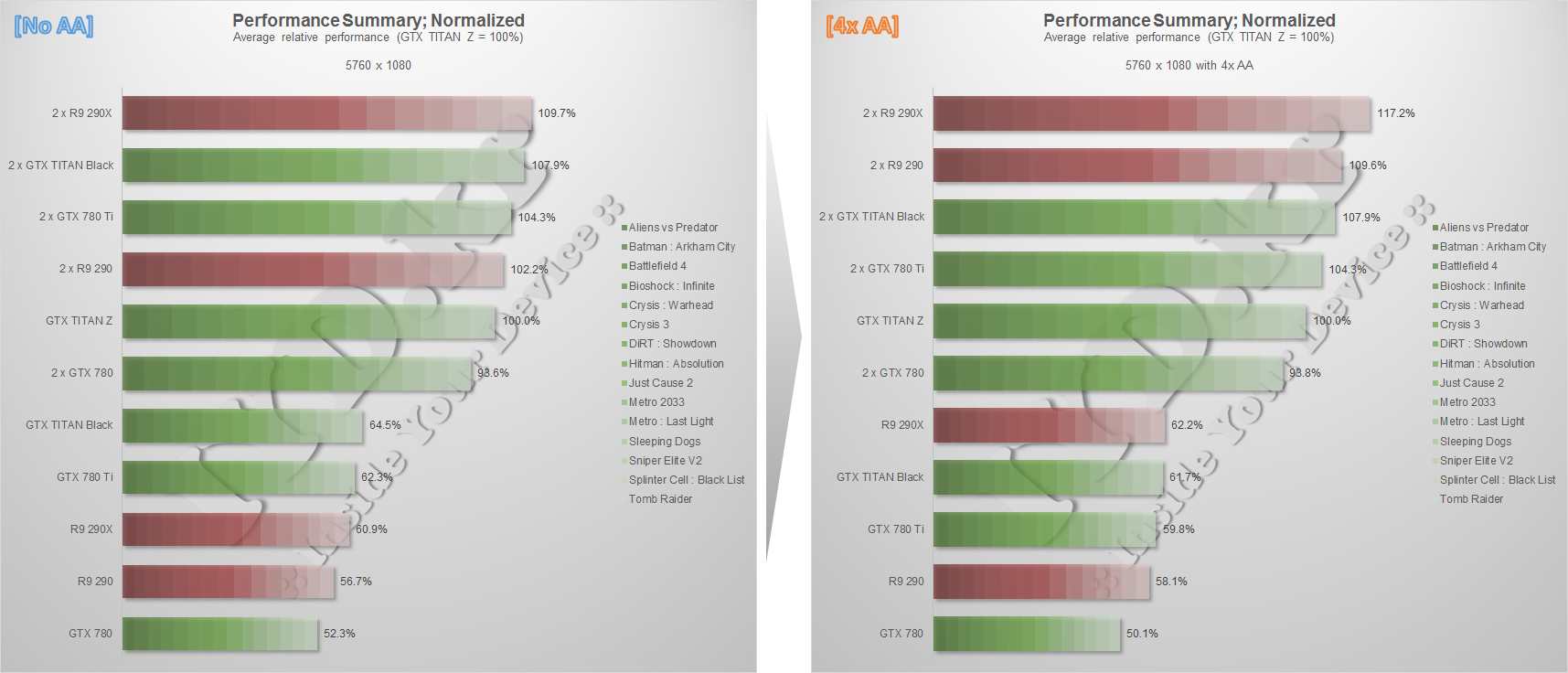

- 5760 x 1080 (3-way surround of 1920 x 1080, 6 million pixels)

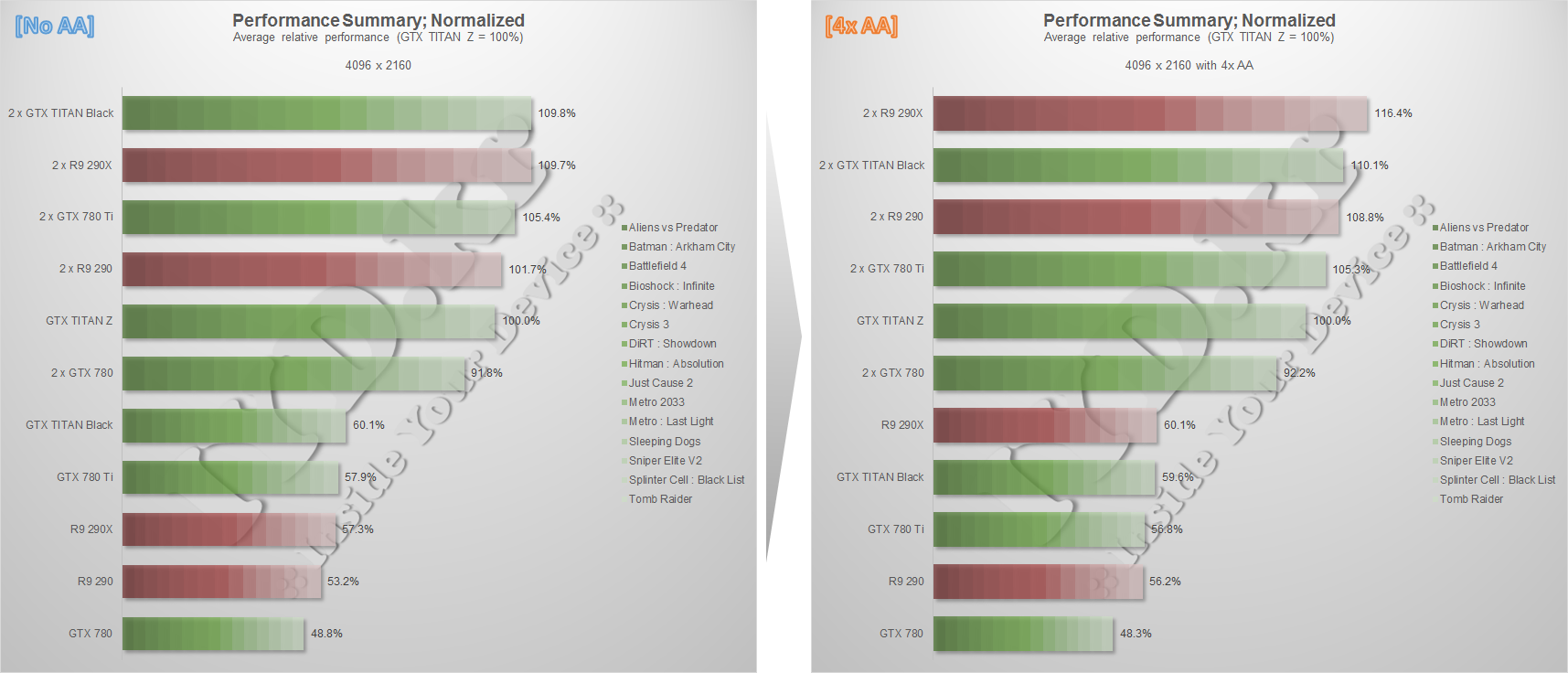

- 4096 x 2160 (4K, 9 million pixels)

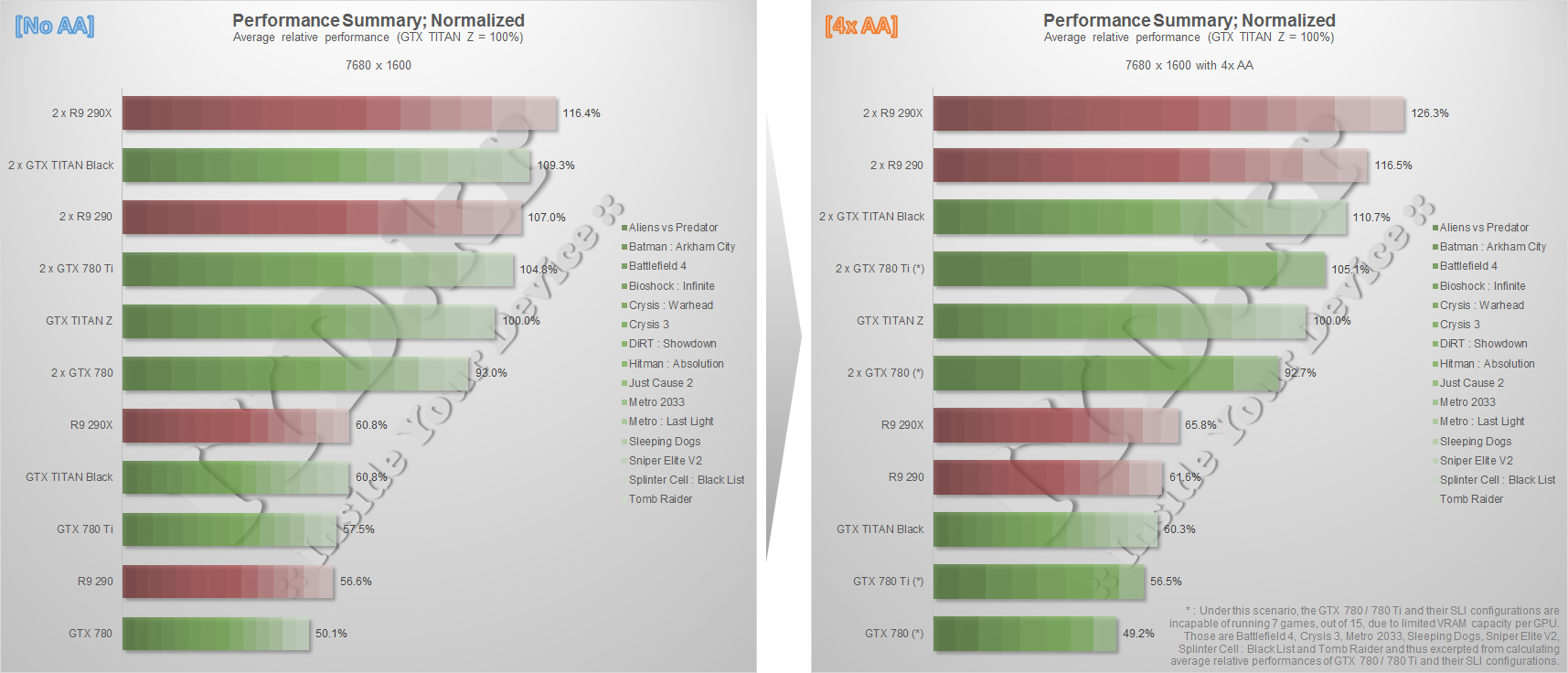

- 7680 x 1600 (3-way surround of 2560 x 1600, 12 million pixels)

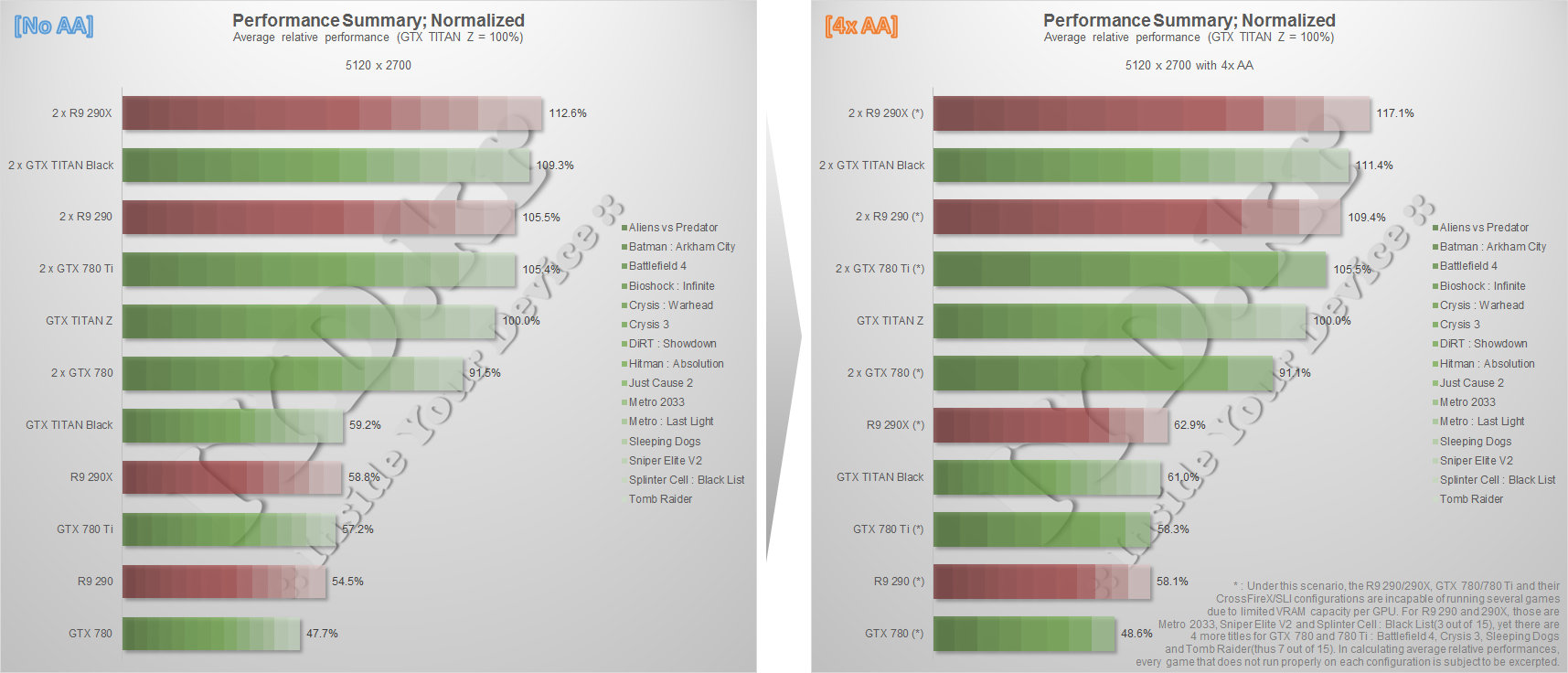

- 5120 x 2700 (5K, 14 million pixels)
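The pixel counts in this list are rounded. As a quick sanity check, the following minimal sketch recomputes them from the width and height of each scenario. The function and variable names are my own and are only for illustration.

```python
# Test resolutions taken from the scenario list (width, height in pixels).
RESOLUTIONS = [
    ("2560 x 1600", 2560, 1600),
    ("5760 x 1080", 5760, 1080),
    ("4096 x 2160", 4096, 2160),
    ("7680 x 1600", 7680, 1600),
    ("5120 x 2700", 5120, 2700),
]


def pixel_count(width, height):
    """Return the total number of pixels rendered per frame."""
    return width * height


def relative_load(width, height, base=(2560, 1600)):
    """Return how many times more pixels a resolution has than the baseline."""
    return pixel_count(width, height) / pixel_count(*base)


for label, w, h in RESOLUTIONS:
    millions = pixel_count(w, h) / 1_000_000
    print(f"{label}: {pixel_count(w, h):,} pixels "
          f"({millions:.1f} million, {relative_load(w, h):.2f}x of 2560 x 1600)")
```

By this count, the 5K scenario renders 13,824,000 pixels per frame, which is 3.375 times as many as 2560 x 1600.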

For the test, all games are set to their highest graphics quality options except for anti-aliasing. As for anti-aliasing, I separated all test scenarios (except for BioShock Infinite) into two groups: one without AA, the other with 4x AA. For BioShock, I treated its DX11+DDOF preset as "with 4x AA" and its DX11 preset as "without AA", since the official benchmark tool doesn't offer such options.

To run these super-high resolutions on ordinary displays, I used Eyefinity and NV Surround, as well as "User Defined Resolution" in the NV Control Panel (for NVIDIA, of course). Specifically, as follows:

- 2560 x 1600 : Nothing special

- 5760 x 1080 : AMD - Eyefinity / NVIDIA - NV Surround (both 3 x 1920x1080)

- 4096 x 2160 : AMD - Eyefinity (2 x 2048x2160) / NVIDIA - NVCP User Define (single display, upscaled)

- 7680 x 1600 : AMD - Eyefinity / NVIDIA - NV Surround (both 3 x 2560x1600)

- 5120 x 2700 : AMD - Eyefinity (4 x 2560x1350) / NVIDIA - NVCP User Define (single display, upscaled)

(For detailed info, see Ch. 2 and Ch. 3 of this article: http://iyd.kr/649)
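To double-check that each multi-display group really adds up to its target resolution, here is a small sketch. It assumes identical panels arranged in a simple grid with no bezel compensation. The 2 x 2 arrangement for the 5K case is my inference from "4 x 2560x1350", since it is the only grid that yields 5120 x 2700. The helper name `combined_resolution` is hypothetical.

```python
def combined_resolution(panel_w, panel_h, cols, rows):
    """Return the total desktop size of a cols x rows grid of identical panels.

    Assumes no bezel compensation and no overlap between panels.
    """
    return panel_w * cols, panel_h * rows


# target resolution -> (panel width, panel height, columns, rows)
LAYOUTS = {
    (5760, 1080): (1920, 1080, 3, 1),  # 3 x 1920x1080
    (4096, 2160): (2048, 2160, 2, 1),  # 2 x 2048x2160 (AMD only)
    (7680, 1600): (2560, 1600, 3, 1),  # 3 x 2560x1600
    (5120, 2700): (2560, 1350, 2, 2),  # 4 x 2560x1350 (AMD only, 2 x 2 assumed)
}

for target, (pw, ph, cols, rows) in LAYOUTS.items():
    total = combined_resolution(pw, ph, cols, rows)
    status = "OK" if total == target else "MISMATCH"
    print(f"{cols * rows} x {pw}x{ph} -> {total[0]} x {total[1]} [{status}]")
```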

For 4K and 5K, the AMD and NVIDIA setups are not perfectly controlled against each other (at the very least, the number of displays used is different!). Keeping that in mind, at the end of the article I'm going to analyze how this difference (AMD driving multiple displays while NVIDIA drives just a single display) affects overall performance.

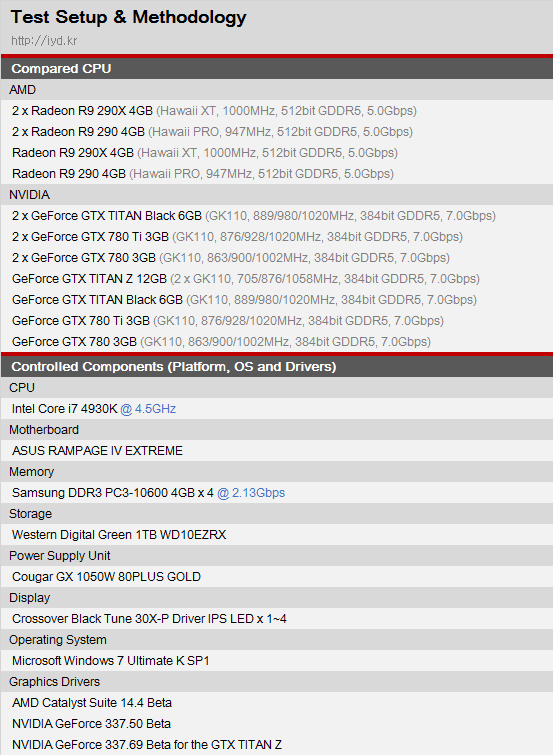

Well, here's the system setup.

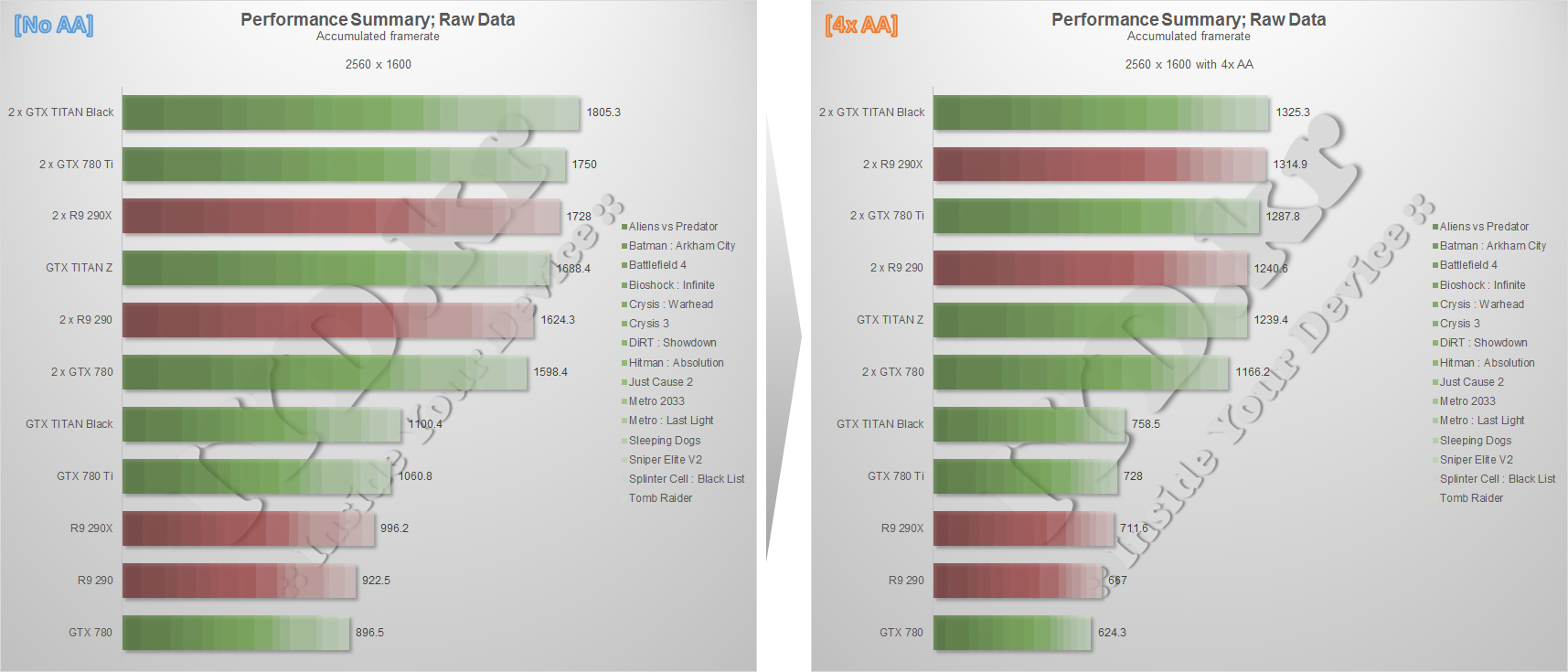

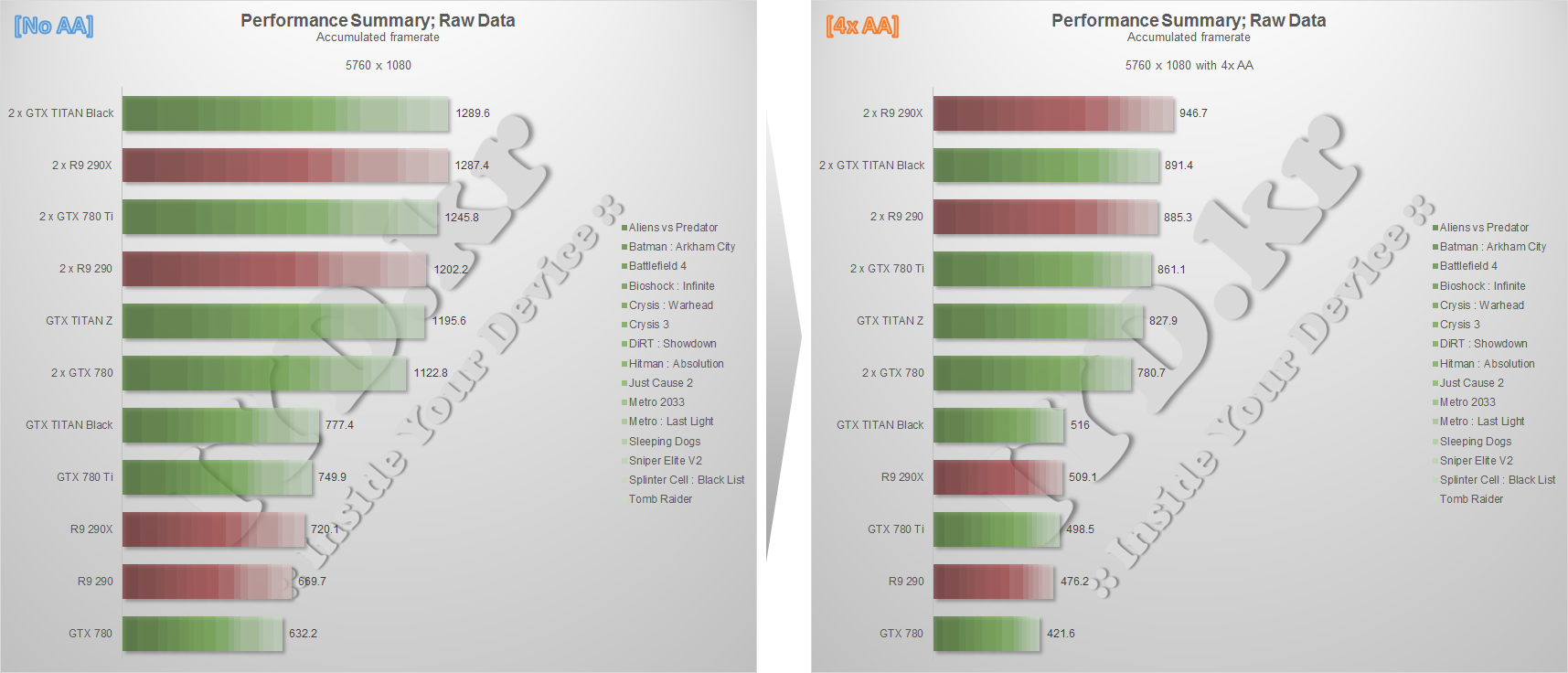

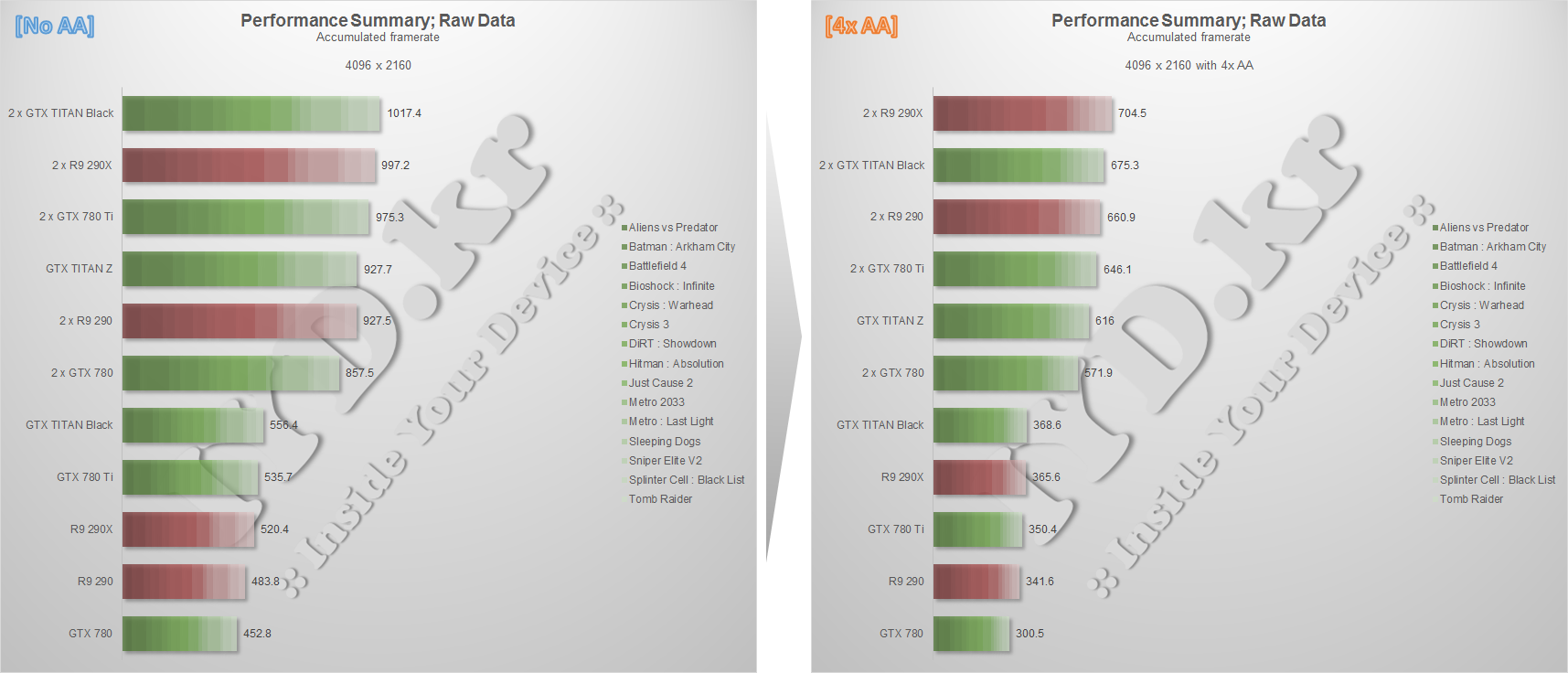

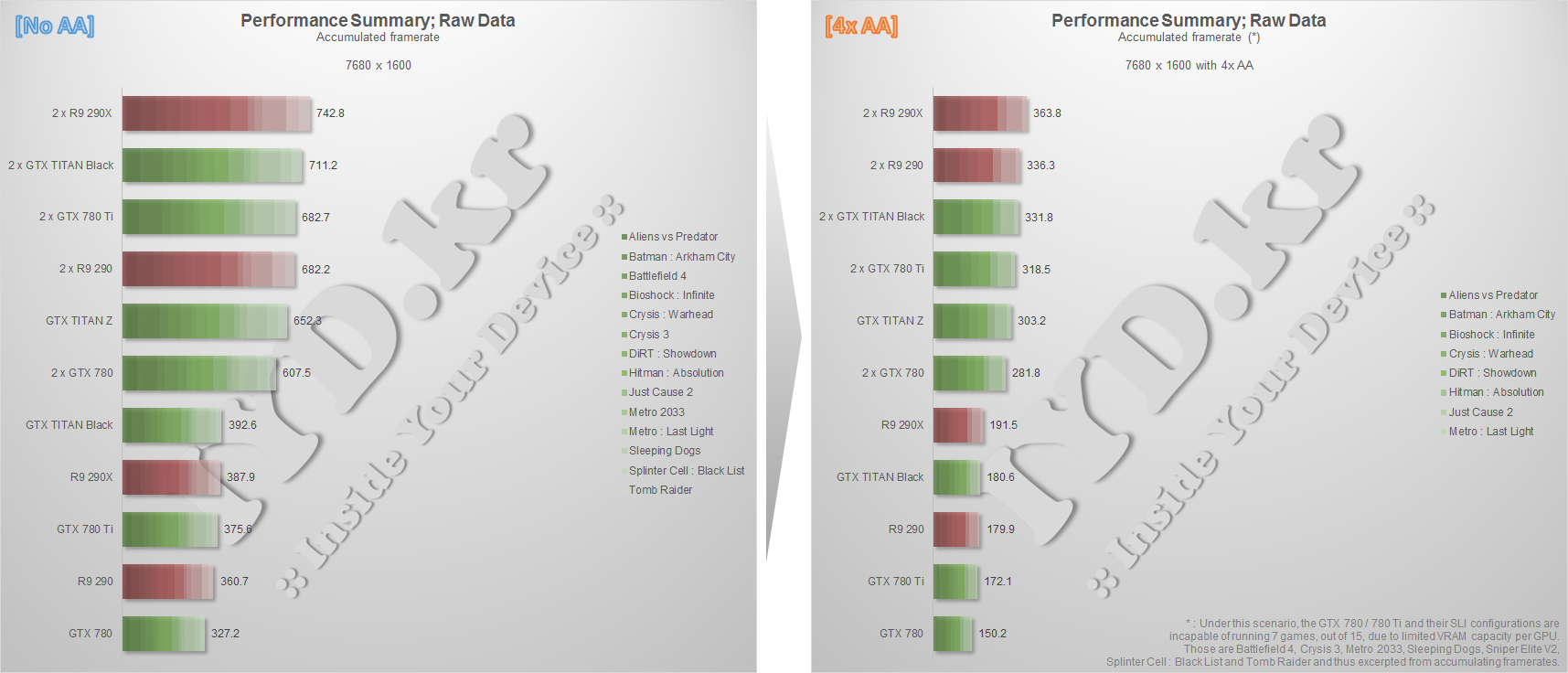

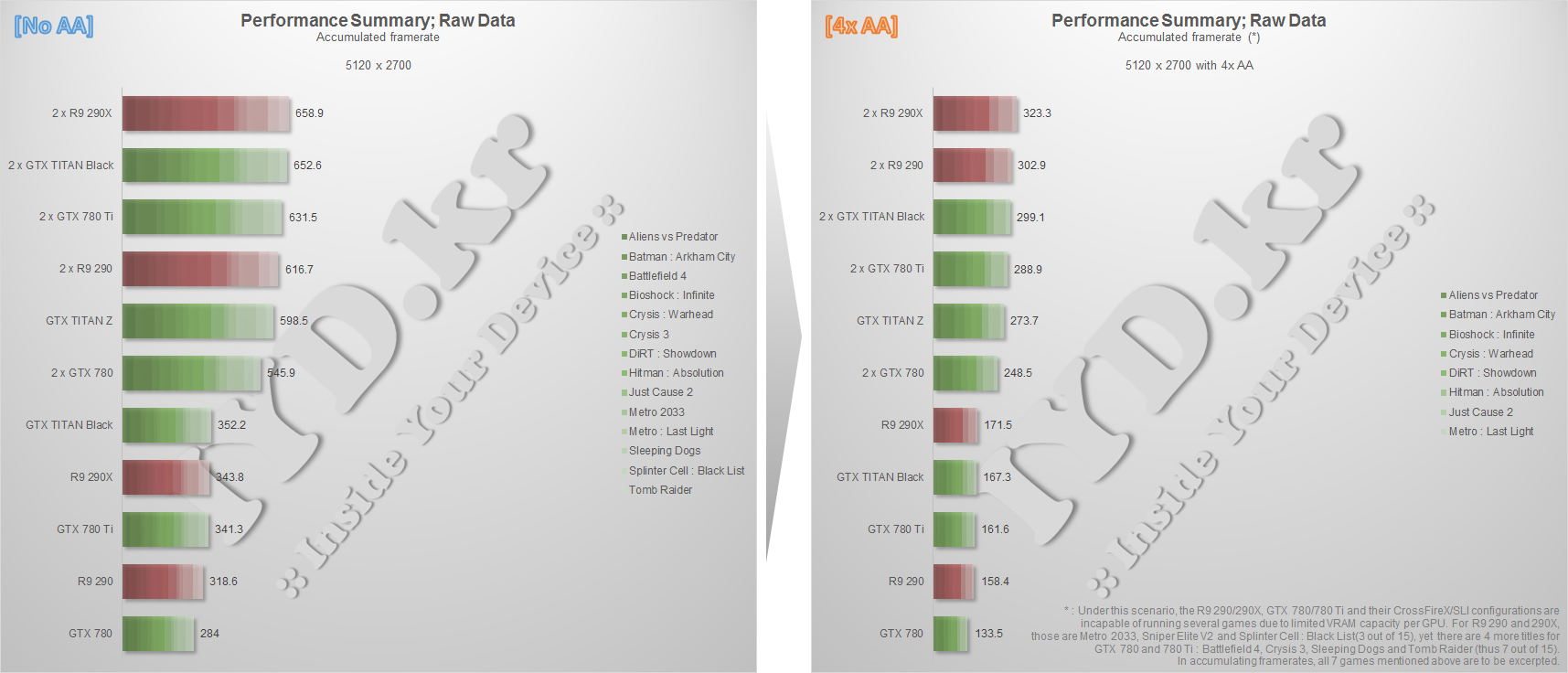

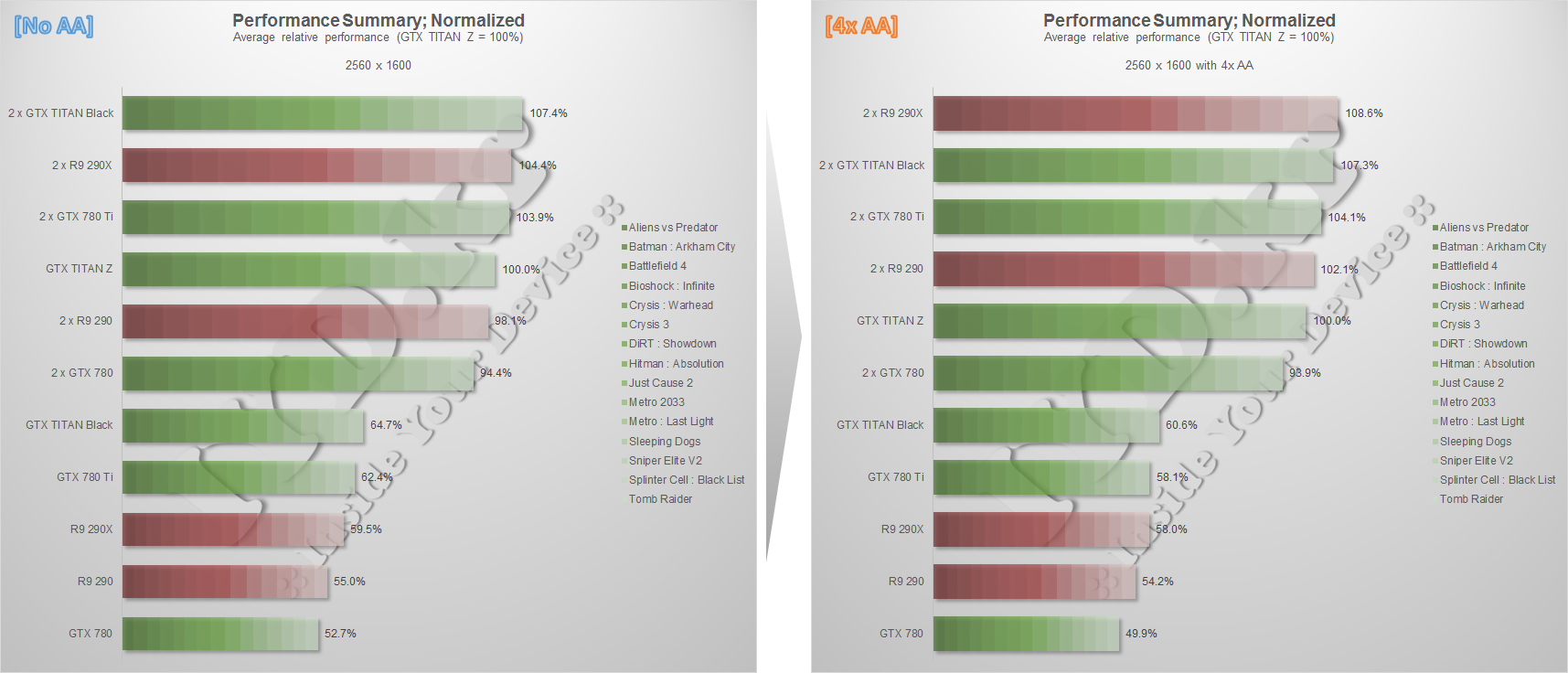

Here are the performance summaries. Note that the "Raw Data" graphs don't really analyze anything; they just show accumulated framerates, and I guess everybody knows that framerates summed across different games are virtually incomparable. I intentionally included these graphs for two reasons. One is to emphasize that, for a single data set, there is no "only way" to analyze it. The other is to show, by comparing the "Raw Data" graphs with the "Normalized" graphs, how an "AMD/NVIDIA-friendly" game affects and actually distorts the results. (For example, Batman, a true friend of NVIDIA graphics cards, gives a massive plus to NVIDIA cards, so NVIDIA ranks relatively higher than AMD in the Raw Data graphs. The Normalized graphs show the contrary: AMD earns small surpluses in many other games, and these "small but many" surpluses eventually exceed the amount AMD loses in Batman.)
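The effect described above can be reproduced with a toy example. The framerates below are made up purely for illustration and are not measurements from this test. The normalization used here (scaling each game to its fastest card, then averaging) is only one plausible scheme and is an assumption, not necessarily the method behind the "Normalized" graphs.

```python
# Made-up framerates (fps) for illustration only; NOT results from this article.
FPS = {
    "Batman": {"NVIDIA card": 90.0, "AMD card": 60.0},
    "Game B": {"NVIDIA card": 28.0, "AMD card": 34.0},
    "Game C": {"NVIDIA card": 28.0, "AMD card": 34.0},
    "Game D": {"NVIDIA card": 28.0, "AMD card": 34.0},
}


def raw_totals(fps):
    """Sum framerates across all games for each card ("Raw Data" style)."""
    totals = {}
    for per_card in fps.values():
        for card, value in per_card.items():
            totals[card] = totals.get(card, 0.0) + value
    return totals


def normalized_scores(fps):
    """Average per-game scores, each scaled to the fastest card in that game.

    Assumed normalization scheme: every game contributes at most 1.0.
    """
    sums = {}
    for per_card in fps.values():
        best = max(per_card.values())
        for card, value in per_card.items():
            sums[card] = sums.get(card, 0.0) + value / best
    return {card: total / len(fps) for card, total in sums.items()}


def winner(scores):
    """Return the card with the highest score."""
    return max(scores, key=scores.get)


print("Raw totals:", raw_totals(FPS), "->", winner(raw_totals(FPS)))
print("Normalized:", normalized_scores(FPS), "->", winner(normalized_scores(FPS)))
```

With these made-up numbers, the raw totals are 174 versus 162 in favor of the NVIDIA card, because the single high-framerate game dominates the sum. The normalized averages are roughly 0.87 versus 0.92 in favor of the AMD card, since each game now carries equal weight.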

(Full test results are here: http://iyd.kr/649)

In summary, I think we can all agree on these three conclusions:

- Gaming at 5K isn't really practical yet. After all, framerates are terrible even on the highest-end graphics solutions.

- (Despite the above,) a pair of 290X cards offers the best relative performance at super-high resolutions.

- (Despite the above two,) the GTX TITAN Z is the only single graphics card that is "capable" of 5K gaming.

Well, the test is over. Thanks for reading!

(Because I'm Korean and not that fluent in English, it takes me a couple of days to translate my articles into English. I hope I can do this faster in the future, so that I can provide a better understanding.)