(I know I'm linking a lot of stuff from Wikipedia, but it comes in handy as a starting point for people who want to know more about the things discussed here.)

Science agrees that for silicon higher temperature = shorter lifespan, but I haven't seen any significant cases of GPUs randomly dying after a certain time due to high heat. You'd see a gaussian distribution of such cases, which would quickly draw everyone's attention.

The distribution I think you are referring to is this one. When microchips are made, one of the last steps, before testing them at wafer level I think (sorry, I cannot remember the exact step right now), is to put them in a furnace at a high temperature (I think it was around 150ºC; high for humans, but low compared with the processes used to make a microchip, such as the annealing of impurities) in order to age the chips. During this step, most of the chips that are prone to early failure do fail. The exponential fall you see at the beginning of the curve is thus shifted to the left: the chips sold to us are already a little bit 'old', but the manufacturer can assure you that they will have a low failure rate (maybe 5% or 2% of a batch? Sorry, I cannot provide more data).
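As a rough illustration of why a fairly short bake can 'age' a chip, here is a minimal sketch based on the Arrhenius model, which is commonly used for temperature-accelerated aging. Only the ~150ºC figure comes from what I remember above; the 0.7 eV activation energy, the 70ºC use temperature and the 24 h bake are assumptions of mine for illustration, not data from any manufacturer.

```python
import math

BOLTZMANN_EV_PER_K = 8.617333e-5  # Boltzmann constant in eV/K


def arrhenius_acceleration(t_use_c, t_stress_c, activation_energy_ev=0.7):
    """Ratio of aging rates at the stress temperature versus the use temperature.

    activation_energy_ev is an assumed, illustrative value; real failure
    mechanisms each have their own activation energy.
    """
    t_use_k = t_use_c + 273.15
    t_stress_k = t_stress_c + 273.15
    exponent = (activation_energy_ev / BOLTZMANN_EV_PER_K) * (
        1.0 / t_use_k - 1.0 / t_stress_k
    )
    return math.exp(exponent)


def equivalent_use_hours(bake_hours, t_use_c, t_stress_c, activation_energy_ev=0.7):
    """Hours at the use temperature that age the chip as much as the bake does."""
    return bake_hours * arrhenius_acceleration(t_use_c, t_stress_c, activation_energy_ev)


if __name__ == "__main__":
    # Assumed scenario: 24 h in the furnace at 150 C, chip later used at 70 C.
    factor = arrhenius_acceleration(t_use_c=70.0, t_stress_c=150.0)
    hours = equivalent_use_hours(24.0, t_use_c=70.0, t_stress_c=150.0)
    print(f"acceleration factor: {factor:.1f}")
    print(f"24 h of bake ~ {hours:.0f} h of normal use")
```

With these assumed numbers the factor comes out at several tens, so a day in the furnace would stand in for weeks or months of normal use. The same function also shows the other side of the argument: a card running hotter ages faster than a cooler one, under the same model and assumptions.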

Usually the product becomes obsolete first (which happens in just a few years).

This is called programmed obsolescence, which began in electronics maybe around 1990-1995? (More info in this video with Annie Leonard; the others I know are in Spanish, sorry for not linking them.) This is related to economies of scale, value engineering, etc. Hence, I can more or less understand that this is engineered into sub-100-150$ GPUs (~less than 100-120€), but if you pay, let's say, two or three steps of 20-30% above the best performance/price product, then you are paying a premium to get the high tech (you are on the right-hand side of an exponential curve with price on the horizontal axis and performance on the vertical axis).

So, for the niche that spends +350-400$ (+350-400€), you could expect two kinds of buyers: those who are rich and prone to upgrade to each new high-tier product (maybe 20% of the total), and the rest (80%), who buy relying on "price / durability". This last group is willing to spend a 'little fortune' on a premium product, hoping that it will last longer (both performance-wise, in FPS, and in reliability), and will hold on to it for 4-5 years (against a standard guarantee of 2 years for any electronics, in Europe at least).

As for my personal experience: I had a faulty Asus 4850 (due to the chip and a compact block of pre-applied thermal paste), then a replacement with some whining from the coils of the VRMs, then another replacement, this time an Asus 5770 (with some whining too), and after that one failed I'm settling on an XFX 5770 with an aftermarket cooler (and yes, I know what I am doing and I am careful doing it). The 4850 was ~200€ back then (1GB version), so it was a top-notch product, and I chose it after thinking over the reviews and the "price / durability / performance" curve, but I definitely had bad luck. Now, with more info and knowledge, I am ready to pull the trigger on a 350-400€ GPU, but also based on its temperatures, to at least avoid a product with a 2-year guarantee that lasts less than 1 year of 24/7 use. This is why I love the reviews that look "under the hood" so much: GPU, VRMs (quality and number), etc.

Also thermal expansion of solder joints leads to damage on the PCB itself first. Have you seen any scientific research on the topic of silicon lifespan vs temperature (on real shipping products)?

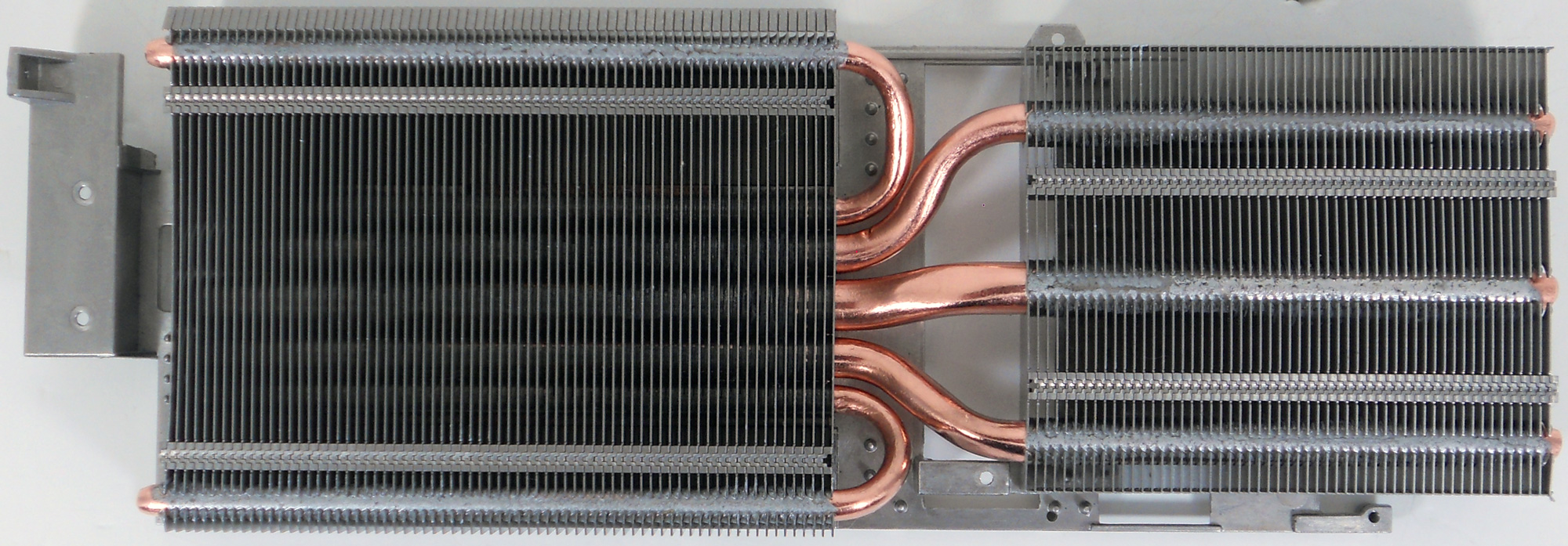

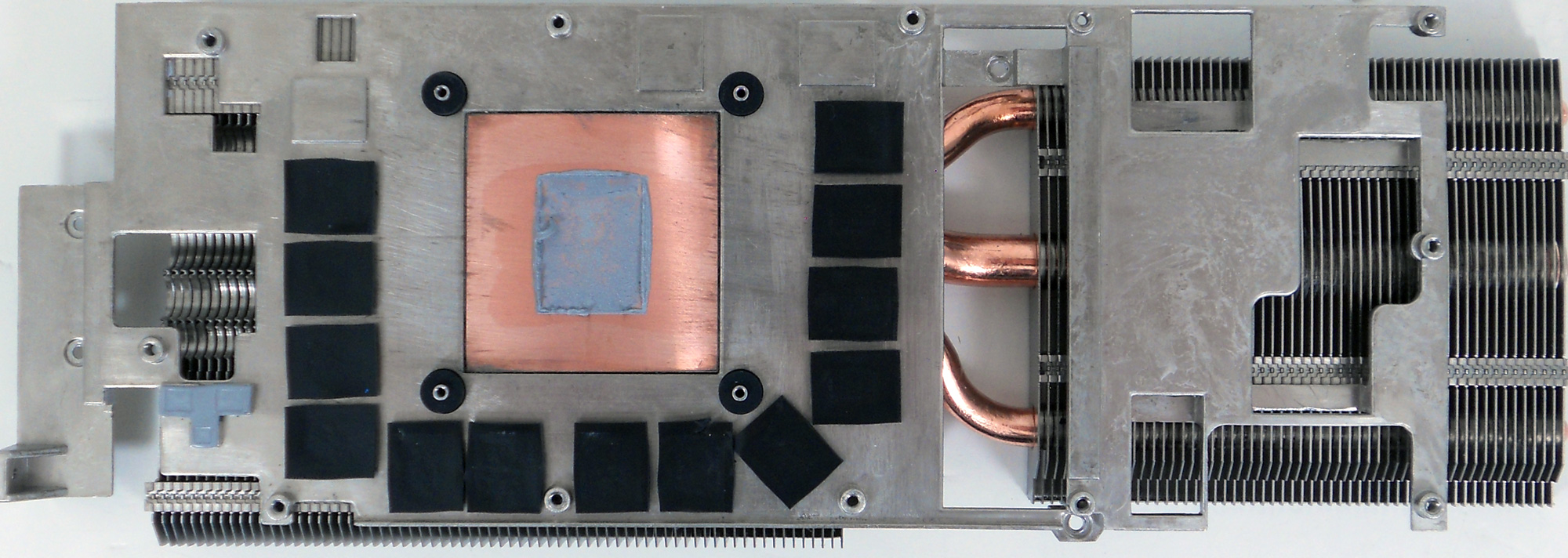

This is why there are techniques that apply heat to the chip in an attempt to reflow the solder balls (whose contact was broken by thermal expansion and contraction) and restore the contact, or that just apply cold with an air can (P·V = n·R·T: if you let too much gas out of the can, the temperature of what remains drops drastically)... or that apply heat to desolder the chip, clean the old solder off the PCB and the chip, and then place a new array of solder balls to resolder it. This is why I would recommend that the GPU team include in the reviews some data about other temperatures across the PCB, its back side, the VRMs, etc. (yes, I know it will cost money to find and buy a reasonable IR thermo-probe, but I think it will definitely pay off), and why I am so careful with reviews and with the temperatures of the whole GPU (not only the chip itself).
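To put a number on the expansion-contraction problem, here is a minimal first-order sketch: the package and the PCB expand at different rates, so a solder ball far from the centre of the package gets sheared a little on every heat-up and cool-down. The expansion coefficients, the distance and the temperature swing below are placeholder values I assume for illustration (real ones depend on the package and the board), and the function name is hypothetical.

```python
def cte_mismatch_displacement_um(distance_from_center_mm, delta_t_k,
                                 cte_package_ppm=7.0, cte_pcb_ppm=16.0):
    """Relative in-plane shift (micrometres) between a package pad and its PCB pad.

    First-order estimate: shift = |CTE difference| * temperature swing * distance
    from the package centre. The default coefficients (in ppm/K) are assumed
    placeholders, not measured values for any specific GPU.
    """
    delta_cte_per_k = abs(cte_pcb_ppm - cte_package_ppm) * 1e-6
    displacement_mm = delta_cte_per_k * delta_t_k * distance_from_center_mm
    return displacement_mm * 1000.0  # mm -> micrometres


if __name__ == "__main__":
    # Assumed case: corner ball 20 mm from the centre, 60 K swing between idle and load.
    shift = cte_mismatch_displacement_um(distance_from_center_mm=20.0, delta_t_k=60.0)
    print(f"relative shift per thermal cycle: {shift:.1f} um")
```

The point is only the trend: the shift grows linearly with the temperature swing and with the size of the package, which is one reason to care about the temperature of the whole board and not just the reading from the chip.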

On the second point, I'm doing my dissertation with the Electronics Department of the Technical University of Madrid. They have several papers and publications with broad knowledge about aging (in fact, a colleague is building a sensor to detect aging on-chip and provide countermeasures), plus vast knowledge in other areas (e.g., quantification, which I am working on with CUDA). Right now I cannot provide those papers, as it is not my main area of knowledge, but if you have an IEEE account or maybe a ResearchGate account, you could easily access them. Anyhow, I will ask about them. Do you want to learn about lifespan vs temperature in general, or something more focused on aging and use of the device?

I think you mean power gating, which has been used extensively over the last few years. Modern GPUs reduce clock and voltage whenever they can, but as far as I know they do not randomly shut off shaders when full performance is needed. When idling, they shut off almost everything, even their 2D rendering acceleration units, by detecting whether the displayed image is static or not.

Yes and no

Yes, because it is true that power gating is widely used in microchips (not only GPUs; think about the power states of a CPU, be it RISC, CISC or whatever). But no, because I am not talking about waking and sleeping blocks at random (that is done following a plan and power states, in order to reduce the power drawn by the chip, and it might not even be efficient if not designed carefully), but about the fact that you cannot power all the transistors / blocks / whatever at the same time, because of the power phases and the power density.

The more you shrink a process, the more stuff you can put into that 'box'. At the same time, the faster you want a transistor to work, the more voltage you have to apply to it (as long as this voltage stays within some limits), and the more you shrink, the more challenges appear in manufacturing the chip and making it work properly. Think about how to power, e.g., 1000 transistors with a 50nm gate length (using basic maths, at least 1000 x (50 nm)^2 = 2.5x10^6 nm^2 of area, and in practice far more, because they form blocks or logic cells in the chip). Then scale this up with parallelization techniques, pipelines and stages of data processing, and you have billions of transistors. Have you ever wondered how to power up this whole thing?

These arguments were given by one professor who explained the basics of the power drawn by a chip, and by another whose subject was about growing a transistor on a Si wafer. Sorry I cannot provide more evidence on this or the name in English; I'll try to ask about it too.
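The voltage and speed argument can be sketched with the textbook CMOS dynamic power relation, P = activity x C x V^2 x f, together with the back-of-the-envelope area estimate for 1000 transistors of 50nm (counting only one gate-length square per transistor). This is a minimal sketch: the capacitance, voltage and frequency values are made up for illustration, the function names are hypothetical, and leakage power is ignored completely.

```python
def dynamic_power_w(switched_capacitance_f, voltage_v, frequency_hz, activity=1.0):
    """Textbook CMOS dynamic power: activity * C * V^2 * f (leakage not included)."""
    return activity * switched_capacitance_f * voltage_v ** 2 * frequency_hz


def min_area_nm2(n_transistors, gate_length_nm):
    """Lower bound on area: one gate-length-sized square per transistor."""
    return n_transistors * gate_length_nm ** 2


if __name__ == "__main__":
    # Illustrative numbers only: 1 nF of switched capacitance at 1 GHz.
    base = dynamic_power_w(1e-9, voltage_v=1.0, frequency_hz=1e9)
    boosted = dynamic_power_w(1e-9, voltage_v=1.2, frequency_hz=1e9)
    print(f"power ratio for +20% voltage: {boosted / base:.2f}")

    area = min_area_nm2(1000, 50)
    print(f"minimum area for 1000 transistors of 50 nm: {area} nm^2")
```

Because the voltage enters squared, a 20% bump costs about 44% more dynamic power at the same clock, and that power has to be delivered to and removed from a very small area. That is the power density limit I mean when I say you cannot switch everything on at once.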

Because of all these points (yeah, the word you are thinking of is stuff), I wonder how AMD and especially Asus manage to achieve the same performance (FPS) at different temperatures with more or less the same power draw (~5W difference). To be honest, I was expecting more like a ~20W difference, plus GPU throttling on the AMD design.

PS: thanks for reading, W1zzard! (and anyone else interested in the topic). Every day we learn something new.


-supposing the taxes for importing won't be high). Windforce is at 509€ (688$) and Sapphire Tri-X 519€ (701$). I don't know what the prices will be in other countries and in other currencies.
