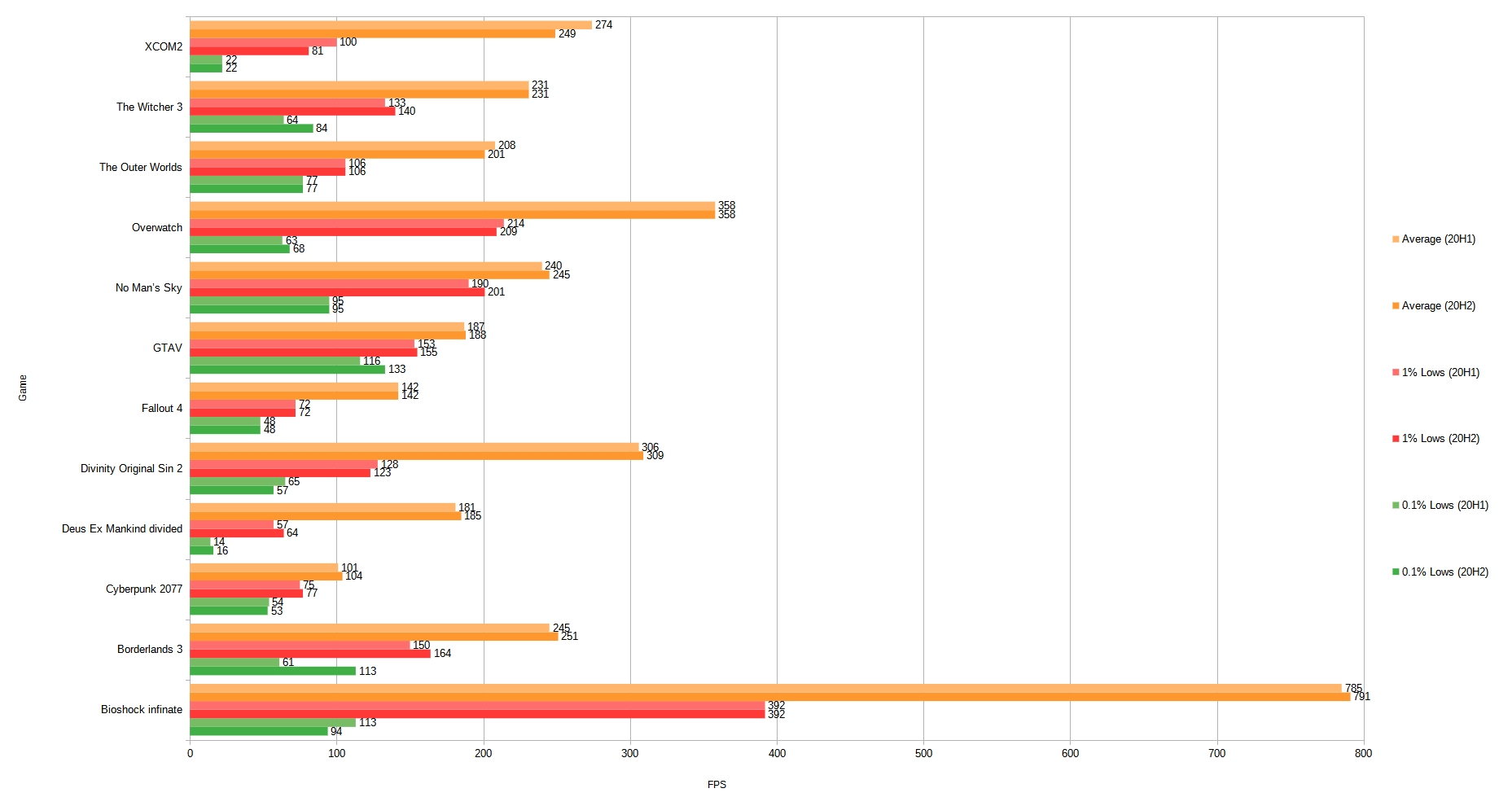

This took a while to complete, so hopefully someone finds it useful. The intent of this benchmark is to test for differences, if any, between Windows 10 versions 20H1 and 20H2.

Testing Methodology:

- Data was gathered using MSI Afterburner.

- Each game was benchmarked 3 times on Windows version 20H1 and 3 times on 20H2.

- Every run was performed identically so that results are comparable.

- Locations were selected based on how demanding they are.

- Each run consists of at least 90 seconds of data.

- The game was restarted between runs.

- No game updates or other software changes were made at any point between benchmarks.

Nvidia driver version 461.72 was used, with all unnecessary background processes disabled, Windows Game Mode disabled, and Windows hardware-accelerated GPU scheduling disabled.
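For anyone who wants to reproduce the numbers from their own logs, here is a minimal sketch of how average FPS, 1% lows and 0.1% lows can be derived from a list of per-frame times. It assumes the frame times are already available in milliseconds, and it uses one common definition of the lows (the average of the slowest 1% or 0.1% of frames). The function name `fps_summary` is made up for illustration, and MSI Afterburner's own calculation may differ.

```python
from statistics import mean


def fps_summary(frame_times_ms):
    """Summarise one benchmark run given per-frame times in milliseconds."""
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    # Average FPS is frames rendered divided by total elapsed seconds.
    total_seconds = sum(frame_times_ms) / 1000.0
    avg_fps = len(frame_times_ms) / total_seconds

    # Slowest frames first, so the worst ones sit at the front of the list.
    slowest_first = sorted(frame_times_ms, reverse=True)

    def low(divisor):
        # Assumed definition: average the slowest 1/divisor of all frames
        # (always at least one frame) and convert that back to FPS.
        count = max(1, len(slowest_first) // divisor)
        return 1000.0 / mean(slowest_first[:count])

    return {
        "avg": avg_fps,
        "low_1pct": low(100),     # slowest 1% of frames
        "low_0_1pct": low(1000),  # slowest 0.1% of frames
    }


# Synthetic example, not data from this thread:
# 990 frames at 10 ms plus 10 stutter frames at 20 ms.
example_run = [10.0] * 990 + [20.0] * 10
summary = fps_summary(example_run)
```

In the synthetic example the average barely moves because of the ten slow frames, while both lows fall to 50 FPS, which is why the lows are the more sensitive indicator of stutter.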

The following settings were used for each game (a * indicates the game was run from an SSD; everything else ran from an HDD):

Bioshock - Very low, 1080p, Welcome center / Rafael Square

Borderlands 3 - Very low, 1080p, Athenas *

Cyberpunk 2077 - Low, 1080p, Watson Market *

Deus Ex: Mankind Divided - Low, 1080p, DX11, Prague (city)

Divinity: Original Sin 2 - Very low, 1080p, Arx

Fallout 4 - Low, 1080p, Diamond City

GTA V - Normal (all optional settings disabled), 1080p, city *

No Man's Sky - Standard, 1080p, damp planet with flora *

Overwatch - Low, 1080p, reduced buffering, 400 FPS cap, Grandmaster competitive replay (Hanamura)

The Outer Worlds - Low, 1080p, the Groundbreaker

The Witcher 3 - Low, 1080p, Novigrad at night

XCOM 2 - Minimal, 1080p

The results:

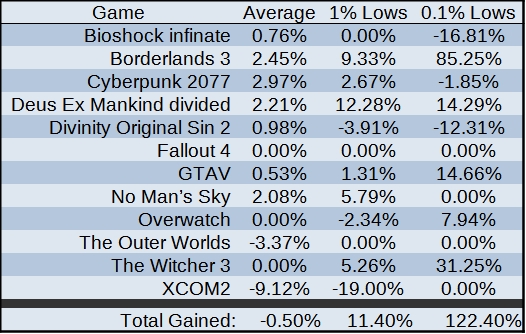

Average FPS differences are well within the margin of error. What is interesting, though, is that the 1% and 0.1% lows benefited, specifically in Borderlands 3 and The Witcher 3. I saw a significant reduction in the number of frame-time spikes (FPS drops) on Windows version 20H2 in Borderlands 3. The runs for that game were done with an end-game character, so they should have been as punishing as possible. On 20H1 the 0.1% minimums were between 45 and 85 FPS; on 20H2 they were between 93 and 133 FPS. The Witcher 3 behaved much the same: the 0.1% minimums were pushed up and the variation between the minimum and maximum values was reduced.
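To put the Borderlands 3 figures in perspective, here is a small sketch that summarises run-to-run variation from the reported bounds. It uses only the minimum and maximum 0.1% lows quoted above, since the individual per-run values are not listed here. The helper name `spread` and the "relative range" measure (range divided by the midpoint) are my own choices for illustration.

```python
def spread(values):
    """Summarise run-to-run variation for one metric (for example 0.1% lows)."""
    lowest, highest = min(values), max(values)
    midpoint = (lowest + highest) / 2
    return {
        "min": lowest,
        "max": highest,
        "range": highest - lowest,
        # Range relative to the midpoint, so results at different
        # FPS levels can be compared on the same footing.
        "relative_range": (highest - lowest) / midpoint,
    }


# Only the bounds quoted in the post; the per-run values are not given.
bl3_20h1 = spread([45, 85])
bl3_20h2 = spread([93, 133])

print(bl3_20h1["range"], bl3_20h2["range"])
print(round(bl3_20h1["relative_range"], 2), round(bl3_20h2["relative_range"], 2))
```

With these bounds the absolute range is 40 FPS on both versions, but the floor roughly doubles (45 to 93 FPS), so the variation relative to the overall level is noticeably smaller on 20H2.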

EDIT:

Corrected some chart color errors. LibreOffice was applying different colors to Bioshock even though the same colors were supposed to be applied to all data points.

System specs:

| Component | Model |
|---|---|
| Processor | Ryzen 5800X |
| Motherboard | ASRock X570 Taichi |
| Cooling | Le Grand Macho |
| Memory | 32GB DDR4 3600 CL16 |
| Video Card(s) | EVGA 1080 Ti |
| Storage | Too much |
| Display(s) | Acer 144Hz 1440p IPS 27" |
| Case | Thermaltake Core X9 |
| Audio Device(s) | JDS Labs The Element II, Dan Clark Audio Aeon II |
| Power Supply | EVGA 850W P2 |
