AsRock

| Processor | AMD 3900X \ AMD 7700X |
|---|---|
| Motherboard | ASRock AM4 X570 Pro 4 \ ASUS X670Xe TUF |
| Cooling | D15 |
| Memory | Patriot 2x16GB PVS432G320C6K \ G.Skill Flare X5 F5-6000J3238F 2x16GB |
| Video Card(s) | eVga GTX1060 SSC \ XFX RX 6950XT RX-695XATBD9 |
| Storage | Sammy 860, MX500, Sabrent Rocket 4 Sammy Evo 980 \ 1xSabrent Rocket 4+, Sammy 2x990 Pro |
| Display(s) | Samsung 1080P \ LG 43UN700 |
| Case | Fractal Design Pop Air 2x140mm fans from Torrent \ Fractal Design Torrent 2 SilverStone FHP141x2 |
| Audio Device(s) | Yamaha RX-V677 \ Yamaha CX-830+Yamaha MX-630 Infinity RS4000\Paradigm P Studio 20, Blue Yeti |
| Power Supply | Seasonic Prime TX-750 \ Corsair RM1000X Shift |
| Mouse | Steelseries Sensei wireless \ Steelseries Sensei wireless |
| Keyboard | Logitech K120 \ Wooting Two HE |
| Benchmark Scores | Meh benchmarks. |

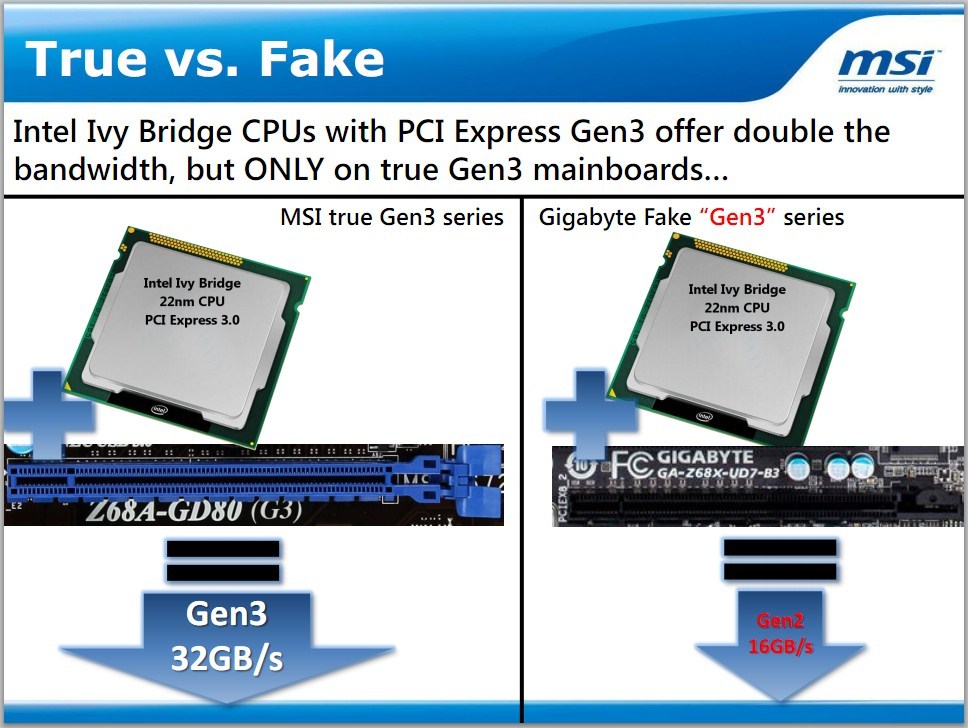

False advertising is pretty serious in the States. Can't the FTC look into this? It might suck to be Gigabyte pretty soon. I hope not though, they make good stuff.

Yes...

Isn't the jump to Gen 3 about the same as Gen 1 to Gen 2 in terms of performance gains? Maybe that's why Gigabyte (if they actually did that) skimped on it, since the extra bandwidth isn't used most of the time anyway.
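For a rough sense of the per-generation gains, here's a quick sketch. It assumes "Gen 3" here means PCI Express 3.0 (the post doesn't actually say) and uses the published per-lane signalling rates (2.5, 5 and 8 GT/s) and line encodings (8b/10b for Gen 1/2, 128b/130b for Gen 3). Packet/protocol overhead is ignored, so real-world throughput will be somewhat lower. The function names are just made up for this example.

```python
# Assumption: "Gen 1/2/3" refers to PCI Express generations.
# Per-lane raw signalling rate (GT/s) and line-encoding efficiency.
PCIE_GENS = {
    1: (2.5, 8 / 10),     # 8b/10b encoding
    2: (5.0, 8 / 10),     # 8b/10b encoding
    3: (8.0, 128 / 130),  # 128b/130b encoding
}


def lane_bandwidth_mb_s(gen: int) -> float:
    """Usable one-direction bandwidth per lane in MB/s (ignores packet overhead)."""
    gt_s, efficiency = PCIE_GENS[gen]
    # 1 GT/s = 1 Gbit/s on the wire per lane; keep the payload share,
    # convert Gbit/s to MB/s (x1000 / 8 bits per byte).
    return gt_s * efficiency * 1000 / 8


def link_bandwidth_gb_s(gen: int, lanes: int = 16) -> float:
    """Usable one-direction bandwidth for a whole link in GB/s."""
    return lane_bandwidth_mb_s(gen) * lanes / 1000


if __name__ == "__main__":
    for gen in (1, 2, 3):
        print(f"Gen {gen}: {lane_bandwidth_mb_s(gen):.1f} MB/s per lane, "
              f"{link_bandwidth_gb_s(gen):.2f} GB/s for x16")
    print(f"Gen 1 -> Gen 2: {lane_bandwidth_mb_s(2) / lane_bandwidth_mb_s(1):.2f}x")
    print(f"Gen 2 -> Gen 3: {lane_bandwidth_mb_s(3) / lane_bandwidth_mb_s(2):.2f}x")
```

Going by these numbers, Gen 1 to Gen 2 is exactly 2x per lane, and Gen 2 to Gen 3 comes out at roughly 1.97x: the rate only goes from 5 to 8 GT/s, but dropping the 20% overhead of 8b/10b encoding makes up most of the difference.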

I meant a more detailed summary
