
| Processor | Intel i9-9900KS @ 5.2 GHz |
|---|---|
| Motherboard | Gigabyte Z390 Aorus Master |
| Cooling | Corsair H150i Elite |
| Memory | 32GB Viper Steel Series DDR4-4000 |
| Video Card(s) | RTX 3090 Founders Edition |
| Storage | 2TB Sabrent Rocket NVMe + 2TB Intel 960p NVMe + 512GB Samsung 970 Evo NVMe + 4TB WD Black HDD |
| Display(s) | 65" LG C9 OLED |
| Case | Lian Li O11D-XL |
| Audio Device(s) | Audeze Mobius headset, Logitech Z906 speakers |
| Power Supply | Corsair AX1000 Titanium |

You're confusing value in a debate about performance; they're not the same thing at all, nor is it valid in any way. :shadedshu

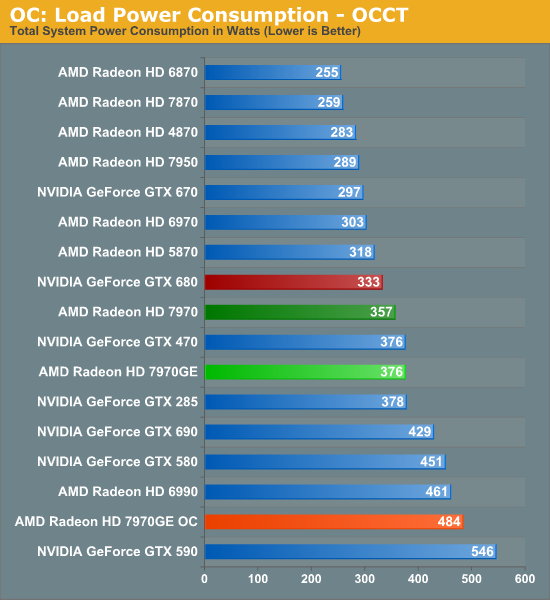

Except that your 680 isn't going to outperform an overclocked 7970. They'll be about the same.

And I'm not confusing anything... the 7970 and 670 are about the same, and the 7970GE and 680 are about the same. If you overclock the 7970 or 7970GE, they'll match an overclocked 680 - trading blows depending on the game/test and overall being about the same.

I know you have to defend your silly purchase of an overclocking-oriented, voltage-locked card, though.

The context switching is also simply convenient, and Kepler has vastly improved context switching, so at some point two cards would not be required.


(j/k)
