To my understanding, there is no way to actually measure VRAM usage, just VRAM allocation. The way this is often explained is this: you have a Visa card with a $5,000 limit. You bought a new GFX card for $500, so at this point $500 is the amount of credit "used". However, when you apply for a car loan next week and they run a credit check, the credit agencies will report:

"Visa - $5,000"

So it's measuring RAM "allocated", not used. If you play a game on a 4 GB card, it may allocate 3 GB for the game; if you do the same on an 8 GB card, it might allocate 6 GB. Why? As the saying goes, "cause it's there". We have seen this tested over and over again from the 7xx series to the current generation and, outside of 4K, the performance is usually identical. When it isn't, it's at 4K, and the settings have to be pushed so high to show a significant difference that performance ends up in the unplayable (15 - 25 fps) range.
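To make the allocated-versus-used distinction concrete, here is a toy Python model. It is only a sketch: the 75% reservation ratio comes from the 3 GB of 4 GB and 6 GB of 8 GB figures above, while the 2.5 GB working set and the `ToyGame` class are made up for illustration and do not describe how any real engine or driver behaves.

```python
from dataclasses import dataclass

RESERVE_FRACTION = 0.75  # assumption: matches 3 of 4 GB and 6 of 8 GB above


@dataclass
class ToyGame:
    """Hypothetical game that reserves VRAM in proportion to card size."""
    working_set_gb: float  # made-up figure: memory the game actually touches

    def allocated_gb(self, card_gb: float) -> float:
        # What monitoring tools would report: the amount requested.
        return card_gb * RESERVE_FRACTION

    def used_gb(self, card_gb: float) -> float:
        # What we would like to know: memory actually touched,
        # capped by whatever was reserved.
        return min(self.working_set_gb, self.allocated_gb(card_gb))


game = ToyGame(working_set_gb=2.5)
for card_gb in (4, 8):
    print(f"{card_gb} GB card: allocated {game.allocated_gb(card_gb):.1f} GB, "
          f"used {game.used_gb(card_gb):.1f} GB")
```

In this model the reported number doubles on the bigger card while the amount actually touched stays the same, which is the situation the tools cannot distinguish.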

https://www.extremetech.com/gaming/...y-x-faces-off-with-nvidias-gtx-980-ti-titan-x

We spoke to Nvidia’s Brandon Bell on this topic, who told us the following: “None of the GPU tools on the market report memory usage correctly, whether it’s GPU-Z, Afterburner, Precision, etc. They all report the amount of memory requested by the GPU, not the actual memory usage. Cards with larger memory will request more memory, but that doesn’t mean that they actually use it. They simply request it because the memory is available.”

First, there’s the fact that out of the fifteen games we tested, only four could be forced to consume more than the 4GB of RAM. In every case, we had to use high-end settings at 4K to accomplish this.

So far, outside of odd instances like poor console ports, I have yet to see a card that comes in two VRAM flavors do better with the higher VRAM at 1080p or 1440p.

680 -

https://www.pugetsystems.com/labs/articles/Video-Card-Performance-2GB-vs-4GB-Memory-154/

770 -

http://alienbabeltech.com/main/gtx-770-4gb-vs-2gb-tested/3/ (Dead Link) *

960 -

http://www.guru3d.com/articles_pages/gigabyte_geforce_gtx_960_g1_gaming_4gb_review,12.html

Regarding the dead link in the 770 test, you can turn the sound off, as you likely won't understand the language, but you can see the results here with the 770 2 GB / 4 GB at up to 5760 x 1080.

What this article showed is that:

- at 1080p and 1440p performance was virtually identical with the 2GB card sometimes being faster.

- where they were able to show 4GB did better (5760 x 1080), does it really matter if 20 fps is 33% faster than 15 fps ?

- When they could not install Max Payne on the 2 GB card at 5760 x 1080 ("not enough memory" message), it installed fine on the 4 GB card, but once installed, fps and image quality were identical when they switched the 2 GB card back in. With the game already installed, it didn't catch the switch.

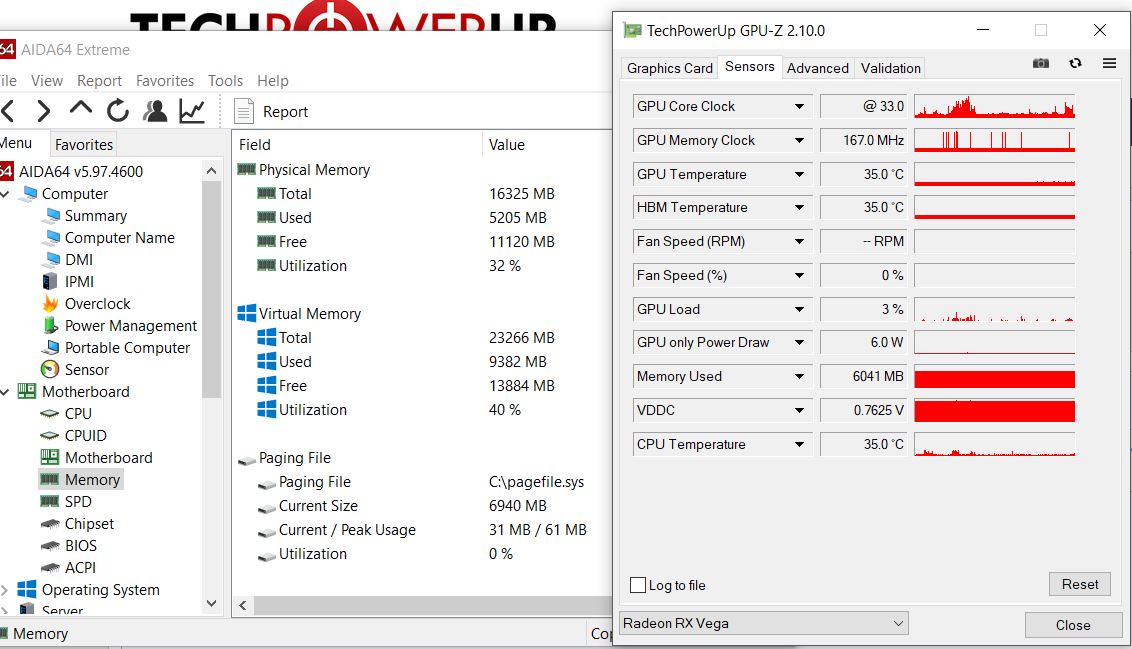

I initially thought that the TPU reviews of the two MSI 1060s finally showed a difference, but the "aha moment" comes when you realize that the 6 GB 1060 has 11% more shaders, so of course it's faster. But if VRAM had an impact on performance, we should be able to see it by comparing the 1080p and 1440p results: if VRAM mattered, the gap between the 3 GB and 6 GB cards should widen at the higher res ... it didn't. Right now, for example, the 3 GB MSI Gaming X is $288 and the 6 GB is $328. At 1080p, I'd choose the 3 GB, as 6 GB will never be needed. For $40, I'd be inclined to spring for the 6 GB at 1440p as a "just in case" future consideration, but it wasn't long ago that the price difference was $100. So it sure would be useful if we could measure "usage" vs "allocation".
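The resolution check described above can be written down in a few lines of Python. This is a sketch only: `relative_gap` and `gap_widens` are names invented here, the 2-point tolerance is an arbitrary allowance for run-to-run noise, and the fps values in the usage lines are placeholders, not measurements from the TPU reviews. The idea is that a gap explained purely by shader count should stay roughly constant across resolutions, while a VRAM limit should make it grow at the higher resolution.

```python
def relative_gap(faster_fps: float, slower_fps: float) -> float:
    """Percentage by which faster_fps exceeds slower_fps."""
    return (faster_fps - slower_fps) / slower_fps * 100.0


def gap_widens(gap_low_res: float, gap_high_res: float,
               tolerance: float = 2.0) -> bool:
    """True if the gap grows by more than `tolerance` percentage points."""
    return gap_high_res - gap_low_res > tolerance


# Placeholder numbers, NOT benchmark results.
gap_1080p = relative_gap(faster_fps=100.0, slower_fps=92.0)
gap_1440p = relative_gap(faster_fps=70.0, slower_fps=64.0)

print(f"1080p gap: {gap_1080p:.1f}%, 1440p gap: {gap_1440p:.1f}%")
if gap_widens(gap_1080p, gap_1440p):
    print("Gap widens with resolution: VRAM may be a factor")
else:
    print("Gap is roughly flat: consistent with shader count alone")
```

The same `relative_gap` helper gives the 33% figure for the 20 fps versus 15 fps case mentioned earlier.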

I'd love to hear ... well, see ... Wizard's take on this allocation versus usage topic and whether we will ever really know how much VRAM a game is actually using. I'm not sure manufacturers want us to know ...