I didn't use fstab via terminal (don't know how). The drive is about 10 days old: a bit too soon to be failing, no?

Drive failures tend to cluster at two points: shortly after the drive is brand new, and after several years of use. Two of the 4 WD Blacks in my tower died within a couple of days of my getting them (damn near lost all the data on my RAID 5), so to be honest it wouldn't surprise me if yours is failing early. SMART should be able to tell you if that's the case.

I didn't use fstab via terminal (don't know how).

So, fstab is a file at /etc/fstab. The name stands for "File System Table," and it describes what gets mounted where at boot. I just opened mine in vim, and this is how it looks in my vim setup (I use vim for development):

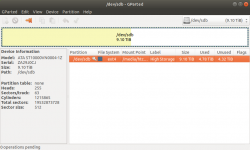
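If you'd rather not open an editor, each real line in fstab is just whitespace-separated fields: device, mount point, filesystem type, options, then two numbers for dump and fsck order. Here's a minimal sketch that prints the first four in a readable form. The name `fstab_summary` is something I made up, not a standard tool, and it only reads whatever text you feed it.

```shell
# fstab_summary: read fstab-formatted text on stdin and print
# device, mount point, type and options for each real entry.
# (Hypothetical helper name; it never modifies anything.)
fstab_summary() {
    awk '
        /^[ \t]*#/ { next }   # skip comment lines
        NF < 4     { next }   # skip blank or incomplete lines
        { printf "%s on %s type %s (%s)\n", $1, $2, $3, $4 }
    '
}

# Usage on a real system:
#   fstab_summary < /etc/fstab
```

If an entry points at /dev/sdb rather than something like /dev/sdb1, that's the whole disk being mounted, which ties into the rest of this post.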

I'll give you a quick crash course on disks in Linux, but the short version is that ext4 doesn't know what to do with sdb because that's not a partition; it's the entire disk.

So, you have sda, sda1, and sdb.

sda is the first disk in your system and sda1 is the first partition on that disk. This is likely your boot disk, and sda1 is likely your root partition.
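To make the naming rule concrete, here's a small sketch that guesses from the name alone whether something is a whole disk or a partition. `dev_kind` is a made-up helper, and it only knows the common sdX and NVMe patterns, so treat anything it calls "unknown" as just that.

```shell
# dev_kind: guess "disk" or "partition" from a device name.
# Hypothetical helper; it looks only at the name, never at the hardware.
dev_kind() {
    name=${1#/dev/}                         # accept "sda1" or "/dev/sda1"
    case "$name" in
        nvme*n*p[0-9]*) echo partition ;;   # e.g. nvme0n1p1
        nvme*n[0-9]*)   echo disk ;;        # e.g. nvme0n1
        sd*[0-9])       echo partition ;;   # e.g. sda1
        sd[a-z]*)       echo disk ;;        # e.g. sda, sdb
        *)              echo unknown ;;     # dm-0, loop0, etc.
    esac
}

# Usage:
#   dev_kind /dev/sdb
```

On a real system, `lsblk` shows the same thing as a tree, with a TYPE column saying disk or part.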

sdb is the second disk. Since it has no numbered partitions, there could be a couple of things going on:

- The ext4 file system was created directly on the block device and not on a partition. (A little weird but completely valid; you would have had to go out of your way to do this.)

- The ext4 file system was put on an LVM volume using sdb. If this is the case, "ls -l /dev/mapper" should show something other than just "control". This is also weird, because LVM typically gets put on a partition, much like ext4.

- The partition simply doesn't exist.

- The drive is dying.
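One rough way to narrow down the list above is to ask blkid what signature it sees on the raw device. This is only a sketch: `classify_blkid` is a name I made up, and it assumes the KEY=value lines that `blkid -p -o export` prints (TYPE for a filesystem or LVM signature, PTTYPE for a partition table). It can't tell you on its own whether the drive is dying; that's what SMART is for.

```shell
# classify_blkid: read `blkid -p -o export` output on stdin and
# say which situation it points to. Hypothetical helper name.
classify_blkid() {
    fstype=
    pttype=
    while IFS='=' read -r key value; do
        case "$key" in
            TYPE)   fstype=$value ;;   # filesystem or LVM signature
            PTTYPE) pttype=$value ;;   # partition table type (gpt, dos)
        esac
    done
    if [ "$fstype" = "LVM2_member" ]; then
        echo "LVM physical volume: look in /dev/mapper"
    elif [ -n "$fstype" ]; then
        echo "$fstype filesystem directly on the device"
    elif [ -n "$pttype" ]; then
        echo "$pttype partition table: mount a partition, not the whole disk"
    else
        echo "no signature found: unpartitioned, wiped, or unreadable"
    fi
}

# Usage:
#   sudo blkid -p -o export /dev/sdb | classify_blkid
```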

I would see what is in /dev/mapper first. Mine has entries from dmraid for my RAID 0 and RAID 5 arrays:

Code:

jdoane@Kratos:~$ ls -l /dev/mapper

total 0

crw------- 1 root root 10, 236 May 26 05:37 control

brw-rw---- 1 root disk 253, 0 May 26 05:37 isw_cfaabaebjb_HDD

lrwxrwxrwx 1 root root 7 May 26 05:37 isw_cfaabaebjb_HDD1 -> ../dm-4

brw-rw---- 1 root disk 253, 1 May 26 05:37 isw_feffbdib_SSD

lrwxrwxrwx 1 root root 7 May 26 05:37 isw_feffbdib_SSD1 -> ../dm-2

lrwxrwxrwx 1 root root 7 May 26 05:37 isw_feffbdib_SSD2 -> ../dm-3

If there is nothing there, I would see what the partition table of /dev/sdb looks like with fdisk (for you that would be "sudo fdisk -l /dev/sdb"). Here is what it prints for my own devices:

Code:

jdoane@Kratos:~$ sudo fdisk -l /dev/dm-0

Disk /dev/dm-0: 2.6 TiB, 2850567094272 bytes, 5567513856 sectors

Units: sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 65536 bytes / 196608 bytes

Disklabel type: gpt

Disk identifier: A5F066E9-57BB-4C63-BA6C-76DD37D432FF

Device Start End Sectors Size Type

/dev/mapper/isw_cfaabaebjb_HDD1 384 5567513471 5567513088 2.6T Linux filesystem

jdoane@Kratos:~$ sudo fdisk -l /dev/dm-1

Disk /dev/dm-1: 212.4 GiB, 228061347840 bytes, 445432320 sectors

Units: sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 16384 bytes / 32768 bytes

Disklabel type: gpt

Disk identifier: 88041F70-AB31-4AD9-88D2-E59B104D8D9A

Device Start End Sectors Size Type

/dev/mapper/isw_feffbdib_SSD1 2048 194559 192512 94M EFI System

/dev/mapper/isw_feffbdib_SSD2 194560 445431807 445237248 212.3G Linux filesystem

jdoane@Kratos:~$ sudo fdisk -l /dev/nvme0

nvme0 nvme0n1 nvme0n1p1

jdoane@Kratos:~$ sudo fdisk -l /dev/nvme0n1

Disk /dev/nvme0n1: 477 GiB, 512110190592 bytes, 1000215216 sectors

Units: sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disklabel type: gpt

Disk identifier: A80068C3-BCCF-4880-B661-E01D693D2630

Device Start End Sectors Size Type

/dev/nvme0n1p1 2048 1000214527 1000212480 477G Linux filesystem

Edit: You might want to check SMART on that drive as well:

Code:

sudo smartctl --all /dev/sdb
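The full --all output is long. If you just want the overall verdict, `smartctl -H` prints a single health line, and a small filter can pull the result out of it. A sketch, assuming the usual wording smartctl uses for ATA drives ("SMART overall-health self-assessment test result: ...") and for SCSI/SAS drives ("SMART Health Status: ..."); `smart_verdict` is a made-up name.

```shell
# smart_verdict: extract the health result from `smartctl -H` text on stdin.
# Hypothetical helper; prints nothing if no known health line is found.
smart_verdict() {
    sed -n \
        -e 's/^SMART overall-health self-assessment test result: *//p' \
        -e 's/^SMART Health Status: *//p'
}

# Usage:
#   sudo smartctl -H /dev/sdb | smart_verdict
```

A PASSED here doesn't guarantee the drive is fine, so it's still worth reading the attribute table from --all if the drive keeps misbehaving.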