I really don't understand all the doom and gloom this announcement is producing. If you really think about it, it was going to have to happen eventually. Let's take a long look at the events that have brought AMD to this point.

AMD has historically always suffered under Intel's shadow. The only times they've been able to outmaneuver Intel is when they've gone for the "hail mary pass", so to speak. Think about it: what was the last truly innovative CPU technology that Intel produced on its own? If you don't count instruction set extensions, which can be of dubious use, I'd say Hyper-Threading. The one before that, on-die L2. That's it. The L2 would have been pretty obvious to anyone. There could be some debate about Hyper-Threading, but everyone knew multi-core CPUs were coming eventually. Hyper-Threading was just a step in between single core and multi-core. On the other hand, AMD has produced a lot of innovations that are in common use today, even though a lot of people (even me) saw dubious worth in them at the time.

When Intel's NetBurst was first on the drawing board it was all about clock speed. AMD and Intel had been trading blows and neither really had the upper hand, even with AMD being the first to reach 1GHz. So while Intel pursued their failed NetBurst architecture (it never scaled in the manner Intel thought it would) and ever-climbing FSB speeds, AMD introduced a much more efficient core architecture, making overall clock speed less important. They then added the following. First, an on-die memory controller, making the FSB less of an issue. Second, a 64-bit extension to the x86 instruction set. I'll admit I was even one to say "so what, other than memory limits, why do we need 64-bit?" Okay, I was wrong. How many people still run a 32-bit OS? Third, they introduced the first true dual core. And lastly, they introduced on-die GPUs. All innovations that AMD pioneered and Intel later adopted.

AMD became king of the mountain around the time of the 64-bit extensions. In fact, that's why they first introduced the FX line of processors. Insanely priced, but the best you could get. As it later came out, and was well documented, the only way Intel could even compete was by forcing OEMs to only carry Intel products. Intel almost lost the race, except for a strange turn of events. Enthusiasts had started to use the Pentium M processor (part of the Centrino brand) for desktop use. While Intel may not be as innovative as AMD, that doesn't mean they're not smart, and they saw the potential the Pentium M had. I'd say that Intel's biggest strengths are its almost inexhaustible resources and its ability to turn on a dime and refocus on more promising avenues. Which is exactly what they did. Intel has taken the Pentium M and over time added all of AMD's innovations, to the point where they totally dominate the CPU market. In truth, AMD's only recourse is to offer deep discounts on their flagship products. It's sad, but it's also true.

So what can AMD do to once more get out of Intel's shadow? It's pretty obvious that no matter what AMD does with their current processors, they will always be playing second fiddle to Intel's. They'll never be a threat. In fact, as has been shown with Intel's last generation, Intel doesn't even consider AMD a threat anymore. Instead of increasing CPU performance, they seem to be concentrating on reducing power consumption and heat. And that makes sense because of the rise of mobile computing and how ARM has risen to become a major player. Intel has its sights set on taking on ARM, not AMD. But this gives AMD an advantage they haven't had since the Athlon 64 days: room to breathe and maneuver.

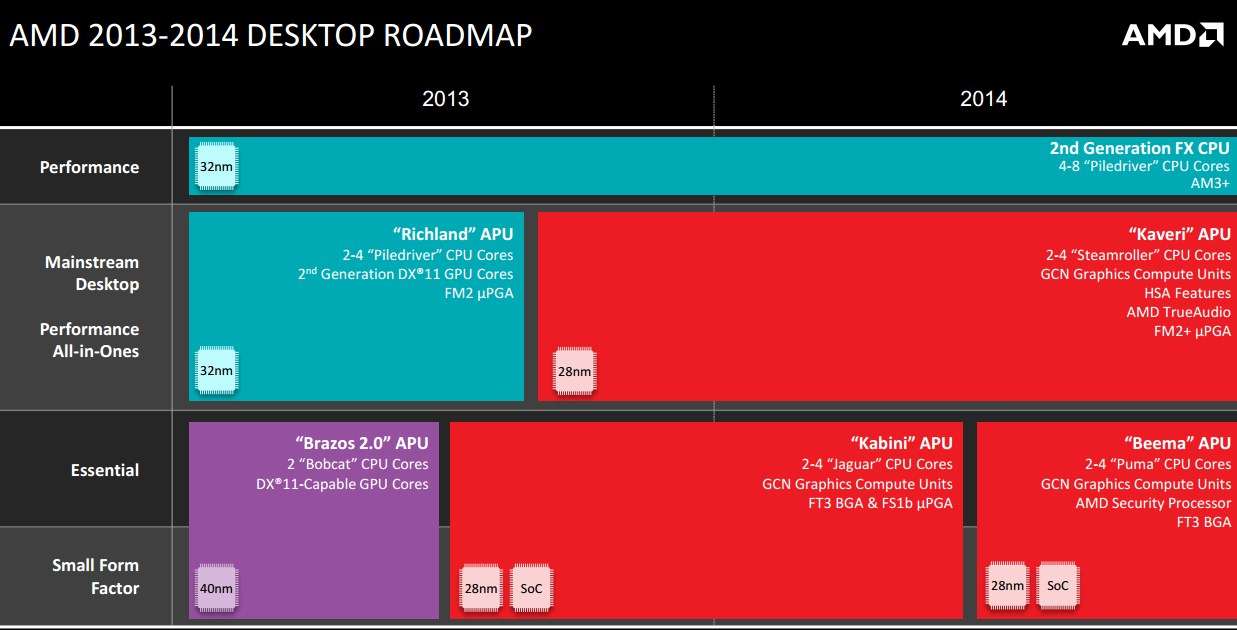

So what can they do with this breathing room? Well, they could keep trying to improve traditional processor performance, but we all know where that will lead. No, AMD needs to do what AMD does best. Come out of left field with a new technology that no one thinks is viable, just like they did in the past. So what does AMD have that Intel just can't touch? GPU performance on their APU dies. While Intel has made great leaps in this area, they'll never be able to touch AMD; they simply have too much of a lead. So AMD needs to leverage that advantage in the best way it can. How can they? While it's true that more and more applications are taking advantage of multi-core CPUs, simply adding more and more cores is eventually a dead-end solution. Instead, why not throw that "hail mary pass"? Produce an entirely different approach, something like a heterogeneous CPU.

http://en.wikipedia.org/wiki/Heterogeneous_computing

AMD already has many of the parts in place on the CPU die, and if their heterogeneous initiative (http://hsafoundation.com/) succeeds, they might just pull it off. All they really need once it's up and running is a "killer app" and AMD will quite likely make an end run around Intel.

With all that in mind, AMD can't afford to be split into an APU company and a CPU company. This is an all-or-nothing play. A true "hail mary pass". So eventually AMD was going to have to go this route anyway. I'm just hoping that it means AMD's heterogeneous system architecture isn't that far away. It just might be one of the biggest game changers for desktop computing we've seen yet, and save AMD's bacon in the process.