Monday, January 6th 2014

Philips Unveils 27-Inch Monitor with NVIDIA G-SYNC

The Philips 27" Gaming Monitor with G-SYNC debuts today, bringing a stunning visual experience and ultra-smooth play to gamers looking for a serious competitive edge. This advanced Philips gaming display (model 272G5DYEB) delivers revolutionary performance through NVIDIA G-SYNC, a new technology that synchronizes the display's refresh rate to the PC's GPU, eliminating screen tearing and minimizing display stutter and input lag. With G-SYNC, images display the moment they are rendered, scenes appear instantly, objects are sharper, and game play is smoother.

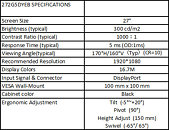

The Philips 27" Gaming Monitor with G-SYNC will be showcased at the 2014 International CES in Las Vegas at both Pepcom's Digital Experience! event and the NVIDIA booth, which is located at LVCC, South Hall 3 - 30207. The display will be available for purchase in spring 2014 for $649 MSRP.

In addition to delivering consistently smooth frame rates and ultrafast response through G-SYNC display technology, the Philips 27" Gaming Monitor offers a 144 Hz refresh rate with a 1 ms response time for fast action, 300 cd/m² brightness, a 1000:1 typical contrast ratio, and up to 16.7M colors. The slim black monitor is both wall mountable and height adjustable.

"This 27-inch Philips monitor is a perfect gaming partner, with G-SYNC technology bringing breakthrough display performance and giving major competitive edge," said Chris Brown, TPV Global Product Marketing. "We believe anyone who really cares about their gaming experience is going to want a G-SYNC-enabled display."

19 Comments on Philips Unveils 27-Inch Monitor with NVIDIA G-SYNC

Show me one video card that can play the latest games at 1440p with a minimum of 120 fps and we'll talk ;)

awesome indeed

It doesn't say anything about supporting LightBoost though and that's critical for removing motion blur.

G-Sync apparently supports something better than LightBoost: ULMB (Ultra Low Motion Blur).

I still want to see G-Sync IRL. Here's hoping that computer store nearby has a demo of it some day.

I'm not gonna invest in such a monitor in the year 2014.

So from the title alone without having clicked on the link it should have been obvious what the resolution was going to be.

At this point, no matter what, any monitor is going to be a compromise in some way. I have two 27" 2560x1440 monitors and three 27" 1920x1080 monitors. They are all great IMO and I'll stick with them for the foreseeable future. However, none have G-Sync. IMO, G-Sync isn't necessarily a must-have feature, but it does fix a problem that has plagued games and gamers for some time.

Having said that, my next monitor is going to be a 4K monitor and thus likely won't have G-Sync.

People just have to prioritize what is important to them.

I'm glad to see more G-Sync products trickling in either way.

True for LightBoost. (unofficial hack)

False for ULMB / Turbo240 / BENQ Blur Reduction. (official pushbutton strobe backlights)

More specifically, this applies to the newer, next-generation strobe backlights (turned ON/OFF with a pushbutton on the monitor). You could technically be right that there are minor calibration differences, but in many cases they are smaller than the color difference between 120Hz and 144Hz (which each require different color calibration).

You still lose a lot of brightness, but the good news is that all the next-generation strobe backlights are more adjustable (via the monitor's own settings), without resorting to the complicated tweaks/hacks that LightBoost required. The main change is the loss of brightness; the colors no longer distort massively the way they did with LightBoost. There are now half a dozen brands of strobe backlight other than LightBoost. Manufacturers recently realized that people wanted LightBoost and are starting to introduce equivalents, often with fewer disadvantages. Don't forget I said "pushbutton". Easy to turn ON/OFF. :)
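The brightness-versus-clarity trade-off described in this comment can be sketched with a simple persistence model. This is a rough illustration, not how any vendor specifies its strobe mode: it assumes perceived blur width is approximately eye-tracking speed times persistence (how long each frame stays lit), and that average brightness scales with the fraction of time the backlight is on. The helper names `persistence_ms`, `blur_px`, and `relative_brightness` are hypothetical, and the 2 ms pulse width is an illustrative value, not a LightBoost or ULMB specification.

```python
from typing import Optional

# Hypothetical helpers, for illustration only. Assumptions:
# - perceived blur width ~= eye-tracking speed x persistence (time each frame is lit)
# - average brightness scales with the fraction of time the backlight is on
# The strobe pulse width used below is illustrative, not a LightBoost/ULMB spec.


def persistence_ms(refresh_hz: float, strobe_ms: Optional[float] = None) -> float:
    """How long each frame stays visible, in milliseconds.

    Sample-and-hold (strobe_ms=None) keeps the frame lit for the whole refresh
    period; a strobed backlight lights it only for the pulse width.
    """
    period_ms = 1000.0 / refresh_hz
    if strobe_ms is None:
        return period_ms
    return min(strobe_ms, period_ms)


def blur_px(speed_px_per_s: float, persistence: float) -> float:
    """Approximate blur width in pixels for motion tracked by the eye."""
    return speed_px_per_s * persistence / 1000.0


def relative_brightness(refresh_hz: float, strobe_ms: Optional[float] = None) -> float:
    """Backlight duty cycle: 1.0 for sample-and-hold, lower when strobing."""
    return persistence_ms(refresh_hz, strobe_ms) * refresh_hz / 1000.0


if __name__ == "__main__":
    speed = 1000.0  # pixels per second, e.g. a fast pan
    for label, hz, pulse in [("144 Hz sample-and-hold", 144.0, None),
                             ("120 Hz strobed, 2 ms pulse", 120.0, 2.0)]:
        p = persistence_ms(hz, pulse)
        print(f"{label}: blur ~{blur_px(speed, p):.1f} px, "
              f"brightness x{relative_brightness(hz, pulse):.2f}")
```

Under these assumptions, a 1000 px/s pan smears across roughly 6.9 px at full brightness on a 144 Hz sample-and-hold panel, versus about 2 px at roughly a quarter of the brightness with a 2 ms strobe at 120 Hz, which is why strobe modes trade brightness for clarity.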

Two citations:

(1) Almost all "1ms" monitors now include some form of a strobe backlight.

Brands include: LightBoost, ULMB (Ultra Low Motion Blur), Turbo240, and BENQ Blur Reduction

(2) All G-SYNC monitors include a strobe backlight, according to information leaked last October:

www.blurbusters.com/confirmed-nvidia-g-sync-includes-a-strobe-backlight-upgrade/

Recently, users who received their G-SYNC upgrade kits early have revealed that this mode is called ULMB (Ultra Low Motion Blur).

They're freaking insane. I'd rather get (another) 27" IPS screen at that price, which would be 1440p.

Get me a 1440p IPS panel with G-Sync at that price and we'll begin the conversation. As for those saying "well, can it run at 120FPS?", it won't matter with G-Sync, as you're outputting frames synced with the monitor.

Anyway, if you play a game with very large framerate variances, chances are that your monitor will also change its average brightness over time, being dim in low-fps areas and bright in high-framerate areas (cumulative brightness effects for low-latency pixels), unless the technology compensates for this effect.
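The effect this comment predicts can be written down under a simple, hypothetical model (the comment does not specify a mechanism): the backlight fires one pulse of fixed width t_pulse at peak luminance L_peak per refresh, and the refresh rate f_refresh follows the frame rate. The average luminance is then proportional to the duty cycle:

```latex
\bar{L} = L_{\text{peak}} \cdot \frac{t_{\text{pulse}}}{T_{\text{refresh}}}
        = L_{\text{peak}} \cdot t_{\text{pulse}} \cdot f_{\text{refresh}}
```

With an illustrative 2 ms pulse, the duty cycle is 0.002 × 144 = 0.288 at 144 fps but 0.002 × 60 = 0.12 at 60 fps, so the same scene would look 2.4 times brighter in the fast section unless the pulse width or peak luminance is adjusted to compensate.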

Tearing is a minor inconvenience for me...

With G-Sync, each frame is displayed as soon as it is output to the monitor. Yes, it's currently limited to 30-144 FPS/Hz, but don't forget that with the monitor's refresh rate locked to the framerate, your experience will still be perceived as SMOOTHER on a G-Sync monitor than on one using standard V-Sync.
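Why a variable refresh rate feels smoother than V-Sync at the same frame rate can be illustrated with a toy timing model. This is a simplified sketch, not NVIDIA's implementation: `vsync_present`, `vrr_present`, and `intervals` are hypothetical helpers, the V-Sync model ignores GPU back-pressure (the renderer blocking on a full buffer), and the variable-refresh model only enforces the 144 Hz ceiling, not the 30 Hz floor at which the panel re-scans an old frame.

```python
import math
from typing import List

# Simplified models, for illustration only (not NVIDIA's implementation).
# Times are in milliseconds. `ready_ms` lists when the GPU finishes each frame.


def vsync_present(ready_ms: List[float], refresh_hz: float) -> List[float]:
    """Fixed refresh with V-Sync: a finished frame waits for the next refresh
    tick, and at most one new frame is scanned out per tick. GPU back-pressure
    (the renderer blocking on a full buffer) is not modeled."""
    period = 1000.0 / refresh_hz
    shown: List[float] = []
    last_tick = -1
    for t in ready_ms:
        tick = max(math.ceil(t / period), last_tick + 1)
        shown.append(tick * period)
        last_tick = tick
    return shown


def vrr_present(ready_ms: List[float], max_hz: float = 144.0) -> List[float]:
    """Variable refresh (G-SYNC-style): the panel refreshes as soon as a frame is
    ready, but never sooner than 1/max_hz after the previous refresh. The 30 Hz
    floor (re-scanning an old frame when the GPU is too slow) is not modeled."""
    min_interval = 1000.0 / max_hz
    shown: List[float] = []
    prev = -math.inf
    for t in ready_ms:
        s = max(t, prev + min_interval)
        shown.append(s)
        prev = s
    return shown


def intervals(times: List[float]) -> List[float]:
    """Gaps between consecutive on-screen frame updates."""
    return [b - a for a, b in zip(times, times[1:])]


if __name__ == "__main__":
    # GPU delivering a steady 50 fps (one frame every 20 ms).
    ready = [20.0 * i for i in range(1, 7)]
    print("60 Hz V-Sync:", [round(x, 1) for x in intervals(vsync_present(ready, 60.0))])
    print("Variable refresh:", [round(x, 1) for x in intervals(vrr_present(ready))])
```

With a steady 50 fps, the variable-refresh model puts every frame on screen 20 ms apart, while the 60 Hz V-Sync model shows four frames 16.7 ms apart and then holds one for 33.3 ms. That periodic hitch is the stutter that G-Sync is meant to remove.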