Wednesday, December 5th 2007

NVIDIA GeForce 8800 GT Gets a New Stock Cooler, Quieter and Cooler

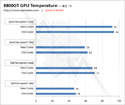

For a high-performance card like the GeForce 8800 GT, it seems a single-slot cooling solution is not enough. Following reports of the card running above 90°C under load with a loud fan, NVIDIA has decided to upgrade the standard 8800 GT with a new cooler. The new cooler uses a larger fan (75x10mm, up from 65x10mm) running at a lower speed (1664 RPM, down from 4333 RPM), which should make for a quieter and more efficient cooling solution.
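To put the quoted fan figures in proportion, here is a rough back-of-the-envelope sketch. It only does arithmetic on the numbers above; the helper name `fan_stats` is made up for illustration, the swept area ignores the fan hub, and blade-tip speed is at best a loose proxy for noise, not a measurement of it.

```python
import math

def fan_stats(diameter_mm, rpm):
    """Return (swept disc area in mm^2, blade-tip speed in m/s).

    Simplifying assumption: the fan is treated as a full disc,
    so the hub in the middle is ignored.
    """
    area_mm2 = math.pi * (diameter_mm / 2) ** 2
    tip_speed_ms = math.pi * (diameter_mm / 1000) * rpm / 60
    return area_mm2, tip_speed_ms

# Figures quoted in the article
old_area, old_tip = fan_stats(65, 4333)
new_area, new_tip = fan_stats(75, 1664)

area_ratio = new_area / old_area   # new swept area relative to old
rpm_ratio = 1664 / 4333            # new speed relative to old

print(f"Swept area: {area_ratio:.2f}x the old fan")
print(f"Fan speed:  {rpm_ratio:.0%} of the old fan")
print(f"Tip speed:  {old_tip:.1f} m/s -> {new_tip:.1f} m/s")
```

By this estimate the new fan sweeps roughly a third more area while spinning at well under half the speed, so its blade tips move at less than half the old speed. Whether that translates into the claimed noise and temperature gains depends on the blade and heatsink design, which these numbers say nothing about.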

Source: Expreview

23 Comments on NVIDIA GeForce 8800 GT Gets a New Stock Cooler, Quieter and Cooler

What was the ambient temperature when those idle/load temps were taken???

I have my PC in the basement and it is like 65 degrees Fahrenheit down there. I'm sure my 8800 GT will be fine even with the "old" cooler.

Edit: I mean... EPIC FAIL!

Now.. if only NVIDIA had included a bigger fan with the same RPM as the old fan, I'm sure there would have been a bigger difference in temperatures :p

I'm sure they measured temps in the labs before shipment. D'you expect me to believe that they didn't say, "Hey, this is hot and noisy, how about putting a bigger fan in?" before establishing the stock cooler?

Something like this included picture. Sorry for it being ugly, but the idea is obvious. I think that would be awesome. It could even be some cheap plastic piece that will do nothing but guide the air out the back (maybe even UV reactive for show).

price of 8800GT 256MB

www.newegg.com/Product/ProductList.aspx?Submit=Property&N=2010380048&PropertyCodeValue=679%3A32704%2C679%3A28976&bop=And&Order=STOCK

performance 8800GT 256MB

en.expreview.com/?p=64 (not sure if these are legit either)

But that's what I think: bye bye 512 GT, hello 512 GTS and 256MB GT.

On the other hand, I think that nVidia is trying to distract people from the fact that it is almost impossible to get an 8800 GT these days by getting "new and improved" versions out, like the new cooler or the 1GB version...

The truth is that the 8800 GT is not very profitable for nVidia, so the lack of stock, coupled with the huge popularity of this card, is a huge marketing tool for the new nVidia 8800 GTS. They "sell" 8800 GTs, people buy "the next best thing", the 8800 GTS, as the GT is not in stock, and nVidia makes 100 bucks extra! I LOVE corporate thinking... ;)