| System Name | It's just a computer |
|---|---|
| Processor | i9-14900K Direct Die |
| Motherboard | MSI Z790 ACE MAX |
| Cooling | 4X D5T Vario, 2X HK Res, 3X Nemesis GTR560, NF-A14-iPPC3000PWM, NF-A14-iPPC2000PWM, IceMan DD |
| Memory | TEAMGROUP FFXD548G8000HC38EDC01 w/Alphacool Apex RAM X4 Water Cooler and Core DDR5-RAM Module |
| Video Card(s) | MSI Suprim SOC w/Alphacool Core Geforce RTX 5080 Suprim + Vanguard with Backplate |
| Storage | Samsung 990 PRO 1TB M.2 |
| Display(s) | MSI 321URX |
| Case | Custom open frame chassis |
| Audio Device(s) | CREATIVE AE-9/Nakamichi Shockwafe Ultra 9.2.4 |
| Power Supply | Seasonic Prime PX-1300 |
| Mouse | Logitech MX700 |
| Keyboard | Logitech LX700 |
| Software | Win11PRO |

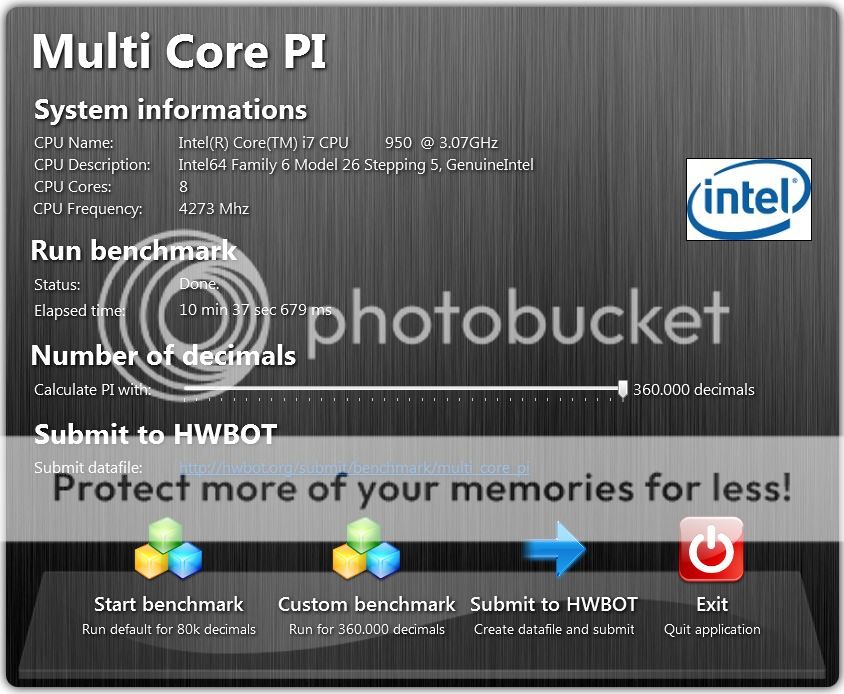

It's going to take a very, very long time to complete with 360,000 decimals. It will complete eventually, just leave the benchmark running. It's exponential complexity. For 10k decimals it takes 0 sec 800 ms, for 20k decimals 2 sec 900 ms... and for 80k decimals 54 sec. CPU: i5 3330 @ 3 GHz, 4 cores.

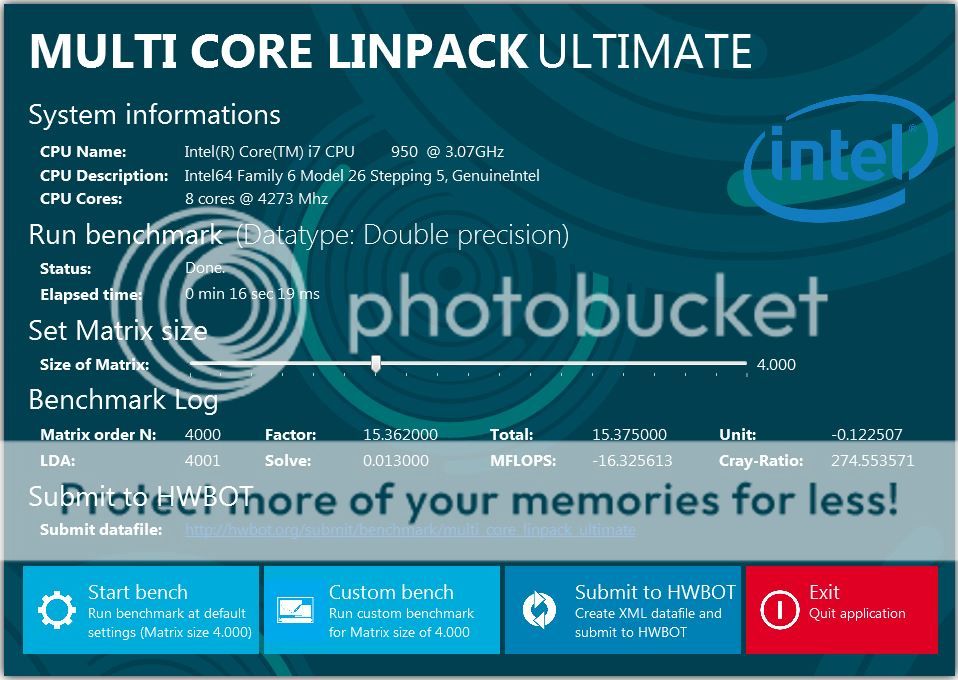

I got 28 seconds, 30 ms for 80K on the previous version.

What would you estimate my time should be for 360K on the new version?

I let it run for approximately 10 minutes with no result.
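For a rough guess at the answer, here is a minimal Python sketch that fits a power law to the timings quoted above and scales them to 360K. It rests on several assumptions: that time grows as `t ~ n**k` (the quoted numbers look closer to that than to a true exponential), that the exponent measured on the i5 3330 carries over to other CPUs, and that my 80K time from the previous version is a fair reference for the new one. The function names are made up for illustration and have nothing to do with the benchmark itself.

```python
import math

# Timings quoted above for the i5 3330: (decimals, seconds).
quoted = [(10_000, 0.8), (20_000, 2.9), (80_000, 54.0)]


def local_exponent(p, q):
    """Exponent k of t ~ n**k implied by two (n, t) measurements."""
    (n1, t1), (n2, t2) = p, q
    return math.log(t2 / t1) / math.log(n2 / n1)


def extrapolate(n_ref, t_ref, n_target, k):
    """Scale a reference timing to n_target, assuming t ~ n**k."""
    return t_ref * (n_target / n_ref) ** k


# Use the two largest quoted points to estimate the growth exponent.
k = local_exponent(quoted[1], quoted[2])

# Scale the quoted i5 time and my own 80K time (28 s 30 ms) to 360K.
estimate_i5 = extrapolate(80_000, 54.0, 360_000, k)
estimate_mine = extrapolate(80_000, 28.03, 360_000, k)

print(f"growth exponent ~ {k:.2f}")
print(f"i5 3330 at 360K: ~{estimate_i5 / 60:.0f} min")
print(f"28.03 s at 80K scaled to 360K: ~{estimate_mine / 60:.0f} min")
```

The exponent comes out close to 2, so under these assumptions the run time grows roughly quadratically, and a 360K run lands somewhere in the range of minutes rather than hours. Treat it as a ballpark only, since the two program versions may not scale the same way.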

EDIT:

I feel rather sheepish. I should have let it run a few more seconds rather than being impatient:
