The League of Extraordinary (Wealthy) Gentlemen

This summarizes my feelings when reading these comments.

To be honest, I'll say this from the start: I like AMD better than Intel or NVIDIA, but that has never stopped me from buying a clearly better product when it makes sense. Secondly, my machine, on which I play games (mostly ones I really like and spend many hours, often hundreds, with), is getting old: almost 3 years now. Even when I bought it, it was of average 'strength', though I do tend to build a lot of systems for friends who need them and have less experience than I do (I have owned various PCs since 1987, after all).

But the average commenter seems to have something like this:

- at least a newer-generation i7, and is looking for an upgrade

- a Titan X or, at least, a 980x (preferably in SLI)

- a 4K monitor (at least one) or some multi-monitor configuration

The same poster is:

- highly environmentally aware, even though their PSU is rated above 800 W, and every extra 10-30 W of consumption counts as unacceptable

- someone who likes and needs Iris Pro

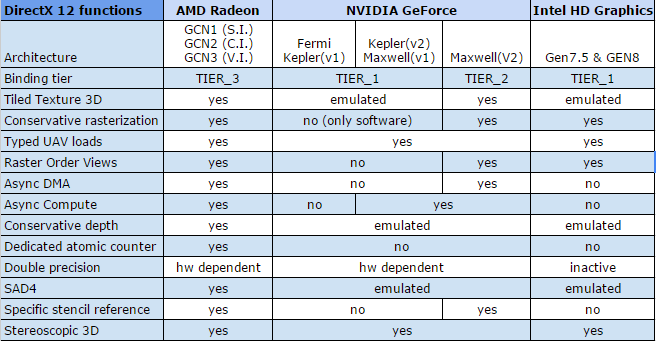

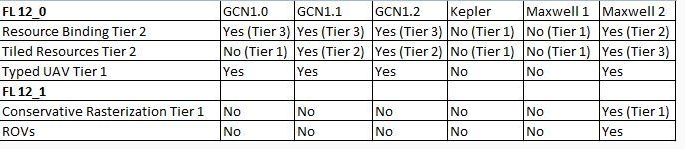

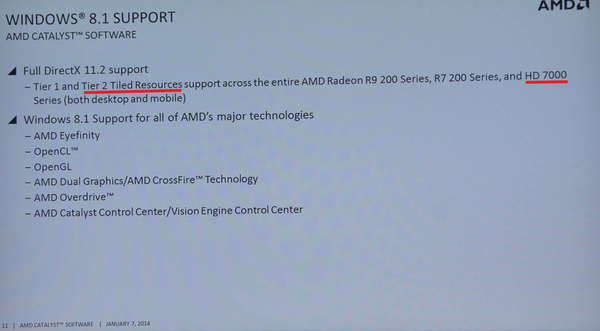

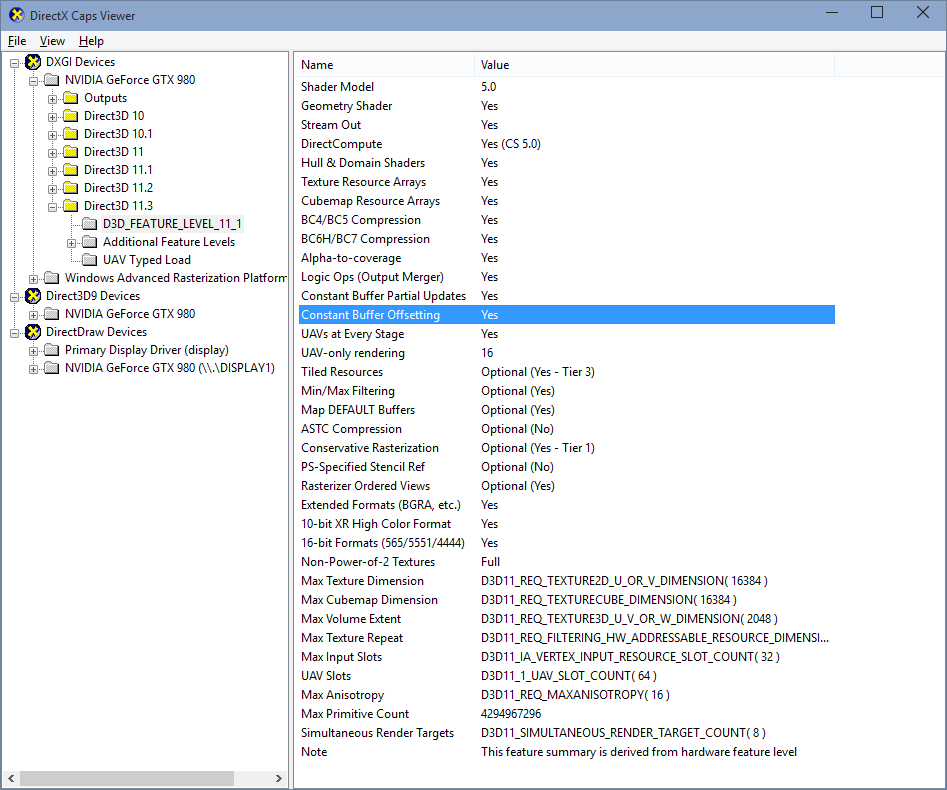

- highly dependent on the yet-unreleased DirectX 12, to the point where the graphics card's slight inability to hardware-accelerate some feature means life or death, NOW

The configuration mentioned easily surpasses $2000, yet it may need replacing immediately, or very soon, because, well, some of the games released after the famous June 29th won't have every hardware optimization for a feature that may or may not even be used in those games. Software emulation is out of the question, naturally.

Well, MY old system is quite capable of running the games I play, and we're not talking Solitaire here: a number of AAA titles are among them.

- 4K: though I've seen plenty of gaming on those, I've decided that I don't need one yet

- Multi-monitor gaming: it looks... bad, in my book (I do have a second monitor, but I use it for different purposes entirely)

- Iris Pro is inherently... unneeded: a passionate gamer who spends that much on a CPU buys a REAL graphics card, even just an average one, and gets something like 5-10 times better results from it (at minimum). The whole concept of Iris Pro eludes me: it's suited to a budget CPU and budget gaming, yet it carries a premium price

As far as I understand, the main point of DX12 is better utilization of CPU cores and the GPU, both existing and yet-unreleased ones. Beyond that, there is a bunch of new features, but then again, there are PhysX (and Flex and Environmental and so on), Fur, God Rays, HairFX, and also TrueAudio, TressFX, Mantle... Neither green- nor red-team games gained hugely from having or lacking those. Yes, they are noticeable, especially in demos, and probably more so than some cryptic "Feature Level 12_x".

That being said, I wouldn't worry too much about it for the time being, and neither should others. Buying a new GPU primarily because it supports something as vague as "Feature Level 12_x" is a pure waste of money. You can always upgrade when, and IF, this starts to affect your experience. When that happens, chances are that prices will be lower and products more mature and better overall, possibly to the point of a whole new generation.

I plan to upgrade my computer in 2016, unless something important changes, and that goes both ways.

(Oh, and I did have the privilege of seeing several expensive components and how they affect my configuration. For me, they weren't worth buying, but your mileage may vary; those who need them probably have them already.)

Sorry for the wall of text, but I felt I had to write this, since the whole discussion about replacing GPUs or profiting from "Feature Level 12_x" seems so pointless to me.