Call of Duty Modern Warfare Benchmark Test, RTX & Performance Analysis

Test System

| Test System | |
|---|---|
| Processor: | Intel Core i9-9900K @ 5.0 GHz (Coffee Lake, 16 MB Cache) |
| Motherboard: | EVGA Z390 DARK (Intel Z390) |
| Memory: | 16 GB DDR4 @ 3867 MHz 18-19-19-39 |
| Storage: | 2x 960 GB SSD |
| Power Supply: | Seasonic Prime Ultra Titanium 850 W |
| Cooler: | Cryorig R1 Universal, 2x 140 mm fan |
| Software: | Windows 10 Professional 64-bit Version 1903 (May 2019 Update) |
| Drivers: | AMD: Radeon Software 19.11.1 Beta; NVIDIA: GeForce 441.12 WHQL |
| Display: | Acer CB240HYKbmjdpr 24" 3840x2160 |

We tested the public Battle.net release of Call of Duty Modern Warfare, not a press pre-release build. We also installed the latest drivers from AMD and NVIDIA, both of which include game-ready support for this title.

Graphics Memory Usage

Using a GeForce RTX 2080 Ti, which has 11 GB of VRAM, we measured the game's memory usage at the highest setting.

Just like in previous Call of Duty titles, the game seems very memory hungry at first, but the performance results from our weaker cards show that all these memory allocations happen preemptively. The philosophy here seems to be "fill up the VRAM as much as possible, maybe we need the data later".

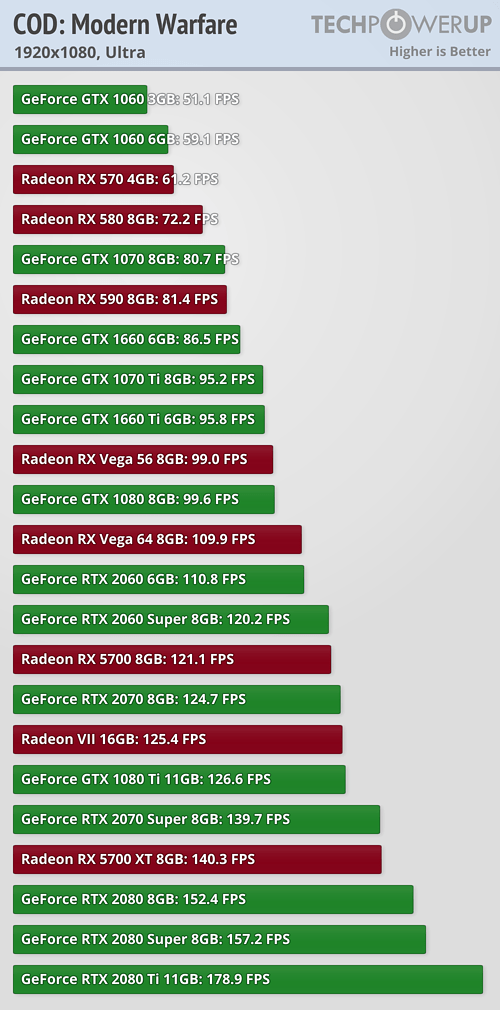

GPU Performance

FPS Analysis

In this new section we're comparing each card's performance against the average FPS measured in our graphics card reviews, which is based on a mix of 22 games. That should provide a realistic "average", covering a wide range of APIs, engines and genres.
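As a rough illustration of this kind of comparison, the sketch below expresses each card's FPS in one game as a percentage of its multi-game average. The exact formula used for the chart is not stated here, so the simple ratio is an assumption, and the function name, card names and FPS values are hypothetical placeholders rather than measurements from this review.

```python
def relative_performance(game_fps, review_avg_fps):
    """Return each card's FPS in one game as a percentage of its multi-game average.

    Assumption: a plain ratio (game FPS / average FPS). Cards missing from
    either table, or with a non-positive average, are skipped.
    """
    return {
        card: 100.0 * fps / review_avg_fps[card]
        for card, fps in game_fps.items()
        if review_avg_fps.get(card, 0) > 0
    }


# Hypothetical placeholder numbers, NOT results from this article.
game_fps = {"Card A": 90.0, "Card B": 60.0, "Card C": 45.0}
review_avg_fps = {"Card A": 75.0, "Card B": 60.0, "Card C": 50.0}

rel = relative_performance(game_fps, review_avg_fps)

# List cards from most to least favored by this game.
for card, pct in sorted(rel.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{card}: {pct:.1f}% of its multi-game average")
```

A value above 100% means the card does better in this game than it does on average across the review suite; a value below 100% means it does worse.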

We can clearly see that NVIDIA's Pascal generation of GPUs is at a large disadvantage here, while Turing does much better. It seems that NVIDIA focused their optimization efforts on improving performance for Turing, possibly through the use of Turing's concurrent FP+Int execution capabilities.

On the AMD side, the improvements look fairly constant across generations, which suggests that AMD took a more general approach. Only Radeon VII falls behind, and we have no explanation for that.
