Denuvo Performance Cost & FPS Loss Tested

Performance Testing

For testing, I used my VGA review test system with the same specs, drivers, and settings as in our Devil May Cry 5 Performance Analysis article. I paid very close attention to accuracy during testing. For example, graphics cards are always properly heated up before the test run so that Boost Clock, which is tied to temperature, doesn't influence the performance results. Also, since two installations of the game are used (on the same SSD), I did a non-measured warmup run before every measured test run, which primes the disk cache and ensures results aren't skewed by random disk latency. Still, perfect accuracy is impossible, as some random variation between runs is to be expected.
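The warmup-then-measure procedure described above can be sketched as a small harness. This is a minimal illustration, not the tooling actually used for these tests: `run_once` is a hypothetical callable that plays the benchmark scene once and returns its average FPS, and summarizing the measured runs by mean and standard deviation is an assumption about how repeated runs might be reported.

```python
import statistics
from typing import Callable, Dict, List, Union


def measure_fps(run_once: Callable[[], float], runs: int = 3) -> Dict[str, Union[float, List[float]]]:
    """Do one unmeasured warmup pass, then `runs` measured passes.

    `run_once` is a hypothetical callable (an assumption for this sketch)
    that plays the benchmark scene once and returns its average FPS.
    """
    if runs < 1:
        raise ValueError("need at least one measured run")

    # Warmup pass: primes the disk cache; its result is discarded.
    run_once()

    # Measured passes.
    samples = [run_once() for _ in range(runs)]
    return {
        "mean": statistics.mean(samples),
        # stdev needs at least two samples.
        "stdev": statistics.stdev(samples) if runs > 1 else 0.0,
        "samples": samples,
    }
```

With a fake scene whose first, cold pass is slow, only the later passes count: `measure_fps(lambda: next(passes), runs=3)` on `passes = iter([180.0, 252.0, 253.0, 251.0])` ignores the 180 FPS warmup.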

NVIDIA RTX 2080 Ti Performance

The first round of results is for the GeForce RTX 2080 Ti, which minimizes the GPU bottleneck so the system can push as many frames per second as possible.

We begin with our default CPU speed of 4.8 GHz and scale down to 1.00 GHz to see whether the performance gap widens as the CPU gets slower. At 4.8 GHz, there is virtually no difference between "Denuvo on" and "Denuvo off" (252.8 FPS vs. 252.0 FPS, a 0.32% difference). Expecting much bigger differences at lower clocks, I dialed the CPU frequency down in several steps for both configurations, all the way to 1.00 GHz.
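For reference, the percentage quoted for the 4.8 GHz data point is the FPS gap divided by a reference value. The sketch below assumes 252.0 FPS is the reference; the text doesn't say which configuration serves as the baseline, but dividing by either value rounds to 0.32%.

```python
def percent_difference(a_fps: float, b_fps: float) -> float:
    """Relative difference of a_fps over the reference b_fps, in percent."""
    return (a_fps - b_fps) / b_fps * 100.0


# 4.8 GHz data point from the text (reference value is an assumption).
print(f"{percent_difference(252.8, 252.0):.2f}%")  # 0.32%
```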

At around 3.50 GHz, we begin to see noteworthy performance differences that, surprisingly, stay fairly constant at around 3-4%. I would have expected Denuvo's impact to grow considerably with significantly less CPU performance available, but that's not the case.

In contrast, look at how much the frame rate tanks: over 100 FPS is lost as each frequency reduction makes the processor weaker, yet the performance hit caused by Denuvo doesn't get bigger.

Another interesting observation is that Denuvo's performance cost doesn't correlate with higher frame rates either. This is strong evidence that the Denuvo checks are not executed for every frame the game renders. Rather, it looks like they run on a timer or are tied to a part of the engine that runs at a fixed rate, like physics or AI.
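This reasoning can be illustrated with a toy model that compares the two hypotheses. It is a simplification with made-up parameters, not a measurement of how Denuvo actually works. A check that costs a fixed number of milliseconds on every frame (the hypothetical `CHECK_MS`, assumed here not to scale with CPU clock) takes a larger share of a short frame than of a long one, so its percentage impact would change as the frame rate drops. A check that runs on its own timer and takes a fixed share of CPU time (the hypothetical `CPU_SHARE`) costs the same percentage at any frame rate in CPU-bound scenarios.

```python
def per_frame_loss(frame_ms: float, check_ms: float) -> float:
    """Percent FPS loss if a check costing `check_ms` runs once per rendered frame."""
    # The check lengthens every frame by a fixed amount of time.
    return check_ms / (frame_ms + check_ms) * 100.0


def timer_loss(cpu_share: float) -> float:
    """Percent FPS loss (CPU-bound) if a timer-driven check takes a fixed share of CPU time."""
    # The game only gets (1 - cpu_share) of the CPU, so CPU-bound FPS drops by that share.
    return cpu_share * 100.0


# Hypothetical parameters, chosen for illustration only.
CHECK_MS = 0.12
CPU_SHARE = 0.03

for fps in (250, 200, 150, 100):
    frame_ms = 1000.0 / fps
    print(f"{fps:>3} FPS: per-frame model {per_frame_loss(frame_ms, CHECK_MS):.2f}%, "
          f"timer model {timer_loss(CPU_SHARE):.2f}%")
```

Under the per-frame hypothesis, the loss shrinks as frame times grow; a roughly constant 3-4% across the clock sweep fits the timer-driven hypothesis better.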

Next, I wanted to test the impact at higher resolutions, where the game is more GPU-bound. At 1440p, the results match the 1080p chart: in GPU-bound scenarios, the performance loss is close to zero, and only when the bottleneck moves to the CPU do the FPS values begin to differ, again by around 3%.

Moving on to 4K, the results match the previous tests, except that the bottleneck shifts from the GPU to the CPU at a much lower CPU clock, which is expected because the GPU has a much harder time churning out those high-res frames.
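A simple bottleneck model shows why the loss only appears once the CPU becomes the limit: if CPU and GPU work overlap, the slower of the two sets the frame time. In this sketch, `cpu_ms` and `gpu_ms` are hypothetical per-frame times, and `DENUVO_CPU_OVERHEAD` is an assumed 3% increase in CPU time per frame, picked to roughly match the measured gap rather than taken from any measurement of Denuvo itself.

```python
# Assumed extra CPU time per frame with Denuvo enabled (illustrative, not measured).
DENUVO_CPU_OVERHEAD = 0.03


def effective_fps(cpu_ms: float, gpu_ms: float) -> float:
    """Simplified pipelined model: the slower of CPU and GPU sets the frame time."""
    return 1000.0 / max(cpu_ms, gpu_ms)


def denuvo_loss(cpu_ms: float, gpu_ms: float) -> float:
    """Percent FPS lost when the CPU side of each frame gets slower by the assumed overhead."""
    off = effective_fps(cpu_ms, gpu_ms)
    on = effective_fps(cpu_ms * (1 + DENUVO_CPU_OVERHEAD), gpu_ms)
    return (off - on) / off * 100.0


# GPU-bound (high resolution): CPU headroom hides the overhead.
print(f"{denuvo_loss(cpu_ms=5.0, gpu_ms=12.0):.2f}%")  # 0.00%
# CPU-bound (low CPU clock): the overhead shows up in full.
print(f"{denuvo_loss(cpu_ms=12.0, gpu_ms=5.0):.2f}%")  # 2.91%
```

Because FPS is the inverse of frame time, 3% more CPU time per frame costs slightly less than 3% of the frame rate in this model.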

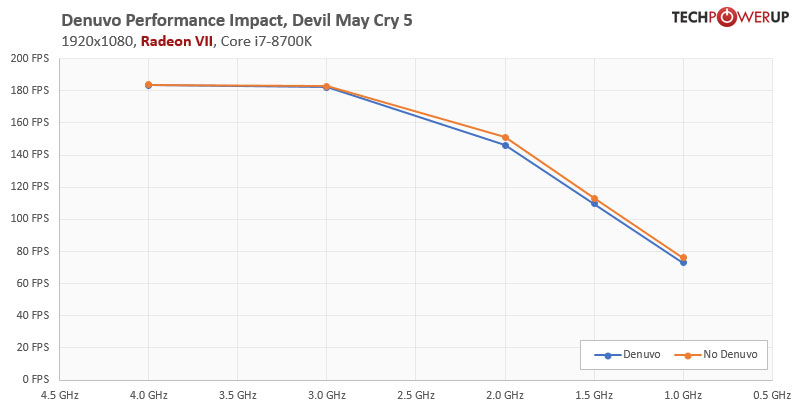

AMD Radeon RX 580 & Radeon VII

Not everybody uses a super-high-end GeForce RTX 2080 Ti, so I wanted to get some data on a more mid-range card. For this test, I picked the Radeon RX 580, which is one of the most popular cards on the market and is very affordably priced. It's also an AMD card, so the other camp gets tested, too. Beyond that, we have seen some anecdotal evidence that AMD's driver requires more CPU time than its NVIDIA counterpart, which could make it more susceptible to performance losses caused by Denuvo.

However, the results look nearly identical to the 4K RTX 2080 Ti chart, which isn't that surprising if you think about it: because of its lower GPU performance, the RX 580 setup is GPU limited most of the time, even at 1080p. Performance differences match the RTX 2080 Ti results, too, with around 3% at the 1.00 GHz data point, the only one that's CPU limited; when GPU limited, there's close to no difference.

Since the RX 580 didn't give us any new insights and I was still curious about the driver's CPU usage, I did another test run, this time with AMD's Radeon VII flagship, whose much higher frame rate could expose any such issues.

Again, there are no surprises, and this test run confirms once more: negligible performance loss when GPU-bound, 3-4% when CPU-bound.

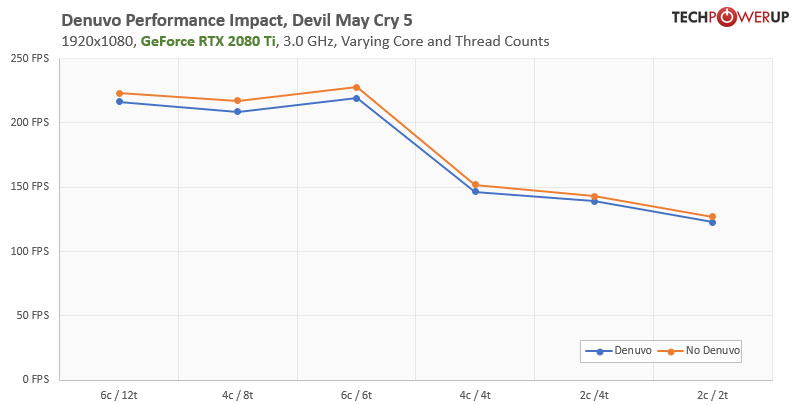

Various CPU Core and Thread Counts

Last but not least, I wanted to look at CPU core counts. The Core i7-8700K I'm using has 6 cores and 12 threads, which is hardly a typical entry-level configuration for daily use. For all of the following tests, I reduced the CPU clock to 3.00 GHz so that the setups match lower-end CPUs not only in core configuration, but also in clock speed.

- The native 6c / 12t configuration sets the baseline (2.96% difference).

- Next, we move on to 4c / 8t, an extremely common configuration found in highly popular CPUs like the Core i7-7700K from early 2017, Core i7-4790K, or Core i7-2700K (4.07% difference).

- The next test result is for 6c / 6t, which matches the Core i5-8400, i5-8600K, and other popular six-core SKUs without Hyper-Threading (3.88% difference).

- Diving further into the lower end of the performance spectrum, testing at 4c / 4t represents the typical "quad-core" processor, which many see as the entry-level requirement for serious gaming. Popular SKUs include the i5-7600K, i3-8350K, i5-4670K, i5-3570K, i5-6500, and many more. Here we see a 3.76% difference.

- For science, we also added test results with two cores and four threads, which matches entry-level CPUs like the Pentium Gold G5400, i3-7100, i3-4160, i5-660, and many more from half a decade ago and earlier (2.73% difference).

- Finally, I wanted to see what happens with a CPU that has just two cores and two threads (2c / 2t), which is, of course, completely overwhelmed by this modern game. This data point represents the most basic Atoms, Celerons, and Pentiums that hardly anybody uses anymore. Still, at 3.75%, the performance loss caused by Denuvo isn't increasing.
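Collecting the six core/thread results in one place makes the trend, or lack of one, easy to check. The percentages below are copied from the list above; the mean and spread are only a convenience for comparison and are not figures reported in the original tests.

```python
import statistics

# Denuvo on/off FPS difference (%) at 3.00 GHz, keyed by (cores, threads).
results = {
    (6, 12): 2.96,
    (4, 8): 4.07,
    (6, 6): 3.88,
    (4, 4): 3.76,
    (2, 4): 2.73,
    (2, 2): 3.75,
}

# Print in descending order of thread count, then core count (the order used in the list).
for (cores, threads), diff in sorted(results.items(), key=lambda kv: (kv[0][1], kv[0][0]), reverse=True):
    print(f"{cores}c / {threads}t: {diff:.2f}%")

values = list(results.values())
mean = statistics.mean(values)
spread = max(values) - min(values)
print(f"mean {mean:.2f}%, range {min(values):.2f}% to {max(values):.2f}% ({spread:.2f} points)")
```

The results stay within about 1.3 percentage points of each other (2.73% to 4.07%), with no upward trend as cores and threads are removed.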