Friday, December 4th 2020

PSA: AMD's Graphics Driver will Eat One CPU Core when No Radeon Installed

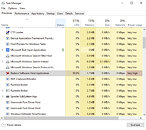

While I was messing around with an older SSD test system (not benchmarking anything), I wondered why the machine's performance was SO sluggish with the NVIDIA card I had just installed. Windows startup, desktop, Internet, everything in Windows was incredibly slow. This is an old dual-core machine, but it ran perfectly fine with the AMD Radeon card I used before.

At first I blamed NVIDIA, but when I opened Task Manager I noticed one of my cores sitting at 100%. That can't be right.

Digging a bit further into this, it looks like RadeonSettings.exe is loading one processor core to 100%. Ugh, but there is no AMD graphics card installed right now.

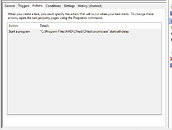

Once that process was terminated manually (right click, select "End task"), performance was restored to expected levels and CPU load was normal again. This confirms that the AMD driver is the reason for the high CPU load. Ideally, before changing graphics cards, you should uninstall the current graphics card driver, change hardware, then install the new driver, in that order. But for a quick test that's not what most people do, and others are simply not aware that a thing called "graphics card driver" exists, or what it does. Windows is smart enough to not load any drivers for devices that aren't physically present.

Looks like AMD is doing things differently and just pre-loads Radeon Settings in the background every time your system is booted and a user logs in, no matter if AMD graphics hardware is installed or not. It would be trivial to add a check "If no AMD hardware found, then exit immediately", but ok. Also, do we really need six entries in Task Scheduler?
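To illustrate how small such a "no AMD hardware, exit immediately" check could be, here is a minimal sketch in C. It is not AMD's code. It assumes the program has already obtained the PCI vendor IDs of the installed display adapters by some platform-specific enumeration that is left out here; `has_amd_gpu` and `startup_action` are hypothetical names made up for this example. The only hard fact used is that `0x1002` is the PCI vendor ID of AMD/ATI graphics devices.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* PCI vendor ID used by AMD/ATI graphics devices. */
#define PCI_VENDOR_AMD_ATI 0x1002u

/*
 * Returns true if any adapter in the list reports the AMD/ATI vendor ID.
 * The array is a stand-in for a real device enumeration (assumption).
 */
bool has_amd_gpu(const uint16_t *vendor_ids, size_t count)
{
    assert(vendor_ids != NULL || count == 0);
    for (size_t i = 0; i < count; i++) {
        if (vendor_ids[i] == PCI_VENDOR_AMD_ATI) {
            return true;
        }
    }
    return false;
}

/* Hypothetical startup decision: 0 = keep running, 1 = exit immediately. */
int startup_action(const uint16_t *vendor_ids, size_t count)
{
    return has_amd_gpu(vendor_ids, count) ? 0 : 1;
}
```

With a list containing only an NVIDIA adapter (vendor ID `0x10DE`), `startup_action` returns 1, so the background process would quit right away instead of waiting for driver components that never load.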

I got curious and wondered how it is possible in the first place that a utility like the Radeon Settings control panel uses 100% CPU load constantly. That is something you'd expect when a mining virus gets installed and uses your electricity to mine cryptocurrency without you knowing. By the way, all this was verified to be happening on the Radeon 20.11.2 WHQL driver, 20.11.3 Beta and the press driver for an upcoming Radeon review.

Unless you're a computer geek you'll probably want to skip over the following paragraphs, but I found the details interesting enough to share with you.

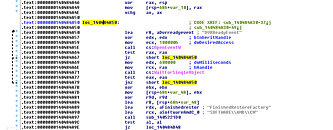

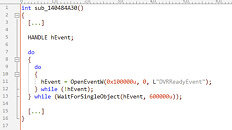

I attached my debugger, looked for the thread that's causing all the CPU load and found this:

Hard to read; translated into C code it might make more sense:

If you're a programmer you'd have /facepalm'd by now. Let me explain. In a multi-threaded program, Events are often used to synchronize concurrently running threads. Events are a core feature of the Windows operating system. Once created, they can be set to "signaled", which will notify every other piece of code that is watching the status of this event, instantly and even across process boundaries.

In this case the Radeon Settings program will wait for an event called "DVRReadyEvent" to get created before it continues with initialization. This event gets created by a separate, independent driver component that's supposed to get loaded on startup, too, but apparently never does. The Task Scheduler entries in the screenshot above do show "StartDVR". The naming suggests it's related to the ReLive recording feature that lets you capture and stream gameplay. I guess that part of the driver does indeed check if Radeon hardware is present, and will not start otherwise.

Since Windows has no WaitForEventToGetCreated() function, the usual approach is to try to open the event until it can be opened, at which point you know that it does exist.

You're probably asking now, "what if the event never gets created?" Exactly, your program will be hung, forever, caught in an infinite loop. The correct way to implement this code is to either set a time limit for how long the loop should run, or count the number of runs and give up after 100, 1000, 1 million, you pick a number—but it's important to set a reasonable limit.
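As a rough sketch of the "count the number of runs and give up" variant, the loop below tries a probe a limited number of times. This is not the code from the driver. The probe is passed in as a callback so the example stays portable; on Windows it could wrap a call to `OpenEvent` and report whether a valid handle came back. `wait_bounded`, `probe_fn` and the two demo probes are hypothetical names for this illustration.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Probe callback: returns true once the awaited object can be opened. */
typedef bool (*probe_fn)(void *ctx);

/*
 * Calls probe() at most max_attempts times. Returns the 1-based attempt
 * number on which it succeeded, or 0 if the limit was reached first.
 */
unsigned wait_bounded(probe_fn probe, void *ctx, unsigned max_attempts)
{
    assert(probe != NULL);
    for (unsigned attempt = 0; attempt < max_attempts; attempt++) {
        if (probe(ctx)) {
            return attempt + 1;
        }
    }
    return 0; /* gave up: the caller decides how to continue without it */
}

/* Demo probe that never succeeds, like an event that is never created. */
static bool never_ready(void *ctx)
{
    (void)ctx;
    return false;
}

/* Demo probe that fails *ctx times and then succeeds. */
static bool ready_after_countdown(void *ctx)
{
    unsigned *remaining = ctx;
    if (*remaining == 0) {
        return true;
    }
    (*remaining)--;
    return false;
}
```

With `never_ready`, the call returns 0 after the chosen number of attempts instead of hanging forever. The limit only guarantees that the loop ends; while it runs, it still spins at full speed.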

A more subtle effect of this kind of busy waiting is that it will run as fast as the processor can, loading one core to 100%. While that might be desirable if you have to be able to react VERY quickly to something, there's no reason to do that here. The typical approach is to add a short bit of delay inside the loop, which tells the operating system and processor "hey, I'm waiting on something and don't need CPU time, you may run another application now or reduce power". Modern processors will adjust their frequency when lightly loaded, and even power down cores completely, to conserve energy and reduce heat output. Even a delay of one millisecond will make a huge difference here.
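A minimal sketch of polling with a delay and a time budget could look like the following. Again, this is an illustration under assumptions, not the actual fix. Both the probe and the sleep are callbacks so the example compiles anywhere; on Windows the sleeper could simply call `Sleep(1)` and the probe could wrap `OpenEvent`. The names `poll_with_delay`, `probe_fn`, `sleep_fn` and the demo stand-ins are hypothetical, and the budget only counts time spent sleeping, so it is approximate.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

typedef bool (*probe_fn)(void *ctx);       /* true once the event exists */
typedef void (*sleep_fn)(unsigned millis); /* blocks and yields the CPU  */

/*
 * Polls probe(), sleeping up to interval_ms between attempts, and gives
 * up after about timeout_ms of sleeping. Returns true on success.
 */
bool poll_with_delay(probe_fn probe, void *ctx, sleep_fn sleep_ms,
                     unsigned interval_ms, unsigned timeout_ms)
{
    assert(probe != NULL && sleep_ms != NULL && interval_ms > 0);
    unsigned remaining_ms = timeout_ms;
    for (;;) {
        if (probe(ctx)) {
            return true;
        }
        if (remaining_ms == 0) {
            return false; /* time budget used up */
        }
        /* Never sleep past the end of the budget. */
        unsigned step = interval_ms < remaining_ms ? interval_ms : remaining_ms;
        sleep_ms(step);
        remaining_ms -= step;
    }
}

/* Demo stand-ins: a sleeper that only records the requested time, and
 * probes driven by that simulated clock. */
static unsigned g_slept_ms = 0;

static void fake_sleep(unsigned millis)
{
    g_slept_ms += millis;
}

static bool ready_after(void *ctx)
{
    return g_slept_ms >= *(unsigned *)ctx;
}

static bool never_ready(void *ctx)
{
    (void)ctx;
    return false;
}
```

With a real sleeper, the thread spends almost all of its time blocked rather than spinning, so the core is free for other work or can drop to a lower power state. The cost is that the program notices the event up to one interval late, which does not matter for a settings panel waiting on startup.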

This is especially important during system startup, where a lot of things are happening at the same time, that need processor time to complete—it's why you feel you're waiting forever for your desktop to become usable when you start the computer. With Radeon Settings taking over one core completely, there's obviously less performance left for other startup programs to complete.

I did some quick and dirty performance testing in actual gameplay on an 8-core/16-thread CPU and found a small FPS loss, especially in CPU-limited scenarios, of around 1%, on the order of 150 FPS vs 151 FPS. This confirms that this can be an issue on modern systems, too, even though only about 6% of CPU capacity is lost (one logical processor out of 16). The differences will be minimal though, and it's unlikely you'll subjectively notice them.

Waiting on synchronization signals is a very basic programming skill; most midterm students would be able to implement it correctly. That's why I'm so surprised to see such low-quality code in a graphics driver component that gets installed on hundreds of millions of computers. Modern software development techniques avoid these mistakes through code reviews, where one or more colleagues read your source code and point out potential issues. There's also "unit testing", which requires developers to write testing code that's separate from the main code. These unit tests can then be executed automatically, and tools can measure "code coverage", the percentage of the program code that is exercised by the unit tests. Let's just hope AMD fixes this bug; it should be trivial.

If you are affected by this issue, just uninstall the AMD driver from Windows Settings - Apps and Features. If that doesn't work, use DDU. It's not a big deal anyway; what's most important is that you are aware of it, in case your system feels sluggish after a graphics hardware change.

277 Comments on PSA: AMD's Graphics Driver will Eat One CPU Core when No Radeon Installed

A lot of programming gurus in this comment section, maybe you should apply for a job at AMD. Explain to them how you're going to save them from themselves.

People keep making drama about this, but there's effectively no issue. Prattling on and beating a dead horse over nothing is just childish behavior. TPU should strive to be better than to devolve to such pettiness, in any event. This is making me really miss HardOCP.

You can clearly see I can use Radeon settings just fine and it even picks up GPU but it still takes one thread for itself. I just DDU'ed system again and it didn't change anything. I have to kill Radeon Settings process every time I use it.

Might as well downgrade to like 19.something version, at least it was nice and stable and not bloated.

I can tell you from experience helping people, regular people don't know squat and do this kind of thing all the time, and don't know how to fix it. This post will explain it and tell them what to do, since it will now likely be a search engine result.

Well I had some small bugs with Nvidia driver too but they only last for a very short time, like a week or two before a hotfix comes out, didn't have to send email to Nvidia or anything.

Breaking news: Software installed on computer, does stuff on computer, news at 8.

oops.

edit: i tried clicking the tray icon only for it to vanish and stop wasting CPU power, somethings definitely weird on this one.

This is not-going-to-have-a-job-tomorrow bad if they find whoever signed off on this (assuming anybody at AMD cares about code quality, which we know they don't) =\

nobody mentioned Nvidia you did so you can stop with the bias crap

if I was AMD I would hire a reputable code auditing service and have them check their entire code for errors like this, odds are there is more

1. coders are lazy and,

2. remove software for hardware no longer in the system.

in other news, the sky is blue, grass is green and rain is wet :lol:

before the flames start, i think amd not doing their job is just as bad as leaving software on your system for hardware you removed. both to blame like.

Yesterday I put all OC settings directly in to the bios of my card so don't need use it anymore. Tried using afterburner but it was messing stock bios fan profile up.

Bit extreme maybe but feel much better just using the driver.

But things escalate quickly.

(*) I started with C64 basic and asm. Then moved on to PCs and Pascal. After that Delphi and AVR asm. It was very hard to learn Windows programming and while i loved coding, every now and then i needed something new that i had to learn. And part of me hated that. Haven't coded a line like in 8 or 10 years, and now, if i want to start again, i must learn a new language, like C...

"When removing a gfx card remember to always uninstall the driver & associated application, if that doesn't work, use DDU. "

Like.... it's a PSA not a call to arms.

I disagree about AMD just fixing similar crap and getting extraordinary results. Don't get me wrong, every bug should be fixed, but the inconsistent reliability issues I've seen over many years with AMD drivers tells me there is probably some larger "design flaw". If this was easily fixable, AMD would have fixed it a long time ago.

Perhaps the overhead is "absurd" if you make an isolated test case, but it's not absurd in practice.

Nevertheless, AMD could easily do what Nvidia did, by bringing most of the driver side improvements of DirectX 12 to 11, but that would ruin the image of AMD being better at DirectX 12 though.

Really?

Have you worked at code bases of 100.000s or millions of lines of code, possibly with an awful complex structure?

Keep in mind that we are talking about a minor "glitch" here, which could be either a careless mistake or even the result of a bad merge. All programmers make small mistakes, and I'll be the first one to admit making some embarrassing ones, but what really shows programming skills (or lack thereof) is how problems are solved, not a tiny mistake. And I mean no disrespect here, but having such attitudes as an engineer is not healthy.

One of the bigger problems I've had in development teams over the years is that lesser coders don't dare to challenge my work, even when I've strongly encouraged them to try to break it. So getting good QA can sometimes be challenging.

Mostly true, yes.

But regarding anticipating issues; all such software projects should have routines designed to validate that a release is working reasonably well. While I don't expect anyone to never make a bug, it is astonishing that they didn't test if the driver behaved erratically in a system with a different GPU present, this should certainly be in their test suite.

Edit: Let me take another example; some years ago AMD managed to ship two drivers in a row, both failing to compile most GLSL shaders, even basic ones. I still don't understand how it's "possible" to ship a driver without validating basic stuff like this.

This nonsense has been debunked several times, there are many reasons to have different GPUs present, such as:

APU + GPU

Developers or other engineers having multiple GPUs for various compute and simulations

Even APIs like DirectX 12 and Vulkan are designed to work with multiple GPUs from different makes. There is simply no excuse when a driver suite doesn't handle this.

If your software can't handle other hardware being present, then your software is broken.

And as I said, both DirectX 12 and Vulkan are designed to have a mix of GPUs, so they should be aware of this and test it before shipping a new driver.

Has anyone here independently reproduced the issue?

That's a Op reading fail right there.

The problem occurs when hardware ISN'T there but the software for it IS.

Who uses Mgpu with multiple cards missing and how?

To test for this AMD's test department would have had to do similar, remove their GPU and use Dgpu or fit a competition GPU while leaving their software on. I have done application testing, you stick to things you expect to happen, not such outliers typically.

Now a code review could find these issues and really is required at this point but some of your expectations for testing are ridiculous IMHO.

Second, the only reason this is a “farce” to you is because you’re obviously acting defensive. It was a clear, in depth investigation into a problem, using coding skills, and published as a bug report and advisory. I’m sorry you live in such a perfect world that no one should ever learn anything from other’s mistakes (W1zzard’s and AMD’s both). There, now THAT was condescending.

In the sense that it is in actual cpu usage vs the competition (and that will hurt cpubound games), yes, it most certainly is. I've looked into this a lot. dxvk outperforms their dx11 driver in cpu overhead.