NVIDIA GeForce RTX 2080 Ti PCI-Express Scaling

Introduction

NVIDIA recently launched its GeForce RTX 20-series graphics cards based on its new "Turing" architecture with two high-end parts: the GeForce RTX 2080 Ti and RTX 2080. Despite a long list of innovations, NVIDIA chose to give these chips a PCI-Express 3.0 x16 bus interface, even though the PCI-Express gen 4.0 specification was published over a year ago and could be implemented in desktop platforms in the next few months. Rival AMD is rumored to be implementing PCI-Express gen 4.0 in its next-generation GPU.

The PCI-Express bus interface has endured close to two decades of market dominance thanks to its scalable design, backwards compatibility, and near-doubling in data bandwidth every five years or so. Since PCI-Express generation 3 was introduced in 2011, NVIDIA and AMD have launched four generations of GPUs on it, and none of them has saturated it at the full x16 bus width. That, coupled with the decline of multi-GPU setups beyond two graphics cards, has blunted the connectivity edge the high-end desktop (HEDT) platform once had over the mainstream desktop platform.

We have a tradition of testing PCI-Express bus utilization and scaling each time a new graphics architecture from either company is launched. We test the new-generation graphics card on several PCI-Express configurations, narrowing the bus width (number of lanes) and limiting the bandwidth to that of older generations, which gives us valuable data for drawing inferences on a number of things. It tells us whether the new GeForce RTX 2080 Ti can be bottlenecked in multi-GPU setups on mainstream desktop platforms, and whether it's time to replace an old motherboard that uses an older generation of PCI-Express. It will also help answer questions like "Will my graphics card run slower when using PCIe x8?" and "Do I need to run my SLI in x16, buying the more expensive X299 platform, for the best performance?"

In this review, we take the fastest graphics card from NVIDIA, the GeForce RTX 2080 Ti Founders Edition, and test it across PCI-Express 3.0 x16 (the most common configuration for single-GPU builds), PCI-Express 3.0 x8 (bandwidth comparable to PCI-Express 2.0 x16), PCI-Express 3.0 x4 (comparable to PCI-Express 2.0 x8), and, for purely academic reasons, PCI-Express 2.0 x4 (what would happen if you installed your card in the bottom-most slot of your motherboard). The table below gives you an idea of the theoretical maximum bandwidths of the common PCI-Express configurations:
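These theoretical figures follow directly from each generation's per-lane transfer rate and its line encoding: 8b/10b for generations 1 and 2, and 128b/130b for generations 3 and 4. The minimal Python sketch below reproduces them for one direction of the link. It assumes 1 GB = 10^9 bytes and ignores packet headers and other protocol overhead, so real transfers fall somewhat short of these numbers. The helper name `bandwidth_gb_s` is hypothetical and only used for illustration.

```python
from fractions import Fraction

# generation -> (transfer rate per lane in GT/s, line-encoding efficiency)
PCIE_GENERATIONS = {
    "1.0": (Fraction(5, 2), Fraction(8, 10)),   # 2.5 GT/s, 8b/10b
    "2.0": (Fraction(5), Fraction(8, 10)),      # 5 GT/s, 8b/10b
    "3.0": (Fraction(8), Fraction(128, 130)),   # 8 GT/s, 128b/130b
    "4.0": (Fraction(16), Fraction(128, 130)),  # 16 GT/s, 128b/130b
}


def bandwidth_gb_s(generation: str, lanes: int) -> float:
    """Theoretical one-direction bandwidth in GB/s (1 GB = 10**9 bytes).

    Hypothetical helper; ignores packet headers and protocol overhead.
    """
    rate_gt_s, efficiency = PCIE_GENERATIONS[generation]
    # One bit per lane per transfer; divide by 8 to convert bits to bytes.
    return float(rate_gt_s * efficiency * lanes / 8)


# The four configurations tested in this review
for gen, lanes in [("3.0", 16), ("3.0", 8), ("3.0", 4), ("2.0", 4)]:
    print(f"PCIe {gen} x{lanes}: {bandwidth_gb_s(gen, lanes):.2f} GB/s")
# PCIe 3.0 x16: 15.75 GB/s
# PCIe 3.0 x8: 7.88 GB/s
# PCIe 3.0 x4: 3.94 GB/s
# PCIe 2.0 x4: 2.00 GB/s
```

By the same formula, PCIe 2.0 x16 and x8 come to 8.00 and 4.00 GB/s, which is why PCIe 3.0 x8 and x4 serve as stand-ins for them, and PCIe 4.0 doubles every gen-3 figure.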

For all our PCI-Express bandwidth testing, we limit the bus width by physically blocking the contacts for the unwanted lanes with insulating tape. The modular design of PCI-Express allows for this: the link simply trains to the narrower width that remains. The motherboard BIOS also lets us limit the PCI-Express feature set to that of older generations. We ran the card, in each of its PCI-Express configurations, through our entire battery of graphics card benchmarks, all of which are real-world game tests.
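The way taping and the BIOS setting combine can be pictured with a deliberately simplified model of link negotiation: the link settles on the widest width both ends support that fits the lanes still connected, and on the highest generation that the card, the slot, and the BIOS all allow. This is a sketch under stated assumptions, not the specification's link-training state machine. It assumes the taped lanes are the highest-numbered ones (so the remaining lanes run contiguously from lane 0), ignores lane reversal, and assumes both ends support the widths in `ASSUMED_WIDTHS`; real devices may implement only some intermediate widths. The function names are hypothetical.

```python
# Simplified, illustrative model of PCI-Express link width/speed negotiation.
# Assumptions: connected lanes are contiguous from lane 0, lane reversal is
# ignored, and both ends support every width in ASSUMED_WIDTHS.

ASSUMED_WIDTHS = (1, 2, 4, 8, 16)


def negotiated_width(card_max: int, slot_max: int, connected: int,
                     supported: tuple = ASSUMED_WIDTHS) -> int:
    """Widest supported width that fits the card, the slot, and the live lanes."""
    limit = min(card_max, slot_max, connected)
    candidates = [w for w in supported if w <= limit]
    if not candidates:
        raise ValueError("no usable lanes: the link cannot train")
    return max(candidates)


def negotiated_gen(card_gen: int, slot_gen: int, bios_cap: int) -> int:
    """Link speed settles on the highest generation every party allows."""
    return min(card_gen, slot_gen, bios_cap)


# An x16 gen-3 card in an x16 gen-3 slot with the upper 8 lanes taped off
print(negotiated_width(16, 16, 8))  # 8
# Same card with only 4 lanes exposed and the BIOS capped at gen 2
print(negotiated_width(16, 16, 4), negotiated_gen(3, 3, 2))  # 4 2
```

Under these assumptions, leaving 12 lanes exposed would still yield an x8 link, because x12 is not in the assumed set of supported widths.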

Our exhaustive coverage of the NVIDIA GeForce RTX 20-series "Turing" debut also includes the following reviews: NVIDIA GeForce RTX 2080 Ti Founders Edition 11 GB | NVIDIA GeForce RTX 2080 Founders Edition 8 GB | ASUS GeForce RTX 2080 Ti STRIX OC 11 GB | ASUS GeForce RTX 2080 STRIX OC 8 GB | Palit GeForce RTX 2080 Gaming Pro OC 8 GB | MSI GeForce RTX 2080 Gaming X Trio 8 GB | MSI GeForce RTX 2080 Ti Gaming X Trio 11 GB | MSI GeForce RTX 2080 Ti Duke 11 GB | NVIDIA RTX and Turing Architecture Deep-dive

Test System

| Test System - VGA Rev. 2018.2 | |
|---|---|
| Processor: | Intel Core i7-8700K @ 4.8 GHz (Coffee Lake, 12 MB Cache) |
| Motherboard: | ASUS Maximus X Hero Intel Z370 |
| Memory: | G.SKILL 16 GB Trident-Z DDR4 @ 3867 MHz 18-19-19-39 |
| Storage: | 2x Patriot Ignite 960 GB SSD |
| Power Supply: | Seasonic Prime Ultra Titanium 850 W |
| Cooler: | Cryorig R1 Universal 2x 140 mm fan |
| Software: | Windows 10 64-bit April 2018 Update |
| Drivers: | GeForce 411.51 Press Driver |
| Display: | Acer CB240HYKbmjdpr 24" 3840x2160 |

- All games and cards were tested with the drivers listed above, and no performance results were recycled between test systems. Only this exact system with exactly the same configuration was used.

- All games are set to their highest quality setting unless indicated otherwise.

- AA and AF are applied via in-game settings, not via the driver's control panel.

- 1920x1080: Most common monitor resolution (22" - 26").

- 2560x1440: Highest possible 16:9 resolution for commonly available displays (27"-32").

- 3840x2160: 4K Ultra HD resolution, available on the latest high-end monitors.

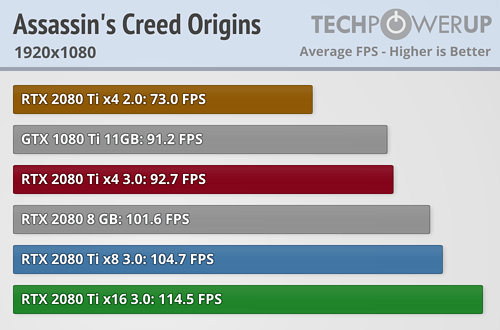

