Monday, November 6th 2017

Intel, AMD MCM Core i7 Design Specs, Benchmarks Leaked

Following today's surprise announcement of an Intel-AMD collaboration (which seems to leave NVIDIA as the only company in a somewhat more fragile position), a number of benchmark leaks for the new Intel + AMD devices have already surfaced. Intel's original announcement was cryptic, as was to be expected given the nature of the product and the time left before it reaches the market, but some details are already pouring out onto the web.

The new Intel products are expected to carry the "Kaby Lake G" codename, where the G goes hand in hand with the much increased graphics power of these solutions compared to less exotic ones - meaning those not packing AMD Radeon graphics. For now, the known product names point to two parts: the Intel Core i7-8705G and the Intel Core i7-8809G. Board names for these are 694E:C0 and 694C:C0, respectively.

The discrete GPUs on these multi-chip modules (MCMs) are both reported as packing 24 compute units with a total of 1536 stream processors. Clock rates vary between 1000 MHz and 1190 MHz: the 694E is the lower-performance part at 1000 MHz, roughly a 16% reduction from the 1190 MHz of the 694C version (which works out to around 3.7 TFLOPs of peak single-precision throughput, or a little over half that of the much-talked-about Xbox One X). Both solutions come with 4 GB of HBM2 memory, clocked at 800 MHz on the 694C version versus 700 MHz on the 694E, and the CPUs are 4-core, 8-thread Kaby Lake parts running at 3.1 GHz base and 4.1 GHz Turbo.
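As a rough cross-check of the clock and throughput figures above, here is a minimal sketch of the usual peak-throughput estimate. It assumes 2 single-precision FLOPs per stream processor per clock (one fused multiply-add), which is the common convention for AMD GCN-style GPUs and not something the leaks themselves state; the function name is purely illustrative. Real sustained performance will be lower, and quoted figures may be based on a different clock.

```python
def peak_fp32_tflops(stream_processors: int, clock_mhz: float) -> float:
    """Theoretical peak FP32 throughput in TFLOPs.

    Assumption: each stream processor retires one fused multiply-add
    (2 FLOPs) per clock. This is an upper bound, not a measured figure.
    """
    flops_per_second = 2 * stream_processors * clock_mhz * 1e6
    return flops_per_second / 1e12


def percent_reduction(high: float, low: float) -> float:
    """Reduction from `high` to `low`, as a percentage of `high`."""
    return (high - low) / high * 100


# Figures taken from the leaked specifications quoted in the article.
STREAM_PROCESSORS = 1536
CLOCK_694C_MHZ = 1190
CLOCK_694E_MHZ = 1000

tflops_694c = peak_fp32_tflops(STREAM_PROCESSORS, CLOCK_694C_MHZ)
tflops_694e = peak_fp32_tflops(STREAM_PROCESSORS, CLOCK_694E_MHZ)
clock_drop = percent_reduction(CLOCK_694C_MHZ, CLOCK_694E_MHZ)

print(f"694C peak: {tflops_694c:.2f} TFLOPs")
print(f"694E peak: {tflops_694e:.2f} TFLOPs")
print(f"Clock reduction 694C -> 694E: {clock_drop:.1f}%")
```

Under these assumptions the 1190 MHz part lands a little above 3.6 TFLOPs and the 1000 MHz part a little above 3 TFLOPs, with the clock gap between them close to 16%.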

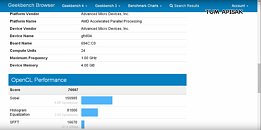

Some benchmarks include GFXBench, where we can see that the Intel Core i7-8809G enjoys an almost 50% performance advantage over the Core i7-8705G. This can probably be laid at the feet of the latter's lower GPU core and HBM2 clocks, though it's likely that some sort of power management is at play here as well - perhaps different TDP configurations, considering the products these SKUs are expected to power? It's also very possible that the Core i7-8705G (694E) features a cut-down version of the AMD GPU.

Moving on to GeekBench, it seems that the Intel Core i7-8809G is scaling its clocks to stay within its power budget, since the reported max clock speed is now lower - 1 GHz against the 1190 MHz previously seen on GFXBench. Here we have a confirmation of the 24 compute units present on the chip, and see a total OpenCL score of 76,607 points.

Next up are some 3D Mark scores and hardware data from the 3D Mark 11 benchmark (Performance preset), taken from user Tum Apisak's YouTube channel, which shed some more light on the expected performance of the Core i7-8809G compared to the Core i7-8705G: the former is around 30% better in graphics tests than the latter. However, the fact that the Core i7-8705G just manages to eke out a win against the Core i7-8809G on the CPU tests (while being virtually trounced on the GPU tests) appears to support the presence of a cut-down GPU. Lower power draw from the GPU likely means that the CPU has more thermal and power headroom to hold its Turbo clocks for longer, which may help explain the higher CPU score.

There's still one benchmark utility to go over, SiSoftware Sandra, though this one is a mess, likely due to the lack of full support for the parts involved. Average capacity (CUs) for the Core i7-8705G (694E:C0) is reported as both 1403 and the previously known 1536, clock speeds are now reported as 550 MHz at most with an average of 539 MHz, and the HBM2 clock is pegged at 500 MHz. This page doesn't really give us much information, and raises more questions than it answers, but nevertheless, it's here for your perusal and consideration alongside the other specs and details.

Wrapping up, and moving on to a real benchmark, we have the ubiquitous Ashes of the Singularity benchmark rearing its head again. Here, we see the 694E:C0 part, the Core i7-8705G's MCM, delivering scores of both 3,900 and 4,800 (again, power targets and performance profiles playing havoc with the benchmark scores and GPU performance?). Settings were at the Low preset, 1080p, where the MCM managed to achieve a 62.9 average framerate on its best run.

So there you have it: performance leaks and benchmarks for the latest "unbelievable" development in our tech industry. Intel and AMD are teaming up, in what would be an interesting and expected move in a world ruled by reason, although we can all be forgiven for thinking, considering our recent experiences, that the world wasn't such a place. All in all, our collective gasp at the Intel-AMD announcement is perfectly understandable. The rumor mill did manage to mill this one out.

Sources:

Tum Apisak's YouTube Channel, Tum Apisak's YouTube Channel Video #2, GFXBench, GFXBench


48 Comments on Intel, AMD MCM Core i7 Design Specs, Benchmarks Leaked

I wonder if Intel's cores are connected to the HBCC and the HBCC serves as the memory controller (uses HBM as L4 cache and DDR4 as overflow). If it does, the CPU itself could see a serious boost from the L4 cache.

I'm thinking the switch 2.0 :P

Again unlikely. AMD would have to share some closely guarded secrets of their GPU if they're doing this, and from what we know there is no IP sharing in place. Intel doesn't get by on wafer-thin margins, unless they're doing contra revenues & all that stuff. The next console chip will still be from AMD, or Nvidia if we're talking handhelds, since Intel can't match either in terms of their GPU prowess.

The 694C is the slow one and 694E is the fast one.

As such, Geekbench is reporting the correct frequency because it's the 694C being tested.

Sometimes, to reach 25% higher performance, you have to sacrifice 50% of your efficiency. Vega actually seems to behave very well when used in lower power scenarios, and I won't be surprised if it looks great compared to Pascal on the mobile GPU side.

wonder if it can be overclocked, hihi

edit: if it can go beyond 1200mhz then great...

PS. already used HD 7870/50 before, both worked flawlessly at 1200mhz

Really this is what MacBooks are going to get and, for compute and limited gaming, this will be great. :)

On the other hand, it's just about the voltage and overall config. AMD's GPUs and CPUs can use lower voltage and be really efficient. It's just that AMD tends to pump them up with voltage, probably to make the most of the yield. It's going to be great if it works well for the ultrathin notebooks. Those are always on Intel iGPUs AFAIK. This is a whole new world for them and I think they are very popular.

Not sure about macbooks. Apple should be wanting to use AMD CPUs right about now. Maybe this will help intel keep apple on board since apple is bent on AMD GPUs.

Polaris on mobile is something like 75W while only clocking in slightly under the desktop parts.

10~12W saved by using HBM2 as well. The GPU can probably fall under 35W TDP.

What was the point of AMD and Intel teaming up for this.

The top end gaming laptops are oversized, bulky pieces of plastic that basically are useless as a portable gaming or computing machine. This is no threat to that super ultra niche.

The processor speed is 3.1ghz, that is the same speed of the i7 6700hq on laptops. The 6700hq top out at 3.1ghz on 4 cores.

Intel announced its cooperation with AMD this morning; the two will work together to create an integrated processor based on Intel's eighth-generation Core processor and AMD's Vega GPU. It is reported that the fully enabled version of the Vega GPU has 24 compute units and 1536 stream processors, runs at up to 1100MHz, and uses 4GB of HBM2 memory.

In the 3DMark 11 run leaked this morning, the P score was only 4000 points, which is obviously not normal; it later turned out that the GPU was running at only 300MHz. Now pcper has published a new 3DMark 11 Performance run for the integrated processor, which appears to have been made at the full 1100MHz.

According to Pcper's latest table, the Intel/AMD integrated processor reaches 14127 points in the 3DMark 11 P-mode graphics score, 31% ahead of the GTX 1050 Ti, while the GTX 1060 scores 16235 points; in other words, this integrated GPU delivers about 87% of the GTX 1060's performance. Its score in Ashes of the Singularity also reached 3300 points.
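The percentages in the comparison above can be reproduced with simple ratios. This is a minimal sketch using only the two scores quoted in this comment; the GTX 1050 Ti score is not given, so the value computed for it is merely what the "31% ahead" claim would imply, not a measured result.

```python
# 3DMark 11 Performance graphics scores quoted in the comment above.
INTEL_AMD_MCM_SCORE = 14127
GTX_1060_SCORE = 16235

# Claimed lead over the GTX 1050 Ti (the 1050 Ti score itself is not quoted).
CLAIMED_LEAD_OVER_1050_TI = 0.31

# Share of GTX 1060 performance delivered by the Intel/AMD part.
share_of_1060 = INTEL_AMD_MCM_SCORE / GTX_1060_SCORE

# GTX 1050 Ti score implied by the 31% claim (an inference, not a measurement).
implied_1050_ti_score = INTEL_AMD_MCM_SCORE / (1 + CLAIMED_LEAD_OVER_1050_TI)

print(f"Share of GTX 1060 score: {share_of_1060:.1%}")
print(f"Implied GTX 1050 Ti score: {implied_1050_ti_score:.0f}")
```

The first ratio comes out at about 87%, matching the figure in the comment, and the implied GTX 1050 Ti score is a little under 10,800 points.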

Other foreign media have said that the Intel/AMD integrated processor comes with multiple versions of the integrated GPU: the low-end version performs about on par with the GTX 950, while the high-end version competes with the Radeon R9 380.

Because HBM2 memory is expensive, this seemingly very powerful processor is expected to appear in high-end notebooks such as the Apple MacBook, and Intel's own NUC will also be available with it. Of course, this CPU is still an engineering sample, so it is hard to say how the final product will perform.