Friday, January 17th 2020

AMD Allegedly Bolstering Radeon RX 5600 XT in Response to RTX 2060 Price Cut

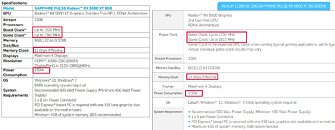

AMD has allegedly changed the specifications of its Radeon RX 5600 XT graphics card through a BIOS update being pushed to manufacturers, according to an HKEPC report. According to the report, AMD has increased the clock speeds of the RX 5600 XT to 1615 MHz gaming and 1750 MHz boost, versus the 1375 MHz gaming and 1560 MHz boost listed on AMD's CES press-event slides detailing the card. Confirmation of this comes from the product page of Sapphire's RX 5600 XT Pulse graphics card, which doesn't bear any "OC" marking in either the product name or box art, yet has an updated specs tab referencing the new clock speeds.

Increased GPU (engine) clocks aren't all: Sapphire also increased the memory speed from 12 Gbps to 14 Gbps (a roughly 17% increase in memory bandwidth). Also, the typical board power ("power consumption") value has gone up from 150 W to 160 W, indicating a possible power-limit increase. These last-minute changes could significantly change the performance numbers of the RX 5600 XT, in a bid to make it more competitive with the GeForce RTX 2060. Earlier today, it was reported that NVIDIA formally cut the price of the RTX 2060 to $299, which would put it within $20 of the RX 5600 XT and its launch price of $279.
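For readers who want to check the arithmetic, here is a minimal sketch that works out the relative size of each reported change. It uses only the figures quoted above. The helper name `pct_increase` is made up for this illustration, and the bandwidth figure assumes the memory bus width is unchanged, so that bandwidth scales linearly with the per-pin data rate.

```python
def pct_increase(old: float, new: float) -> float:
    """Relative increase from old to new, in percent."""
    return (new - old) / old * 100.0


# Figures quoted in the report: (original spec, updated spec).
spec_changes = {
    "game clock (MHz)": (1375, 1615),
    "boost clock (MHz)": (1560, 1750),
    # Assumption: bus width is unchanged, so the bandwidth gain
    # equals the data-rate gain.
    "memory data rate (Gbps)": (12, 14),
    "typical board power (W)": (150, 160),
}

for name, (old, new) in spec_changes.items():
    print(f"{name}: {old} -> {new} (+{pct_increase(old, new):.1f}%)")

# Price gap after the reported RTX 2060 cut.
rtx_2060_price = 299
rx_5600_xt_price = 279
print(f"price gap: ${rtx_2060_price - rx_5600_xt_price}")
```

On these numbers, the game clock sees the largest relative bump, with the memory data rate close behind and board power rising by a much smaller fraction.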

Sources:

HKEPC, VideoCardz


98 Comments on AMD Allegedly Bolstering Radeon RX 5600 XT in Response to RTX 2060 Price Cut

5600xt has 12gbps memory.

and 5600 ?

This is a shit thing to do, but over the last few years Nvidia has established itself as the market leader in GPU sales and is very, very aggressive in trying to monopolize that position by releasing price- or performance-adjusted slight upgrades to its product line (as with the Supers) to diminish the saleability of competing cards. They have done this for a long time now: Ti, GS, GT, GTX, etc.

How would you compete with a company that waits for your release then strives to marginalize your efforts to sell it?

AMD's hand has been forced, do this shit or get beat in sales quarter after quarter.

They also have to contend with some sites' reviewer biases. I saw many reviewers extolling RTX with two games at that time like it even mattered; to a lot of people it didn't, yet its absence was a major detractor for some reviewers.

AND FINALLY, I would much rather my chosen GPU company upgrade my GPU purchase for free by BIOS than wait six months and then bring out the actual full working version of my card with "Super" added to it. Personally, I don't even swap GPUs every year, and I don't want my GPU looking like the sad-sack, slight let-down version within six months.

And 5600 12GBps

I wonder how switching from 12 to 14 Gbps is possible just before the launch. I mean, they would have to use different memory parts. Or is it 12 Gbps memory, factory overclocked?

Well, if it is, it's the same card, just with higher stock clocks and less OC headroom. It's not really bolstering, it's a factory OC.

;)

they were just afraid this 280 card can be flashed and tuned to match 5700xt

I'm sure this GPU will match the 5700 at 1080p but at higher res the 5700 will pull ahead due to the bandwidth

Clearly you don't understand how the free market is supposed to work. We (consumers) want multiple actors to react to each other and provide better deals to us. AMD's failure to produce competitive GPUs in the mid-range and high-end the past 5+ years is only on them.

This BS complaining was the very same back when GTX 980 Ti launched, or when GTX 1080 Ti launched and GTX 980 dropped in price, or when Turing launched, or when Turing refresh launched…

And no, Nvidia doesn't want a monopoly; that would result in many anti-trust cases. Review bias is clearly in favor of AMD in most cases. Just look at all those reviews that claimed that GCN would be faster in DirectX 12 and Vulkan. Or all the reviews claiming that "AMD gets better over time", without any evidence to support it.

If reviewers weren't testing for the "it's new so we gotta include it" factor, it'd be a pretty bad day at AMD.

Reviews should contain a good sample of representative games, regardless of which API they may use. If it turns out that 80% of these games scale better on hardware from one maker then that's just a representation of reality, provided you have made a representative selection.

Second, I do understand. What I said was happening IS happening, and yes, I fully agree it is a reasonable corporate strategy and not per se something to lambast Nvidia for.

My point is that it is unfair to lambast AMD for using reasonable corporate tactics to counter Nvidia's tactics, that is all.

Clearly you DO NOT UNDERSTAND: corporations like NVIDIA, INTEL, and AMD do not want a competitive landscape at ALL. They don't mind competition, but they don't want it to have much of a chance of success, so if they can affect that, it's a plus for them and a reasonable corporate strategy.

AND YES, NVIDIA'S ACTIONS these last few years have been massively corrosive to any idea of sharing the GPU market, bordering on guerrilla market warfare: AMD releases, Nvidia repositions, and repeat.

This is just AMD's way of staying relevant, and no less OK than Nvidia's: no better, but no worse. Though personally, as I said, I prefer the card I bought not to be superseded so quickly (not an odd or unusual attitude; in fact, the norm).

Oh, and I'm not getting pulled into some BS tit-for-tat AMD v Nvidia shit. They both have examples where they have shined or failed big, go figure.

It won't reach XT speeds... it's short quite a bit on stream processors and memory bandwidth. 5700... maybe... but even overclocked I doubt it could.

Also, the 5600 is cut down further and is only an OEM part.

'Cause apparently they were fine with 230 W on the RX 590, but 160 W on the 5600 XT was too much :rolleyes:

Let's just admit the obvious: AMD is trying to become Nvidia, just with inferior products.

AMD had this coming ever since they decided to stall GCN iterations, and jump into new hardware advances to keep selling us archaic old junk. Vega, Fury, and even Radeon VII are evidence of that. It's no coincidence that RDNA suddenly does manage to provide... And none of them were profitable for AMD, and rightly so. So what they have now: their focus on midrange turned from choice into necessity, and they have even more catching up to do. And more market share to recover. Go figure... even now, a full year past Turing, they still cannot quite match it: not in top (or sub-top) end perf, not in efficiency (though close), and they have no RT. Painful. But they are happy 'baiting' with a fifty dollar price cut?! Last minute spec bumps?! WTF kind of business is that anyway? And why the hell would we care? These aren't even baby steps, neither for red nor green.

It's just yet another horrible business move played out in front of us. Stop blaming the winner for how the loser stopped playing ball. AMD doesn't need that kind of fanbase. I also remember an AMD that had an HD7970 and a panicking Nvidia trying to make its equivalent look better, even though it was more expensive and had less to offer. We didn't see any of this nonsense back then. What we did see was a full gen (7xx) that quickly followed Kepler and pretty much made the entire AMD stack obsolete with major price cuts and a small, but very noticeable perf bump at every midrange>high end price point. And AMD then countered with Hawaii, Titan became a GTX card and more reasonably priced... See, that's what we need. That is competition. The current exercise is just well played and timed marketing, in both camps. Avoid this gen, it's that simple; if you want competition, neither camp deserves a reward this round.

They clearly mentioned that was my observation, they didn't attack Nvidia, but you see what you see, eh.

I would also point to my statement that this is a shit move, but about the same as what their competitors are doing too.

No red tint here, calm yourself.