Monday, November 2nd 2020

AMD Releases Even More RX 6900 XT and RX 6800 XT Benchmarks Tested on Ryzen 9 5900X

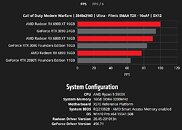

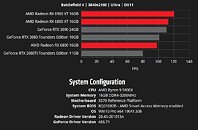

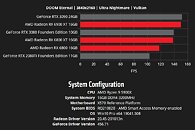

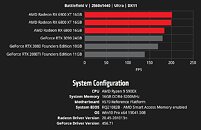

AMD sent ripples with its late-October event launching the Radeon RX 6000 series RDNA2 "Big Navi" graphics cards, when it claimed that the top RX 6000 series parts compete with the very fastest GeForce "Ampere" RTX 30-series graphics cards, marking the company's return to the high-end graphics market. In its announcement press-deck, AMD had shown the $579 RX 6800 beating the RTX 2080 Ti (essentially the RTX 3070), the $649 RX 6800 XT trading blows with the $699 RTX 3080, and the top $999 RX 6900 XT performing in the same league as the $1,499 RTX 3090. Over the weekend, the company released even more benchmarks, with the RX 6000 series GPUs and their competition from NVIDIA being tested by AMD on a platform powered by the Ryzen 9 5900X "Zen 3" 12-core processor.

AMD released its benchmark numbers as interactive bar graphs on its website. You can select from ten real-world games, two resolutions (1440p and 4K UHD), and, for certain tests, game settings presets and the 3D API. Among the games are Battlefield V, Call of Duty Modern Warfare (2019), Tom Clancy's The Division 2, Borderlands 3, DOOM Eternal, Forza Horizon 4, Gears 5, Resident Evil 3, Shadow of the Tomb Raider, and Wolfenstein Youngblood. In several of these tests, the RX 6800 XT and RX 6900 XT are shown taking the fight to NVIDIA's high-end RTX 3080 and RTX 3090, while the RX 6800 is shown to be significantly faster than the RTX 2080 Ti (roughly RTX 3070 scores). The Ryzen 9 5900X itself is claimed to be a faster gaming processor than Intel's Core i9-10900K, and features a PCI-Express 4.0 interface for these next-gen GPUs. Find more results and the interactive graphs in the source link below.

Source:

AMD Gaming Benchmarks

147 Comments on AMD Releases Even More RX 6900 XT and RX 6800 XT Benchmarks Tested on Ryzen 9 5900X

Why say that 60 FPS is enough for single player games? There is absolutely no logic to that. You think everyone who has a higher refresh display will think to themselves that they need the highest possible frame rate in a multiplayer title, but when switching to a single player one suddenly they no longer need more than precisely 60? How the hell does that work, truly mind boggling.

I bought the very first 144hz 1440p display, but I played The Witcher 3 at 60fps with the best visuals, no point in downgrading visuals just to get >60FPS.

Same with Metro Exodus, Control, why would I sacrifice visuals when I have ~60FPS already?

Now switching to competitive games like PUBG, Modern Warfare, Overwatch and I lower every setting just to get the highest FPS, why wouldn't I ?

Because I can give you many editorials who would target 60FPS gaming

Just be glad for the fricking competition, it's a win for all us customers. Nvidia and AMD really don't care about us, they just wanna make as much money as possible.

The RTX you refer to is nVidia's proprietary implementation of Microsoft's DirectX raytracing. How (and why) do you expect AMD hardware to perform better than nvidia hardware in ®RTX games? It makes no sense for AMD to even attempt that. Especially since, going forward, most games will implement AMD's (proprietary or open) version of raytracing, and only "some" of them will ship with ®RTX support along the console version of raytracing.

And based on how well AMD's raytracing looks and performs, nvidia's ®RTX may (in time) become a niche feature for select sponsored games... To keep ®RTX relevant, nvidia will have to invest more than it's worth on hardware and software (game dev partners) development, and given nvidia's new focus on enterprise, it may be a hard sell (to investors).

However, buying a high refresh monitor and then trying to convince yourself or others that you should actually play at 60 because "there is no point" sounds like a really intelligent conclusion, I gotta say. Because that's why most people buy a high refresh monitor, to then play at 60hz, right ?

Different players have different preferences when gaming.

Almost everyone recommends turning down visuals in AAA games to hit 144hz 1440p ? Yeah I really need some confirmation on that. No one would want to play AAA games with Low settings just to hit 144hz, that I'm sure of.

I didn't say anyone should play at 60FPS. If you have already maxed out all the graphical settings and are still getting >60FPS, then play at >60FPS, although capping the framerate really helps with input latency with Nvidia Low Latency and AMD Anti Lag in certain games.

From 2016: "Given its old-gen nature, Dragon’s Dogma: Dark Arisen is not really a demanding title."

www.tweaktown.com/guides/7542/dragons-dogma-dark-arisen-gaming-graphics-performance-tweak-guide/index.html

Just saying. ;)

It's nothing like it, really. AMD, like NV, uses DXR. They're both using the same API for RT.

RTX is hardware on the card. NV cards use DXR API for RT just as AMD will.

It's just fanboys shouting at fanboys at this point. These same people would have been the ones mocking Geforce 256 back in the day about HWT&L, pay them no heed.

We're just in that awkward phase now where DXR is still an unknown for most people and we still don't know for sure if this year's or maybe the next cycle is the one that will bring mainstream acceptance/performance to RT. I personally am not aware of any non DXR games, though I do believe those nvidia developed ones like Quake RTX are probably going to be nvidia HW only. I doubt games like Control aren't going to work on AMD, I suspect it's just AMD's software side of things being still not ready enough. I would expect a lot of growing pains for the first half of 2021 and AMD DXR. Hopefully I'm wrong, but they are going into this dealing with a 2 year handicap.

New games will have cross brand hw to work with soon, and as someone with a 2070super all I can say is the DXR game library is veeery small still, and it's only going to really grow now with the new consoles since more or less all cross platform AAA titles will be coming with some form of RT once this first cross platform year of releases is over. (and already some of those cross plats are coming with RT anyway) So it bodes well overall for us mid to long term, regardless of hardware brand choices or.. god forbid loyalties.

Like I said, for competitive games, like the multiplayer version of Div2, I would use Low Settings to get the highest FPS I can get.

Now tell me which do you prefer with your current GPU:

RDR2 High setting ~60fps or 144fps with low settings

AC O High Setting ~60 fps or 144fps with low settings

Horizon Zero Dawn High Setting ~60fps or 144fps with low settings

Well to be clear when I said 60FPS, it's for the minimum FPS.

Yeah sure, if you count auto-overclocking and a proprietary feature (SAM) that make the 6900XT equal to the 3090, see the hypocrisy there? Also I can find higher benchmark numbers for 3080/3090 online, so take AMD's numbers with a grain of salt.

Oh! and CUDA is no good for gaming - Whilst Ray Tracing kills performance without resorting to DLSS.

Raytracing is todays equivalent of Hairworks or Physx.

The leather jacket openly lied to Nvidia's consumer base, claiming the 3090 was "Titan-like" when it clearly isn't, and promising plenty of stock for buyers. The reality is that abysmal yields are the reason the Ampere series are almost impossible to come by.

This is fraud, and review sites and channels should warn people and condemn nvidia for this, but very few do.