Tuesday, December 3rd 2013

"Vishera" End Of The Line for AMD FX CPUs: Roadmap

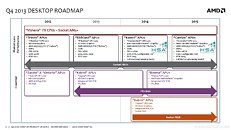

We'd feared something like this would happen for some time now, and leaked AMD product roadmaps appear to confirm it: AMD FX "Vishera" is the last line of CPUs from AMD. The company will focus only on APUs from here onward, and at the very most, one could expect APU core counts to go up from quad-core, where they have been stuck since the A-Series "Llano" and will remain with the 2014 A-Series "Kaveri," too.

The alleged AMD roadmap slide, leaked to the web by ProHardver.hu, shows socket AM3+ "Vishera" staying on AMD's product stack for as far as AMD's eye can see, deep into 2015. AMD wouldn't mark its roadmap slide this way unless it were planning to throw in the towel on AM3+. 2015 will see the introduction of "Carrizo," an APU that succeeds "Kaveri" and will be based on the future-generation "Excavator" CPU micro-architecture and a future-generation GPU architecture, along with a full implementation of the HSA programming model. "Kabini" will run into mid-2014, at which point "Beema" will succeed it.

Unless AMD is planning 6-core and 8-core APUs with "Carrizo" (we know that "Kaveri" is neither), the roadmap reveals that AMD has given up on making processors priced above $150. The company could focus its client products division on APUs and GPUs, while multi-core processors could be kept alive by the enterprise products division under the Opteron banner, although we've not seen roadmaps to back that theory.

Sources:

ProHardver.hu, SweClockers

133 Comments on "Vishera" End Of The Line for AMD FX CPUs: Roadmap

Having said this, if AMD used their ARM license and made an ARM CPU and motherboard with 4 or more SATA ports, many people would start using them. Hint hint... low power, high performance and loads of SATA ports would make a great NAS, since my server/NAS, like many other people's, doesn't run Windows.

The billion dollar fine they were hit with was a slap on the wrist for basically crippling their competition.

ARM might be interesting, but I suspect that it will take forever for developers to switch over from x86. It could be a good thing if it happens though, a clean break for the next gen of computing.

Even before Intel bribed Dell et al., AMD had issues with fabrication capacity. Under the cross-license agreement, AMD could outsource up to ~20% of their production to other foundries. Even with markets denied to AMD, they could not satisfy the demands of the customers that they had. It wasn't until the situation became acute that AMD approached Chartered Semi, and even then did not utilize the full 20% outsource allocation available (~7% IIRC). Why the reluctance to use non-AMD foundries at the expense of market share? Answer: W. Jerry "real men have fabs" Sanders. It is no coincidence that AMD only explored the use of third-party foundries to add capacity when Sanders stepped down.

That is likely the primary reason that Intel settled with AMD for a relatively paltry $1bn (remember that Nvidia's settlement was $1.25bn, and the EU antitrust fine was $1.45bn by way of comparison). The secondary reason was just as likely AMD's desperate need to pay for debt servicing (see below) which is why the low-ball $1bn was accepted.

So, Intel is cause #1, Sanders' hubris is cause #2, and of course, AMD's own lack of strategic planning is cause #3... What other company overpays by 100% for an acquisition ($5.4bn total paid for ATI: $1.7bn in cash from AMD, $2.5bn borrowed from lending institutions, $1.2bn in AMD shares) only to write down $1.77bn less than a year later, and another $880 million six months after that? Note that the money borrowed for the ATI buyout (which has served as a millstone around AMD's neck ever since) is actually less than the write-down associated with AMD's initial overvaluation of ATI. Also note that AMD was the only company interested in buying ATI in 2006.

That is why AMD acquired SeaMicro. Investing in a company that has an existing knowledge base of ARM and its implementation is easier and less resource-hungry than bootstrapping AMD into the ARM environment.

A stock-speed 8320 runs quite cool, like 50°C cool.

@HumanSmoke Also note that AMD approached Nvidia first to buy them out before they turned to ATI, which would have cost another $6 billion at the time.

A failed buyout/merger attempt does not mitigate the fact that AMD overpaid for ATI, and that overpayment resulted in the company pouring income into debt servicing rather than R&D and maintaining its foundry business. Nor does it mitigate the fact that AMD were slow to realize that the market for x86 was increasing substantially faster than their own estimates.

AMD have always been a reactive company that has allowed the current state of the market to dictate their product lines, rather than think strategically and actually shape or create the market. Hardly surprising when you consider that AMD was formed by salesmen as opposed to Intel and Nvidia being formed by engineers.

Secondly, if you're gonna pull facts out of your arse:

On July 21st, 2006, the market valued NVIDIA at $6.2 billion. On the day AMD announced it was going to buy ATI, NVIDIA's market cap increased to $6.9 billion... and AMD sat at around $10.5 billion.

Given that AMD (over)paid twice ATI's effective value, I could see how you'd also think that Hector would also overpay for Nvidia.

Why am I not surprised. AMD's market cap is a quarter of its FY 2006 value, and they've lost market share in x86 and GPU (discrete and overall) since the ATI acquisition. Are you Hector Ruin's biographer by any chance?

Secondly, I'm not pulling anything out of my arse. I remember very clearly reading all about it back then on this very forum, the whole "AMD might buy Nvidia but didn't and bought ATI" bla bla bla. The only part I did get wrong was what AMD was worth; I got it confused with market share at the time.

Here are three articles that also show what Nvidia was worth at the time AMD was thinking about buying it. If these are wrong, then don't blame the reader; blame the site for posting false information.

www.tomsguide.com/us/amd-nvidia-merger,review-1061-4.html

www.forbes.com/sites/briancaulfield/2012/02/22/amd-talked-with-nvidia-about-acquisition-before-grabbing-ati/

www.neowin.net/news/rumor-amd-tried-to-buy-nvidia-before-buying-ati

Not from what I read on this forum, once again, seeing that AMD is now closer to 40-50% market share. Yes, it has lost in its CPU division, but no way have they lost in the GPU division; if anybody thinks that, they're totally mad. And how can they lose market share in GPU since 2006? That's impossible, as they never owned any GPU division till after they bought ATI. :S

Nope, I'm not. What gives you that stupid idea?

Now we all play Intel's monopoly tune on CPU upgrades. What fucking joy. :rolleyes:

www.chw.net/2013/12/amd-alista-futuros-cpus-fx-series-con-controlador-de-memoria-ddr4/

:D

However, in terms of value for the money in the class of machines I've been building, AMD has been the winner. Especially since I don't have to spend $250+ just to get a processor I can overclock. I like that I can still buy a cheap processor and get a few more horsepower out of it by overclocking with an AMD.

In the official slide released in the other thread on this topic, they confirmed that the AM3+ socket processors would stay on 32nm through at least 2014. I was kind of hoping for a 28/22nm refresh too, but it doesn't look hopeful.

AMD eventually went the value route with their CPUs and do give you a lot of CPU for your money, but it looks like this isn't profitable enough for them as they need to invest the money back into R&D and these things aren't cheap to make.

I think there's no getting around the fact that each generation of products from one manufacturer should generally leapfrog the performance of the competition in order to stay in business, keeping the market fresh and vibrant and customers dissatisfied with their current systems and hence wanting to upgrade. Clearly that's not been happening.

With all this so-called "good enough" performance, it becomes a race to the bottom on prices that can't be sustained forever. The fact we're also approaching the end of Moore's law really isn't helping, either.

If clock speeds had continued to scale past the Pentium 4's clock speeds of 3GHz+ back in 2003, to say around 20GHz+ now, along with architectural improvements, I reckon our PCs would be capable of many more fancy and, importantly, useful functions that we're not seeing today.

Raw clockspeed = branch misprediction increases, increased heat and power.

More numerous shorter pipelines forsaking absolute speed for an actual increase in throughput in a lower power envelope has proven to be the way to go. If NetBurst taught anyone anything, it's that straight line speed from a deep pipeline has limited growth potential.

There doesn't seem to be a paradigm shift in materials in the offing in the short term of the kind that made the "Gigahertz race" a spectator sport. Moving from aluminium to copper interconnects was a huge leap. Moving to more esoteric materials (Indium/Gallium compounds) doesn't look like it will net the same revolutionary jump.

This would have quite likely enabled new functions and features that we can't even think of now, because we're effectively in a "box" that we can't see out of. Raw speed has enabled many things we take for granted today, so revving it right up would have likely given us many more things like this than we have now. Better artificial intelligence would have probably been one of them.

If you're musing on what might have been, there are plenty of "what if" scenarios that actually could have happened and would have substantially more impact on the industry:

1. Bob Noyce doesn't invest and supply start up capital for W. Jerry Sanders III. Without Noyce's investment, other backers shy away (as it was, Sanders only made the investor deadline with five minutes and $5K to spare). AMD kaput before it starts, IBM's second source for 8088 processors likely falls to National Semi, Motorola, or Zilog.

2. Gary Kildall actually gives a shit about running a company and keeps his appointment with IBM's reps rather than disappearing to fly his plane. IBM chooses CP/M for the Model 5150... Bill Gates and MS-DOS don't get a look in.

3. Jim Harris, Bill Murto, and Rod Canion decide to go with the option of sinking their money into a Mexican restaurant. Compaq doesn't happen, the IBM ROM-BIOS isn't reverse engineered, and the IBM PC clone business either doesn't happen or is stalled past the tipping point where anyone can undercut Big Blue. More to the point, IBM would have then realized that personal computing's growth warranted more attention/budget than was being lavished on its mainframe and minicomputer business.

These things all could have happened quite easily, just as Hewlett-Packard could have listened to Steve Wozniak when he approached them about building a personal computer.

Actual intelligence (i.e. the brain) uses parallelization. Speed is pretty much a constant AFAIK, limited by chemical and electromagnetic action. Boosting the latter seems to lead to erratic behaviour (analogous to cache misses?), losing parallelization (lowering core count?) leads to Alzheimer's and a newfound love of reality TV.