Wednesday, March 10th 2021

Intel Core i9 and Core i7 "Rocket Lake" Lineup Leaked, Claims Beating Ryzen 9 5900X

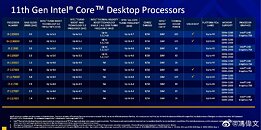

Intel is planning to debut its 11th Generation Core "Rocket Lake-S" desktop processor family with a fairly large selection of SKUs, according to leaked company slides shared by VideoCardz, which appear to come from the same source as an earlier report from today that talks about double-digit percent gaming performance gains over the previous generation. Just the Core i9 and Core i7 series add up to 10 SKUs between them. These include unlocked and iGPU-enabled "K" SKUs, unlocked but iGPU-disabled "KF" SKUs, locked but iGPU-enabled parts, and locked and iGPU-disabled "F" parts.

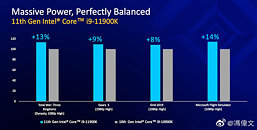

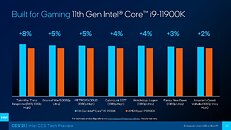

With "Rocket Lake-S," Intel appears to have hit a ceiling with the number of CPU cores it can cram onto a die alongside an iGPU, on the 75 mm x 75 mm LGA package, while retaining its 14 nm silicon fabrication node. Both the Core i9-11900 series and the Core i7-11700 series are 8-core/16-thread parts, with an identical amount of cache. They are differentiated on the basis of clock speeds as tabled below, and the lack of the Thermal Velocity Boost feature on the Core i7 parts. The Core i5 series "Rocket Lake-S" parts are reportedly 6-core/12-thread.

Some additional game performance slides were leaked to the web. The first one below (also posted earlier today) deals with comparisons between the i9-11900K and the previous-generation flagship, the 10-core i9-10900K. The second slide compares the i9-11900K to the AMD Ryzen 9 5900X 12-core processor, where Intel claims performance gains of anywhere from 2% to 8%, across a broader selection of games than in the comparison to the i9-10900K. The performance lead gets higher with multi-threaded strategy games like "Total War," but slims down to 2% with first-person/third-person games such as "Far Cry: New Dawn" and "Assassin's Creed Valhalla."

Sources:

VideoCardz, HXL (Twitter)

99 Comments on Intel Core i9 and Core i7 "Rocket Lake" Lineup Leaked, Claims Beating Ryzen 9 5900X

That's without considering any real professional uses, which are far more than just POV-Ray. As a software developer I both fully utilize CPUs/GPUs myself and deal with demanding programs others write, including IDEs, which can be surprisingly taxing these days.

I'm not too impressed with a developer who runs 40 tabs on Chrome, Plex, an image editor (why, are you also a visual artist?), some 3rd party file sync app, and then removes virus scan from their PC where they do development work.. with that file sync running and automating virus spread... That's *dumb*. You'd get fired quick in most organizations.

There are edge cases and typically they are visual design and media artists. Most developers are neither. Your scenario is not credible.

What's crazy with Microcenter, none - zero - of the GPUs for sale can be reserved. You have to go to the store, and the on hand quantities are low.

But with those prices, what's the point of getting a new CPU? Who's gonna go buy a $300 5600X so they can pair it with a $350 RX 570?

What's even crazier about such a pair up, there's this review site called Vortez, that has like the oldest test components on the planet. In all his reviews he uses an RX 480, some old Corsair LPX 3 GHz DDR4, and an old 128GB Intel SSD I've never heard of.

On that setup, guess which CPU is the fastest at Tomb Raider?

10900K? nope. 5800X? Nope. 5600X? Nope.

Zen 1.5 2600X.

And an 8700K beats everything at Total War: Warhammer at 1080p with that setup - it beats Comet Lake and Zen 3.

So, entirely possible, someone getting a 5600X for gaming but stuck with one of those old GPUs, may well actually get lower FPS than the guy that went cheap on the CPU.

If you are as well-versed in this area as you claim, you should know that AVs are mostly another closed source program that consumes more resources and comes with potential privacy issues. Good downloading habits, encryption, authentication, VMs, etc. are much more important. And you should know that file sync won't spread a virus any further unless the file is also executed (or at least opened, if the document processor has a vulnerability) elsewhere.

I have to note that this is my personal computer, not the corporate one. I basically don't care even if this computer gets infected. Everything is backed up with snapshots and multiple copies, and everything important is encrypted with AES and randomly generated passwords. If you are talking about corporate computers, the companies I worked at don't deploy commercial AV solutions (maybe due to privacy concerns), but they do use related technologies, including checksum databases. Your 30-year experience might differ.

I'm also an amateur photographer with a recent focus on astro (DeepSkyStacker etc). No idea why it's not credible but up to you to decide. :)

But having a little margin over sustained load is good anyway, as the PSU lasts longer that way, so PSU recommendations usually don't change because of a slightly higher peak power.

Well, of course there is little difference during idle, the question is the characteristics during a realistic load. And the reality is that even for most power users, it's generally "medium threaded" at most, because synchronized workloads don't scale perfectly. And "running many things at once" is not a good excuse either, because it usually runs into other bottlenecks long before core count. Even when I try to stress out my 6-core with a real workload, with a couple of VMs, compiling a decent sized project and running a bunch of tabs in a browser, it rarely goes much over 50% load, and runs into scheduling bottlenecks long before maxing out the cores.

Chrome spawns an incredible amount of threads, up to one per CPU thread for each tab it seems, and that quickly adds up to hundreds of threads. This will often "overload" the OS scheduler, and can cause serious stutter (on the desktop) even though there is barely any load on the CPU. Unless those tabs are all videos or something, you are more likely to experience this kind of problem from web browsers before running out of CPU cores. And having many more CPU cores means Chrome will spawn even more worker threads for each tab, bothering your scheduler even more.

There are certainly many IDEs and text editors that are very heavy these days, but very few if any of them get much better with more threads. Especially the non-native ones are horrible, like Eclipse, which has always been painfully sluggish, but even the JavaScript text editors like Atom and VS Code, which are so popular these days, are incredibly laggy and unreliable, to the point where they can miss/misinterpret key presses. These problems are mostly due to cache misses, and there is little to do about that other than writing native cache optimized code. It's sad that these tools are worse than the tools we had back in the 90s.

I'm personally "allergic" to lag when typing code, there is little that bugs me more than an unresponsive text editor (or IDE). I can deal with operations being slow, but unresponsive typing and button clicks become a constant annoyance, and lead to misinterpretation of input plus loss of focus. I did in fact write most of my code (at work and home) in Gedit from ~2009-2015, because I'll choose a plain text editor over sluggish "IDE features" any day; I only eventually switched to Sublime due to newer Gedit being buggy. Still today I stick to a "plain" text editor whenever possible; that plus a dropdown terminal, tmux, grep and a few other specific tools and I'll "beat" an IDE in productivity any day, and I'm saying that as someone who was a "big IDE guy" in the 90s and early 2000s.

While I have no reason to doubt your evaluation of your fellow developer, I seriously doubt his/her lack of professionalism has much to do with the specifics you mentioned, or at least it depends on how he/she uses the tools. So more context is needed to justify such claims.

While it may be less common for programmers to possess graphical skills, they can certainly be a useful asset. I consider myself an old school programmer, yet have basic image editing and drawing skills, which have been useful quite often, for mockups, documentation or graphical elements in software.

And don't you dare judge people based on tab usage :p

It is very unlikely that an old game will scale better with an old CPU. There are no ISA differences or fundamental scaling characteristics between these CPUs to exploit in "optimizations".

This is probably just a bad test with poor testing procedures. :rolleyes:

Which gets back to the original point, if you're having to buy older hardware, you may not get the expected results with new CPUs.

Check this out as example. There are some instructions where a Phenom II has 4X the "IPC" of Skylake:

github.com/TheRainDoodle/Phenom-II-Benchmark

Your further feedback, such as regarding lack of professionalism, is certainly welcome.

As for Warhammer, well yeah, Warhammer is single thread limited and loves the GHz, so Intel still has an advantage here. Comet Lake has trouble maintaining its higher boost clocks and has higher core latency than Coffee Lake had, which is likely holding something else back.

But the vast majority of games will favor the newest hardware.

Having good security policies is useful, but making ridiculous and pointless policies results in people not respecting them. I've seen a lot in this category from previous employers, including requiring everyone to change passwords every three months, yet having a common password for every critical internal service. Or a "security expert" suddenly wanting their Linux workstations to install AV… And some even more ridiculous ones with possible exploits, which I'm not going to mention specifics about because they are likely still in place.

I know about it, and it's a curiosity, but beyond that it's not applicable to making whole applications run faster on older hardware.

Running multiple editors or IDEs is certainly common, I do it all the time.

But do they cause serious load on many cores simultaneously though?

But do any of these tools scale significantly beyond 6-8 cores though?

I'm sorry, perhaps we miscommunicated? I thought RandallFlagg was referring to a colleague; perhaps I mixed two things together, my head is too tired. I certainly wasn't referring to you. Anyway, I meant to say that the things mentioned were not signs of a lack of professionalism. :)

(And I do agree about AV)

I've had numerous occasions where each VS Code window consumed a full core or so. So around 3 cores in use: not saturating a 6-core or larger CPU, but nowhere near idle.

Ha. RF basically declared my scenario as fake because I removed AVs and do graphics related work in addition to dev :). Whatever. Not worth it to keep arguing with him/her.

They should have known that; I'd say they are either willfully ignorant to get clicks or just plain incompetent.

No reviews exist with a release BIOS yet, but here is an example of the difference it is making just in *newer* Beta BIOS versions.

This is AIDA memory read/write - the newer BIOS has a 50% increase in write speeds:

Y-Cruncher 500M - with the updated BIOS it's faster than a 5800X, with the older BIOS it's slower than a 3600X:

Enough to take it from a loss to a win compiling Firefox vs the 5800X (a 3.5% faster compile time just from the newer BIOS):

Pre-release reviews can be quite a bit off, even if they got their hands on final hardware, as the release firmware may still not be included, since the CPU was probably made months ago. Early firmware may lack optimal boosting and voltage adjustments. I see no reason to rush to judgement yet, let's wait at least for launch day reviews with the release BIOS and firmware. People are still asking us to cut some slack for Zen 3 BIOS and firmware (which released >4 months ago), I think we at least can give Intel until launch day. :rolleyes:

They also completely missed the bus (pun intended) on the 2:1 vs 1:1 memory configuration on Rocket Lake. This would be the same as crippling a Zen chip by making its infinity fabric run at odd ratios aka asynchronously.

See this article.

AT didn't even reveal what motherboard they used.

Basically, that AT review is worthless.

Regardless, I don't put much faith in reviews from Anandtech; there are too many obvious tells that some of their reviewers have no idea what they are doing. I looked at this workstation board review the other day; look at the fourth paragraph under "Memory Testing: Limited QVL". The reviewer clearly doesn't understand what JEDEC SPD is, and assumes XMP will work on workstation boards. Such glaring incompetence calls the entire review into question.

edc.intel.com/content/www/us/en/products/performance/benchmarks/events/

Pre-production Rocket Lake chip and board ROMs. Probably less than ideal performance.

One-version-old BIOS on the AMD board, but current AGESA, no performance benefits till Jan.

Slow RAM on both sides, 3200, 4x 8GB. I would like to see CAS details on these disclosures; while it could be the same kit, it's a pretty big target for optimizations.

Looks like an honest representation of performance as it stood in Dec. With a bit stricter power capping deployed on the AMD system.

I look forward to the mixed reviews in 15 days.

As for Anand running things at 3200, Intel did as well.

Very interesting that default at 3200 is 1:2, that's... bad. AMD can run 1:1 at 3600 without issue, and on good chips at 4000.

If the config is noted, the config is noted, though it does seem a bit sloppy for a review not to note that as the reason it was lagging behind, and not to test 2933 at 1:1 as well.

Intel can undoubtedly run 1:1 on most RKL chips as well, and the Intel IMC in general has been able to greatly outperform AMD's Infinity Fabric, which is in no small way responsible for the longevity of Skylake.

In fact, the 11900K reportedly is by default 1:1 at 3200. The lower SKUs are the ones that will reportedly run 1:1 only at 2933.

As far as AT, they could have improved their RKL results by simply running 2933 at 1:1. That is fully supported on all the RKL SKUs.

It looks like 3200 at 2:1 is an artificial limit, maybe Intel will get smart and remove that in the final BIOS updates, but we'll have to see when real reviews with final production BIOS start to come out. Also, reviews where the motherboard and complete config are known. Anand's secret benchmark platforms in this case and general lack of configuration information for their tests leave a bad taste.
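
For reference, the clock arithmetic behind the 1:1 versus 2:1 discussion can be sketched roughly as follows. This is only an illustration, assuming the ratio means memory clock to memory-controller clock and that the DDR4 data rate is twice the memory clock; the function name `controller_clock_mhz` is made up for this example and is not from any tool mentioned in the thread.

```python
def controller_clock_mhz(data_rate_mts: float, ratio: int) -> float:
    """Memory-controller clock for a DDR data rate and a memory:controller ratio.

    Assumption: ratio=1 means 1:1 (controller matches the memory clock),
    ratio=2 means 2:1 (controller runs at half the memory clock).
    """
    memory_clock = data_rate_mts / 2  # DDR transfers data twice per clock
    return memory_clock / ratio


# The three configurations discussed in the comments above.
for rate, ratio in [(3200, 1), (3200, 2), (2933, 1)]:
    clock = controller_clock_mhz(rate, ratio)
    print(f"DDR4-{rate} at {ratio}:1 -> controller at {clock:.1f} MHz")
```

Under these assumptions, DDR4-2933 at 1:1 keeps the controller clocked well above DDR4-3200 at 2:1, which is the reasoning behind suggesting 2933 at 1:1 for the lower SKUs.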

www.anandtech.com/show/16549/rocket-lake-redux-0x34-microcode-offers-small-performance-gains-on-core-i711700k

"In our CPU performance tests, we are seeing an average +1.8% performance gain across all workloads. This varies between a -4.3% loss in some workloads (Handbrake) up to a +9.7% gain (Compilation). SPEC2017 single thread saw a bigger than average +3.4% gain, however SPEC2017 multi thread saw a -2.1% adjustment, making it slower."

They also had a 10% decrease in max power consumption.

Those variations in those workloads are huge. They are all over the board on performance just from a BIOS revision. It basically means their original "review" was meaningless, and their revised numbers using a newer BETA BIOS are also meaningless.
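
To put the quoted figures side by side, here is a small sketch that uses only the three numbers from the AnandTech quote above; the dictionary keys are just labels chosen for this example, and the comparison to the average is one rough way of looking at it, not a statistical test.

```python
# Percent changes quoted from the AnandTech microcode follow-up above.
quoted = {
    "average": 1.8,
    "worst (Handbrake)": -4.3,
    "best (Compilation)": 9.7,
}

# Distance between the best and worst workload, in percentage points.
spread = quoted["best (Compilation)"] - quoted["worst (Handbrake)"]
ratio_to_average = spread / quoted["average"]

print(f"best-to-worst spread: {spread:.1f} percentage points")
print(f"spread relative to the average gain: {ratio_to_average:.1f}x")
```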

AT's approach to reviews is basically to use OEM settings on DIY hardware, their logic being that the majority of PC buyers buy OEM.

However, if you are looking at an OEM setup you should be looking at PCWorld / CNET and so on - they have comprehensive reviews, and the performance of an OEM system (surprise) has little to do with what brand of CPU you buy. It has to do with how the OEM set their rig up. Single channel RAM? Soldered RAM? Nerfed / low power GPU? Nerfed BIOS? Super slow DDR4-2400 or 2133? They do all of these things and it affects OEM setups far, far more than any specific hardware selection.

In contradiction to AT, and more relevant for DIY / enthusiasts, we've also got stuff like this - which gives some idea of what a typical enthusiast / DIY build can do. This is not OC, this is memory tweaked on both platforms with DDR4-3600 / 3800 CL 14:

Microcenter:

That 10700K at $249 is very tempting though, as is the 10900KF at $329.