Wednesday, December 12th 2018

Intel Xe Kicks the Door Open to Challenge the GeForce-Radeon Duopoly

Intel's discrete graphics card for PC enthusiasts is real. Intel won't just address the pro-graphics and accelerated-compute markets, but also consumer graphics, challenging the duopoly of NVIDIA GeForce and AMD Radeon. Scheduled for 2020, the new Intel Xᵉ is a family of discrete GPUs targeting the client segment (consumer graphics) as well as the enterprise segment (pro-graphics and compute).

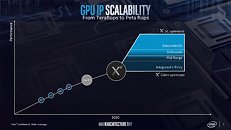

As for performance, we speculate that the first Xᵉ products could span a vast lineup of ASICs, starting in the single-digit TFLOP/s range for the client-segment GPU, looking purely at a nondescript performance-time graph presented by Intel. This graph depicts performance doubling linearly over time up to Gen9, then increasing to Intel's own stated 1 TFLOP/s for the Gen11 iGPU core in 2019 (a full four years following Gen9). There is a spectrum of GPUs going from the entry-level client segment all the way up to the mid-range and enthusiast segments (Intel finally used the E-word). For the enterprise-segment GPU, however, we see a sharp rise in performance over Gen11, which could well be in the double- to triple-digit TFLOP/s range. Intel is targeting the compute data-center market dominated by NVIDIA Tesla and AMD Radeon Instinct. This graph perfectly explains why Intel wants a discrete GPU now: it wants to go after the lucrative compute data-center segment, but wants to give the dividends of its R&D to gamers, too.

Source: AnandTech

72 Comments on Intel Xe Kicks the Door Open to Challenge the GeForce-Radeon Duopoly

More power to them! We need more competitors. I might just fall into their camp myself.

Since I'm already eyeing a NUC, it'd be great to see it in one of those eventually.

We all know how well Tflops translate to performance between different architectures... (NOT). This tells us just about nothing. Still, nice to see the confirmation that they're pushing discrete GPU now. Never thought it'd come to this honestly, and a lot needs to happen before I trust Intel to provide a solid high end GPU...

Or.... Intel being Intel, they follow NVIDIA's lead and set the pricing stupidly high, too. (They do with their CPUs, so why not GPUs?) Shrug.

Either way, I don't think I'm going to replace my Vega 64 any time soon.

forum.wordreference.com/threads/deja-moo.661730/

en.wikipedia.org/wiki/Larrabee_(microarchitecture)

Why would anything good ever happen?

This will no doubt turn into the backend for your subscription cloud-based gaming service, so you can play the latest console ports on your NUC (there you go, consumer) with your thin-client OS.

The rest... :confused:

I'm not sure what you're saying, please elaborate?

LOL

And let's not kid ourselves, a three player market is no better than a two player one. We just get to be a bit more picky.

Quick! Intel! Put me on your marketing team. I'm going to lead you down the path that rocks!