Monday, August 31st 2020

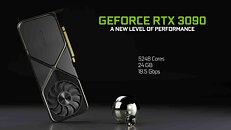

Performance Slide of RTX 3090 Ampere Leaks, 100% RTX Performance Gain Over Turing

NVIDIA's performance expectations for the upcoming GeForce RTX 3090 "Ampere" flagship graphics card point to a massive generation-over-generation RTX performance gain. Measured at 4K UHD with DLSS enabled on both cards, the RTX 3090 is shown offering a 100% performance gain over the RTX 2080 Ti in "Minecraft RTX," a gain of more than 100% in "Control," and a gain of close to 80% in "Wolfenstein: Youngblood." NVIDIA's GeForce "Ampere" architecture introduces second generation RTX, according to leaked Gainward spec sheets. This could entail not just more ray-tracing hardware, but also higher IPC for the RT cores. The spec sheets also refer to third generation tensor cores, which could enhance DLSS performance.

Source: yuten0x (Twitter)

131 Comments on Performance Slide of RTX 3090 Ampere Leaks, 100% RTX Performance Gain Over Turing

It's weird because consoles don't have a DDR4 vs GDDR6 split. But when a game gets ported over to the PC, the developers will have to make a decision over what stays in DDR4 and what stays in VRAM. Most people will have 8GB of DDR4, maybe 16GB, in addition to the 8GB+ of VRAM on a typical PC.

Your assumption that everything will be stored in VRAM and VRAM only is... strange and wacky. I don't really know how to argue against it aside from saying "no. It doesn't work like that".

NDA is in effect, but it stands to reason that most reviewers (many are hinting at this) have samples with retail-ready (or very near ready) drivers. I would be willing to bet @W1zzard has at least one that will be reviewed on release.

You mean RTRT? And almost everyone does, which is why AMD has jumped on the RTRT bandwagon with their new GPUs, which are said to have full RTRT support for both consoles and PC.

Opinion that most disagree with. Don't like it? Don't buy it.

That would seem logical. NVidia likes to play with their naming conventions. They always have.

Fully disagree with this. My 2080 has been worth the money spent. The non-RTRT performance has been exceptional over the 1080 I had before. The RTRT features have been a sight to behold. Quake2RTX was just amazing. Everything else has been beautiful. Even if non-RTRT performance is only 50% better on a per-tier basis, as long as the prices are reasonable, the upgrade will be worth it.

As for "lesser" models, I believe you mean the likes of the RTX xx70 and xx60 series. If so, it may be premature to draw that conclusion. In fact, I feel it is usually the mid-range that gets the bigger bump in performance, because this is where it gets very competitive and where most cards are sold. Consider the last gen, where the RTX 2070 had a huge jump in performance over the GTX 1070 it replaced. The jump was significant enough to bring the RTX 2070 close to the performance of a GTX 1080 Ti. The subsequent Super refresh basically allowed it to outperform the GTX 1080 Ti in almost all games. So if Nvidia is to introduce the RTX 3070 with around the same number of CUDA cores as the RTX 2080, the improved clock speed and IPC should give it a significant boost in performance, along with the improved RT and Tensor cores.

I don't own an NVidia GPU, but the DLSS samples I've been seeing weren't as impressive as VRS samples. NVidia and Intel (iGPUs) both implement VRS, so it'd be a much more widespread and widely accepted technique to reduce the resolution (ish) and minimize GPU compute, while still providing a sharper image where the player is likely to look.

AMD will likely provide VRS in the near future. It's implemented in PS5 and XBox SeX (XBSX? What's the correct shortcut for this console?? I don't want to be saying "Series X" all the time, that's too long)

-----------

In any case, methods like VRS will make all computations (including raytracing) easier for GPUs to calculate. As long as the 2x2 or 4x4 regions are carefully selected, the player won't even notice. (Ex: fast moving objects can be shaded at 4x4 and probably be off the screen before the player notices that they were rendered at a lower graphical setting, especially if everything else in the world was at full 4K or 1x1 rate).

Many game developers are pushing VRS, due to PS5 / XBox support.
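To put a rough number on the VRS savings, here is a back-of-the-envelope sketch. It assumes one pixel-shader invocation per coarse pixel (1x1, 2x2 or 4x4), and the screen split used below (60% / 30% / 10%) is made up purely for illustration; the function name `shading_invocations` is hypothetical and not part of any graphics API.

```python
# Rough estimate of pixel-shader invocations with variable rate shading (VRS).
# Assumption: one shader invocation covers size*size pixels of a coarse pixel.
# The coverage fractions below are invented, not measured from any game.

def shading_invocations(width, height, coverage):
    """coverage maps a coarse-pixel size (1, 2 or 4) to the fraction of the
    screen that is shaded at that rate. Fractions must sum to 1."""
    if abs(sum(coverage.values()) - 1.0) > 1e-9:
        raise ValueError("coverage fractions must sum to 1")
    pixels = width * height
    return sum(pixels * frac / (size * size) for size, frac in coverage.items())

# Everything shaded at full 1x1 rate on a 4K frame.
full = shading_invocations(3840, 2160, {1: 1.0})

# Hypothetical split: 60% at 1x1, 30% at 2x2, 10% at 4x4.
mixed = shading_invocations(3840, 2160, {1: 0.6, 2: 0.3, 4: 0.1})

print(f"full rate: {full:,.0f} invocations")
print(f"mixed VRS: {mixed:,.0f} invocations ({mixed / full:.1%} of full rate)")
```

This only counts shader invocations. It says nothing about image quality, which depends entirely on how well the coarse regions are chosen.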

It seems highly unlikely that tensor cores for DLSS would be widely implemented in anything aside from NVidia's machine-learning based GPUs. DLSS is a solution looking for a problem: NVidia knows they have tensor cores and they want the GPU to use them somehow. But they don't really make the image look much better, or save much compute. (Those deep learning cores need a heck of a lot of multiplications to work, and these are special FP16 multiplications that are only accelerated on the tensor cores.)

Epic not using RT in Unreal 5 is quite telling.

I bet that very few have memories from the era of the Nvidia TNT, the TNT2 and so on and on and on, and today GTX and soon RTX.

How soon are we going to see the VTX? :p

For DLSS 1.0, Nvidia had to train on a per-title basis. Past a certain point that was no longer necessary, and now we have DLSS 2.0. I've even heard DLSS 3.0 may do away with the API-specific calls, but I'll believe that when I see it (I don't doubt that would be the ideal implementation, but I have no idea how close or far Nvidia is from it).

The fact that they didn't need any "RTRT" yet deliver light effects that impressive was what I was referring to.