Thursday, October 13th 2016

Industry Leaders Join Forces to Promote New High-Performance Interconnect

A group of leading technology companies today announced the Gen-Z Consortium, an industry alliance working to create and commercialize a new scalable computing interconnect and protocol. This flexible, high-performance memory semantic fabric provides a peer-to-peer interconnect that easily accesses large volumes of data while lowering costs and avoiding today's bottlenecks. The alliance members include AMD, ARM, Cavium Inc., Cray, Dell EMC, Hewlett Packard Enterprise (HPE), Huawei, IBM, IDT, Lenovo, Mellanox Technologies, Micron, Microsemi, Red Hat, Samsung, Seagate, SK hynix, Western Digital Corporation, and Xilinx.

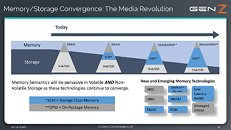

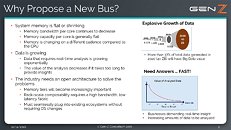

Modern computer systems have been built around the assumption that storage is slow, persistent and reliable, while data in memory is fast but volatile. As new storage class memory technologies emerge that drive the convergence of storage and memory attributes, the programmatic and architectural assumptions that have worked in the past are no longer optimal. The challenges associated with explosive data growth, real-time application demands, the emergence of low latency storage class memory, and demand for rack scale resource pools require a new approach to data access.

Gen-Z provides the following benefits:

- High Bandwidth, Low Latency: Simplified interface based on memory semantics, scalable from tens to several hundred GB/s of bandwidth, with sub-100 ns load-to-use memory latency.

- Advanced Workloads and Technologies: Enables data centric computing with scalable memory pools and resources for real-time analytics and in-memory applications. Accelerates new memory and storage innovation.

- Compatible and Economical: Highly software compatible with no required changes to the operating system. Scales from simple, low cost connectivity to highly capable, rack scale interconnect.

For more information, visit this page.

13 Comments on Industry Leaders Join Forces to Promote New High-Performance Interconnect

This basically automatically means no Intel or NVIDIA lolz XD

Intel is trying to take over networking and interconnects... to control every subsystem.

the top end xeons have 102 GB/s memory bandwidth, a mid/high end server connected to a san might have 4 16Gb fiber ports for roughly 9GB/s storage and that is not cheap.

in local storage terms. to achieve memory like speeds you'd need ~95 sas12 ssds, or ~40 m2 drives, and enough pcie lanes to support this.
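The drive-count estimate above can be sketched as a quick calculation. This is only a rough illustration: the 102 GB/s target comes from the comment, while the per-drive sustained rates (about 1,075 MB/s for a SAS 12Gb/s SSD and about 2,500 MB/s for an M.2 drive) are assumptions picked to land near the commenter's figures, not measured values. The function name `drives_needed` is hypothetical.

```python
def drives_needed(target_mb_s: int, per_drive_mb_s: int) -> int:
    """Smallest number of drives whose combined throughput meets the target.

    Uses integer ceiling division and ignores controller, PCIe-lane,
    and striping overhead, so real systems would need more drives.
    """
    return -(-target_mb_s // per_drive_mb_s)


# Target taken from the comment: ~102 GB/s of memory bandwidth.
TARGET_MB_S = 102_000

# Assumed sustained per-drive throughput (illustrative, not measured).
SAS12_SSD_MB_S = 1_075
M2_DRIVE_MB_S = 2_500

print("SAS 12Gb/s SSDs:", drives_needed(TARGET_MB_S, SAS12_SSD_MB_S))
print("M.2 drives:     ", drives_needed(TARGET_MB_S, M2_DRIVE_MB_S))
```

Under these assumed rates the counts come out close to the ~95 and ~40 quoted above; changing the per-drive figures shifts the result proportionally.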