Monday, December 28th 2020

Intel Core i7-11700K "Rocket Lake" CPU Outperforms AMD Ryzen 9 5950X in Single-Core Tests

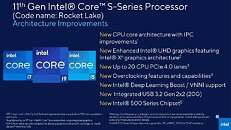

Intel's Rocket Lake-S platform is scheduled to arrive early next year, which is just a few days away. The Rocket Lake lineup will be Intel's 11th generation of Core desktop CPUs, and the platform is expected to debut with Intel's newest Cypress Cove core design. Thanks to a Geekbench 5 submission, we have the latest information about the performance of the upcoming Intel Core i7-11700K 8C/16T processor. Based on the Cypress Cove core, the CPU allegedly brings a double-digit IPC increase, according to Intel.

In the single-core test, the CPU scored 1807 points, while the multi-core score is 10673 points. The CPU ran at a base clock of 3.6 GHz, while the boost frequency is fixed at 5.0 GHz. Compared to the previous, 10th generation Intel Core i7-10700K, which scores 1349 points single-core and 8973 points multi-core, the Rocket Lake CPU puts out a 34% higher single-core and a 19% higher multi-core score. As for the comparison with AMD's offerings, the highest-end Ryzen 9 5950X is about 7.5% slower in the single-core result, and of course much faster in the multi-core result thanks to double the number of cores.
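The generational percentages quoted above can be reproduced from the four scores in the text. The following is a minimal sketch; the function name `uplift_percent` is an illustrative choice and not part of Geekbench or any other tool.

```python
def uplift_percent(new_score: float, old_score: float) -> float:
    """Return how much higher new_score is than old_score, in percent."""
    return (new_score / old_score - 1.0) * 100.0


# Scores as reported in the article (Geekbench 5 points).
I7_11700K = {"single": 1807, "multi": 10673}
I7_10700K = {"single": 1349, "multi": 8973}

single = uplift_percent(I7_11700K["single"], I7_10700K["single"])
multi = uplift_percent(I7_11700K["multi"], I7_10700K["multi"])

print(f"single-core uplift: {single:.1f}%")
print(f"multi-core uplift:  {multi:.1f}%")
```

Rounded to whole numbers, the two results match the 34% and 19% figures in the article.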

Sources:

Leakbench, via VideoCardz

114 Comments on Intel Core i7-11700K "Rocket Lake" CPU Outperforms AMD Ryzen 9 5950X in Single-Core Tests

This is unacceptable: requirements of 600W to 1000W power supplies and water cooling, while Apple makes silicon (Apple M1) that consumes 5 to 20W of power, passively cooled in some laptops, and can edit even 8K video files.

I am a video editor and I never thought this would be possible, but I might ditch Windows for Mac if the trend is water cooling 200W CPUs and paying close to $1000 for a video card (this is the price for a 3060 Ti in my country).

I don't understand how Apple made that chip so powerful while consuming so little power, and that 8K editing from the Canon R5 is mind-blowing; you would need a Threadripper and an RTX 2080 Ti to work with those files on PC.

1st one is my job, 2nd personal car, 3rd is my boat.

I'll pass thank you very much.

My wallet isn't into getting raped by Intel every chance they get to for a piece that, in the end still does the same basic thing. I've owned both and there is nothing about Intel that would make me want to go exclusive with them or AMD for that fact aside from price vs what you get from each make.

Architectural advancements are certainly possible today.

All current microarchitectures supporting x86 translate it into micro-operations. There is still no faster generic ISA than x86.

Yes, it's impressive what they've accomplished, but it's unlikely we'll ever see anything quite like it using any other OS, or at least not one that has support for a much wider hardware ecosystem.

iOS has outperformed Android for years, despite technically inferior hardware, so nothing really new here.

However, Apple's new hardware isn't likely to perform well in a lot of tasks. Luckily for Apple, there are so far no means of testing this, as the platform is too new and there aren't enough benchmarks or even enough software out there to show this.

X86/x64 CPUs are actually quite inefficient at what they do, but they are also capable of doing things other processors can't, due to the way they were designed. There are tradeoffs depending on what your needs are and this is something a lot of people don't seem to quite understand.

Let's see how well Apple's new SoCs will handle new file formats when it comes to video. An old-fashioned x64 chip will be able to work with them, albeit slowly, unless you have a very pricey, hot, multi-core chip as you pointed out. I bet Apple will tell you to buy their new computer that will support the new file format, as that's how ARM-based hardware works: it needs dedicated co-processors that recognise the new file format to some degree to allow you to use it. This obviously doesn't apply to everything, but very much to video files.

Too old? I don't think you understand the difference here. Please see above.

I don’t know about New Zealand, but here in Europe it is very difficult to find one at a decent price.

Case in point: AMD Zen 3, Intel Skylake, Intel Atom, PS4, PS5, XBox One X, and XBox Series X all share the same ISA. They all have different levels of performance: with the newer chips performing better and better. Even with the same ISA, CPU-engineers are able to make better CPUs.

-----

And before someone mentions decoder width... sure... I admit that might be a problem. But that's "might be a problem", and not "proven to be a problem" yet. POWER9 pushed 6-uops / clock tick and still lost to Skylake's 4-uops/clock tick in typical code. Apple M1 is 8-uops/clock tick, so that's what starts to bring up the decoder width issue again.

Decades have passed, and nothing has yet proven to be more versatile and performant than x86, even though many have tried to replace it, like Itanium.

The same happens in the programming world too; there is still nothing that can match good old C, yet people try to overengineer replacements… (cough)Rust(ahem) :rolleyes:

Virtually all code will scale towards cache misses and branch predictions, unless it relies heavily on SIMD. No ISA that I'm aware of has been able to solve this so far.

I have no issue with the possibility of increasing the decoder width or even adding more execution ports. But I question how likely it is, if Cypress Cove is basically a backport of Sunny Cove, since these kinds of changes usually require a total overhaul of the cache, register files and everything on the front-end.

Wouldn't it be more likely to backport something from the execution side from e.g. Sapphire Rapids, or to simply add more execution units on existing execution ports? (like one extra MUL unit?)

x86 requires a byte-by-byte decoder, because you have 2-byte, 3-byte, 4-byte... 15-byte instructions (some of which are macro-op fused and/or micro-op split). ARM standardized upon 4-byte instructions with an occasional 8-byte macro-op fused. That means if you want to perform 4-wide decoding (and assume an average of 4-bytes per instruction), you need 64-parallel decoders: one for every byte (byte0, byte1, byte2) of the cache line.

ARM, on the other hand, is always 4 bytes or 8 bytes at a time (in the case of macro-op fused operations). Which means for a 64-byte decoder, ARM only needs 16 parallel decoders, knowing there are no 2-byte or 3-byte instructions that could be "in-between". Just hypothetically speaking of course, I dunno really how these things are organized.
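The counting argument in the two paragraphs above can be sketched as a toy model. It assumes a 64-byte fetch window and only counts the byte offsets where an instruction could begin; it is not a description of how any real decoder is built, and the function names and the example instruction lengths are hypothetical.

```python
FETCH_WINDOW_BYTES = 64  # assumed window size, taken from the 64-byte figure above


def candidate_starts_variable(window_bytes: int) -> list[int]:
    """Variable-length encoding: an instruction may begin at any byte offset."""
    return list(range(window_bytes))


def candidate_starts_fixed(window_bytes: int, instr_bytes: int = 4) -> list[int]:
    """Fixed-length encoding: instructions begin only at aligned offsets."""
    return list(range(0, window_bytes, instr_bytes))


def actual_starts(lengths: list[int]) -> list[int]:
    """Find the real start offsets for a sequence of instruction lengths.

    Each start depends on the lengths of all earlier instructions, which is
    why a variable-length decoder cannot know the boundaries up front.
    """
    starts, offset = [], 0
    for length in lengths:
        starts.append(offset)
        offset += length
    return starts


x86_like = candidate_starts_variable(FETCH_WINDOW_BYTES)
arm_like = candidate_starts_fixed(FETCH_WINDOW_BYTES)
print(len(x86_like), len(arm_like))

# Hypothetical mix of instruction lengths, for illustration only.
example = actual_starts([2, 3, 4, 15, 1])
print(example)
```

In this model the variable-length case has 64 possible start offsets against 16 for the fixed 4-byte case, which is the 64-versus-16 comparison made above.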

Anyway: Apple M1 shot a broadside at the x86 camp with their 8-wide decoder. I do think it's relevant to bring up. However, ARM Neoverse is still only 4-wide decoding. It hasn't really been proven yet that an ultra-wide decoder (like Apple's M1) is really the best path forward.