Wednesday, June 12th 2019

NVIDIA's SUPER Tease Rumored to Translate Into an Entire Lineup Shift Upwards for Turing

NVIDIA's SUPER teaser hasn't crystallized into something physical as of now, but we know it's coming - NVIDIA themselves saw to it that our (singularly) collective minds would be buzzing about what that teaser meant, looking to steal some thunder from AMD's E3 showing. Now, industry chatter seems to be coalescing around one reading of that teaser: an entire lineup upgrade for Turing products, with NVIDIA pulling their chips up one rung of the performance ladder across their entire lineup.

Apparently, NVIDIA will be looking to increase performance across the board by shuffling their chips downward whilst keeping the current pricing structure. This means that NVIDIA's TU106 chip, which powers their RTX 2070 graphics card, will now be powering the RTX 2060 SUPER (with a reported core count of 2176 CUDA cores). The TU104 chip, which powers the current RTX 2080, will in the meantime be powering the SUPER version of the RTX 2070 (a reported 2560 CUDA cores are expected to be onboard), and the TU102 chip, which powers their top-of-the-line RTX 2080 Ti, will be brought down to the RTX 2080 SUPER (specs place this at 8 GB GDDR6 VRAM and 3072 CUDA cores). This paves the way for an even more powerful SKU in the RTX 2080 Ti SUPER, which should be launched at a later date. Rumor has it that the RTX 2080 Ti SUPER will feature an unlocked chip which could be allowed to convert up to 300 W into graphics horsepower, so that's something to keep an eye - and a power meter - on, for sure. Less defined talks suggest that NVIDIA will be introducing an RTX 2070 Ti SUPER equivalent with a new chip as well.

This means that NVIDIA will be increasing performance by an entire tier across their Turing lineup, thus bringing improved RTX performance to lower pricing brackets than could be achieved with their original 20-series lineup. Industry sources (independently verified) have put it forward that NVIDIA plans to announce - and perhaps introduce - some of its SUPER GPUs as soon as next week.

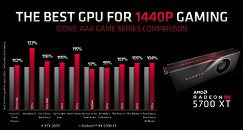

Should these new SKUs dethrone NVIDIA's current Turing series from their current pricing positions, and increase performance across the board, AMD's Navi may find itself thrown into a chaotic market that it was never meant to be in - the RX 5700 XT at $449 delivers performance that's on par with or slightly higher than NVIDIA's current RTX 2070, but the SUPER version seems to pack in just enough extra cores to offset that performance difference and then some, whilst also offering raytracing.

Granted, NVIDIA's TU104 chip powering the RTX 2080 does feature a grand 545 mm² area, whilst AMD's RX 5700 XT makes do with less than half that at 251 mm² - barring different wafer pricing for the newer 7 nm technology employed by AMD's Navi, this means that AMD's dies are cheaper to produce than NVIDIA's, and a price correction for AMD's lineup should be pretty straightforward whilst allowing AMD to keep healthy margins.

Sources:

WCCFTech, Videocardz

126 Comments on NVIDIA's SUPER Tease Rumored to Translate Into an Entire Lineup Shift Upwards for Turing

If it is the first one, then I'm sorry. If it was the second one, then whether or not something else came out later doesn't affect your ability to use the card. You still get the same "value" out of the card as you got before the new one came out.

I really hope Nvidia is flushing their non-SUPER chips out of the market, because 12 GPUs is just ridiculous.

EDIT: Forgot about the rumored 1650 Ti, so that's at least 13 GPUs to keep a hold of! Pray for @W1zzard, those GPU tests are not going to be fun...

So the 'premium' product is still up there on its number one halo spot. The rest is not 'premium' in the stack, an x80 is nothing special in the usual line of things. The price is special, sure .... :D

The value of every frame per second the card can deliver is now cut in half because the card is now worth half as much as it was worth before.

The value (ie what the card could be sold to someone else for) has now decreased and their "investment" is going to return half as much as it could have if they had sold it before these cards existed.

That's the "value" most would be upset about losing.

Sorry, but for many it's actually a bit of both: we buy the card to play games or whatnot, but we also take into account the resale value of the card and how long the card can last without losing too much of its value for resale.

The process has allowed me to upgrade almost yearly and lose very little value in my parts over the years.

This move will be the first time I will most likely have to spend double what I normally pay to upgrade, due to the quick devaluation of the current 20 series.

It is a big deal to some of us for more than "clout" reasons.

If you buy a new card at x dollars every year, and sell the old one for 67% of the price (which is optimistic), after three years you've spent 1.67x the original card price (probably >2x if we account for shipping), for very minimal upgrades. In general you would be much better off buying one or two tiers up and keeping it for three years.
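The arithmetic in that comment can be checked with a rough sketch. It assumes three purchases at a constant price, with each old card sold when its replacement is bought and the last card kept; the function name and parameters are made up for illustration, and shipping and fees are ignored.

```python
def net_upgrade_cost(price, resale_fraction, purchases):
    """Net spend after `purchases` consecutive cards at the same price.

    Assumption: every card except the last one is resold for
    `resale_fraction` of its purchase price; the last card is kept.
    """
    spent = price * purchases
    recovered = price * resale_fraction * (purchases - 1)
    return spent - recovered


# Price normalised to 1.0, so the result reads as a multiple of "x".
print(f"{net_upgrade_cost(1.0, 0.67, 3):.2f}x")   # resale at exactly 67%
print(f"{net_upgrade_cost(1.0, 2 / 3, 3):.2f}x")  # resale at two thirds
```

With a resale fraction of exactly 0.67 the total comes to 1.66x; the quoted 1.67x matches a resale value of two thirds.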

My last investment was about 1500 for my two 1080 Tis. I sold them for 1250 two years later, took that 1250, got a base 2080 Ti and watercooled it.

All in all I only "spent" about 250 dollars for 2 years' worth of top end gaming.

Now I'll be lucky to clear 700, and then I'll be reinvesting another 1200 or more, meaning I'll have spent over 500 for 6 months or less of similar top end gaming.

You see the issue I'm facing? If I were to upgrade (not saying I would), the "value" of my setup is absolutely trashed by this.

Also, by purchasing a top end GPU yearly, you should never expect any sort of return and always expect to lose your ass.

The only thing that will force my hand to upgrade at this point is HDMI 2.1 support, so if these cards have it I will upgrade; if not, I'll get to hold out for the next gen. As someone with a new OLED TV that has HDMI 2.1 and no sources that support it currently, the new gen consoles and a video card that offers it are the only things I'm looking forward to right now.

A whole tier bump for the same price, when was the last time you've seen that from greedy green?

Something is going on... hm... what could it be, hehehe...

It MUST BE. Else we'd need to call BS on the whole "pretty cool margins".

That's going to be:

GeForce RTX2080 Super Super JetStream !? :D

www.palit.com/palit/vgapro.php?id=3048&lang=en

Yields were initially a little "sub-optimal" leading Nvidia to create the "A" and "non-A" versions of the chips.

Regardless, we're getting cheaper Turing now.

Hell, till I see benchmarks I'm not even sure we're back into sane territory.