Friday, December 13th 2019

Ray Tracing and Variable-Rate Shading Design Goals for AMD RDNA2

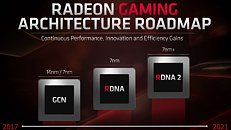

Hardware-accelerated ray tracing and variable-rate shading will be the design focal points for AMD's next-generation RDNA2 graphics architecture. Microsoft's reveal of its Xbox Series X console attributed both features to AMD's "next generation RDNA" architecture (which, logically, is RDNA2). The Xbox Series X uses a semi-custom SoC that features CPU cores based on the "Zen 2" microarchitecture and a GPU based on RDNA2. It's highly likely that the SoC will be fabricated on TSMC's 7 nm EUV node, as the RDNA2 graphics architecture is optimized for it. This would mean an optical shrink of "Zen 2" to 7 nm EUV. Besides the SoC that powers the Xbox Series X, AMD is expected to leverage 7 nm EUV for its RDNA2 discrete GPUs and for CPU chiplets based on its "Zen 3" microarchitecture in 2020.

Variable-rate shading (VRS) is an API-level feature that lets GPUs conserve resources by shading certain areas of a scene at a lower rate than others, without a perceptible difference to the viewer. Microsoft developed two tiers of VRS for its DirectX 12 API: tier-1 is currently supported by the NVIDIA "Turing" and Intel Gen11 architectures, while tier-2 is supported by "Turing." The current RDNA architecture doesn't support either tier. Hardware-accelerated ray tracing is the cornerstone of NVIDIA's "Turing" RTX 20-series graphics cards, and AMD is catching up to it. Microsoft has already standardized it on the software side with the DXR (DirectX Raytracing) API. A combination of VRS and dynamic render resolution will be crucial for next-gen consoles to achieve playability at 4K, and even to boast of being 8K-capable.
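As a rough illustration of why lowering the shading rate saves work, the sketch below counts pixel-shader invocations for a 4K frame split into screen-space tiles. It is a simplified model, not the DirectX 12 API: the 16-pixel tile size, the function names, and the "full rate in the center, 2x2 at the edges" policy are all assumptions made for the example.

```python
import math

def shader_invocations(width, height, tile, rate_for_tile):
    """Count pixel-shader invocations for a frame split into square tiles.

    rate_for_tile(tx, ty) returns (rx, ry): one shading result is shared
    by a block of rx x ry pixels, so (1, 1) means full-rate shading.
    """
    total = 0
    for ty in range(math.ceil(height / tile)):
        for tx in range(math.ceil(width / tile)):
            # Clip the tile against the right and bottom frame edges.
            w = min(tile, width - tx * tile)
            h = min(tile, height - ty * tile)
            rx, ry = rate_for_tile(tx, ty)
            total += math.ceil(w / rx) * math.ceil(h / ry)
    return total

# Assumed values for illustration: a 4K frame and 16x16-pixel tiles.
WIDTH, HEIGHT, TILE = 3840, 2160, 16

def full_rate(tx, ty):
    return (1, 1)

def coarse_periphery(tx, ty):
    # Hypothetical policy: full rate for tiles whose center lies in the
    # middle half of the screen on both axes, 2x2 coarse shading elsewhere.
    cx = (tx + 0.5) * TILE / WIDTH
    cy = (ty + 0.5) * TILE / HEIGHT
    central = 0.25 <= cx < 0.75 and 0.25 <= cy < 0.75
    return (1, 1) if central else (2, 2)

baseline = shader_invocations(WIDTH, HEIGHT, TILE, full_rate)
reduced = shader_invocations(WIDTH, HEIGHT, TILE, coarse_periphery)
print(f"full rate: {baseline}, with coarse periphery: {reduced}")
```

The model only counts invocations; real savings depend on how expensive each shaded pixel is and on whether the workload is shader-bound in the first place.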


119 Comments on Ray Tracing and Variable-Rate Shading Design Goals for AMD RDNA2

Wolfenstein II was the first game to implement it, with a minor but measurable performance boost.

UL has a VRS feature test out as part of 3DMark: www.techpowerup.com/261825/ul-benchmarks-outs-3dmark-feature-test-for-variable-rate-shading-tier-2

There are some documents and videos that give a pretty good explanation of how this works:

software.intel.com/en-us/videos/use-variable-rate-shading-vrs-to-improve-the-user-experience-in-real-time-game-engines

developer.nvidia.com/vrworks/graphics/variablerateshading

—-certain fanbois

-braindead logic

RDNA2 is more likely to be a next generation thing, RX 6000-series or whatever its eventual name will be.

Variable-rate shading and ray tracing are things I do not care about at all. The first one reduces image quality. The second one, done properly and not faked as it is with, for example, the RTX library (yes, RTX is a simplified and in many aspects faked form of "ray tracing," and it still kills performance too much), requires drastic changes to graphics rendering overall and still needs tons of performance, which we will not reach for many decades.

...won't be a mainstream thing until it's offered on "all ranges [of GPUs] from low-end to high-end"...

...RT cores wasting die space.

:roll:

Also, don't forget that the RX 5700 was renamed at the "last minute"; some official photos even displayed "RX 690".

Hope it makes sense I am all tired and dizzy :)

And why would that be blurry? It's not an image reconstruction technique.

I'm running an (admittedly top-of-the-line) card from a generation ago (1080 Ti); it's 2 years old. I would upgrade if I were to get a reliable, significant increase in framerates. A new feature that drops framerates? No.

Now, if you were to tell me I could have both, I'd consider it, but I'm not going to buy a card that offers a 10-25% framerate improvement over a 2-year-old card for about 2x the price.

*I'm almost convinced that VRS is the key piece I am hungry for. I've taken a hard look at a couple different samples, and if it works like I've seen, I'd certainly accept the image quality 'drop' for part of the screen, for the framerate improvements they have been touting.

And if that came with RR, meh. I wouldn't complain, but it wouldn't be the reason I buy a new card. Besides, HOW many games support hardware ray tracing so far? 7? 8? Of which I'd actually think about paying for 2 or so?

Hardware support with compressed vector tables to reduce the computational overhead of real time is one. Allow a CPU core or two to work out basic angle-dependent setup info, then hand that off, the same way we got angle-independent anisotropic filtering.

How many games support ray tracing again? Is PhysX hardware-accelerated fluff still in the news? Overburden of tessellation? HairWorks?

Nvidia deserves the flak for what they do, just like AMD deserves so much shit it would take a bulldozer to move it, except it overheated with its "real men" cores.

"Hey, want to one up ourselves with the dumbest naming since Xbox One and Xbox X?"

"Sure, let me hear it, Brain Dead Idiot Employee # 2!"

"Xbox Series X!"

"You did it. You crazy son of a bitch, you did it."

You know how they came up with the hardware specs? They just copied Sony leaks and rumors.

Those features don't even change the way you game.

GIMMICKS! :nutkick:

:roll:

Let's say you have a scene with a nice landscape in the lower half of the screen, and a sky (just a skydome or skybox) in the upper half. You might think that rendering the upper half with far fewer samples would be a good way to optimize away wasteful samples. But the truth is that low-detail areas like skies are very simple to render in the first place, so you will probably end up with a very blurry area and marginal performance savings.
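To put rough numbers on that argument, here is a minimal cost sketch. The per-pixel costs are made up for illustration (sky assumed to be 20 times cheaper to shade than terrain), and `frame_cost` is a hypothetical helper, not a measurement of any real GPU.

```python
def frame_cost(regions):
    """Relative shading cost of a frame.

    regions: list of (screen_fraction, cost_per_pixel, pixels_per_sample).
    pixels_per_sample is 1 for full-rate shading and 4 for 2x2 coarse shading.
    """
    return sum(frac * cost / pps for frac, cost, pps in regions)

# Assumed, illustrative costs: sky is 20x cheaper per pixel than terrain.
SKY_COST, TERRAIN_COST = 1.0, 20.0

# Upper half sky, lower half terrain, everything shaded at full rate.
full = frame_cost([(0.5, SKY_COST, 1), (0.5, TERRAIN_COST, 1)])

# Same frame, but the sky is shaded once per 2x2 block.
vrs_sky = frame_cost([(0.5, SKY_COST, 4), (0.5, TERRAIN_COST, 1)])

saving = 1 - vrs_sky / full
print(f"relative cost {full} -> {vrs_sky}, saving {saving:.1%}")
```

Under these assumptions the sky takes a quarter of the shading work it did before, yet the whole frame gets only a few percent cheaper, because the terrain dominates the cost.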

To make matters worse, this will probably only increase the frame rate variance (if not applied very carefully). If you have a first-person game walking a landscape, looking straight up or down will result in very high frame rates while looking straight forward into an open landscape will give low performance. Even if you don't do any particular fancy LoD algorithms, the GPU is already pretty good at culling off-screen geometry, and I know from experience that trying to optimize away any "unnecessary" detail can actually increase this frame rate variance even more.
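The variance point can be sketched with a toy model as well. The costs and the two camera views are assumptions chosen for illustration, and `view_cost` is a hypothetical helper; the model ignores geometry, culling, and everything else that is not pixel shading.

```python
# Assumed, illustrative per-pixel costs: sky is 20x cheaper than terrain.
SKY_COST, TERRAIN_COST = 1.0, 20.0

def view_cost(sky_fraction, sky_pixels_per_sample=1):
    """Relative shading cost of a view where sky_fraction of the screen is sky.

    sky_pixels_per_sample is 1 for full-rate shading and 4 for 2x2 coarse
    shading applied to the sky only.
    """
    sky = sky_fraction * SKY_COST / sky_pixels_per_sample
    terrain = (1 - sky_fraction) * TERRAIN_COST
    return sky + terrain

LOOKING_UP, LOOKING_FORWARD = 1.0, 0.5  # fraction of the screen that is sky

# Ratio between the most and least expensive view, without and with VRS.
spread_full = view_cost(LOOKING_FORWARD) / view_cost(LOOKING_UP)
spread_vrs = view_cost(LOOKING_FORWARD, 4) / view_cost(LOOKING_UP, 4)
print(f"cost spread between views: {spread_full:.1f}x -> {spread_vrs:.1f}x")
```

In this model the cheap view gets much cheaper while the expensive view barely changes, so the gap between the best and worst frame times widens rather than narrows.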