Wednesday, March 18th 2020

Sony Reveals PS5 Hardware: RDNA2 Raytracing, 16 GB GDDR6, 6 GB/s SSD, 2304 GPU Cores

In a YouTube keynote stream, PlayStation 5 lead system architect Mark Cerny detailed the upcoming entertainment system's hardware. There are three key areas where the company has invested heavily in driving the platform forward by "balancing revolutionary and evolutionary" technologies. A key design focus with PlayStation 5 is storage. Cerny elaborated on how past generations of the PlayStation constrained game developers' art direction: the low bandwidth of optical discs and HDDs, combined with crippling latencies arising from mechanical seeks, resulted in data transfer rates far lower than what the media is capable of in the best-case scenario (reading a block of data from the outermost sectors). An SSD was the most requested hardware feature among game developers during the development of PS5, and Sony responded with something special.

Each PlayStation 5 ships with a PCI-Express 4.0 x4 SSD with a flash controller that has been designed in-house by Sony. The controller features 12 flash channels, and is capable of at least 5.5 GB/s transfer speeds. When you factor in the large gains in access time, Sony expects the SSD to provide a 100x boost in effective storage sub-system performance, resulting in practically no load times. The secret sauce here is that Sony is using its own protocol instead of NVMe, which supports 6 data priority tiers versus 2 on NVMe. The built-in SSD has a capacity of 825 GB, and storage is expandable using external HDDs over USB, or a selection of third-party M.2 NVMe SSDs certified by Sony. PlayStation 4 games can run directly off your external HDD, but PlayStation 5 games have to be transferred from your HDD to the console's main SSD. Past generations of PlayStation implemented ZLib data compression on Blu-ray and HDD media. PlayStation 5 is implementing Kraken, with hardware-accelerated decompression via fixed-function hardware built directly into the main SoC.
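To put the quoted 5.5 GB/s in context, here is a rough back-of-the-envelope sketch. It assumes the standard PCI-Express 4.0 figures (16 GT/s per lane, 128b/130b encoding) and ignores protocol overhead and compression; the function names are made up for illustration and nothing here comes from Sony's presentation beyond the 5.5 GB/s rate and the 16 GB memory size.

```python
# PCIe 4.0 signalling: 16 GT/s per lane with 128b/130b line encoding.
GT_PER_S_PER_LANE = 16.0
ENCODING_EFFICIENCY = 128 / 130


def pcie4_link_gb_per_s(lanes: int) -> float:
    """Peak one-direction link bandwidth in GB/s, before protocol overhead."""
    return GT_PER_S_PER_LANE * ENCODING_EFFICIENCY * lanes / 8


def seconds_to_read(size_gb: float, rate_gb_per_s: float) -> float:
    """Idealised time to stream size_gb at a constant rate (no seeks, no compression)."""
    return size_gb / rate_gb_per_s


link_ceiling = pcie4_link_gb_per_s(lanes=4)   # x4 link, as in the article
fill_time = seconds_to_read(16.0, 5.5)        # 16 GB of memory at the quoted SSD rate

print(f"PCIe 4.0 x4 ceiling: {link_ceiling:.2f} GB/s")
print(f"Time to read 16 GB at 5.5 GB/s: {fill_time:.2f} s")
```

Under these assumptions the quoted raw rate sits comfortably below the link ceiling, and streaming an amount of data equal to the console's entire memory would take on the order of three seconds.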

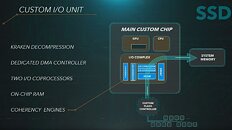

The SoC is where Cerny sounded restrained in what he wanted to disclose. It is a semi-custom chip designed by Sony and AMD, possibly on a 7 nm-class silicon fabrication process. Sony won't specify if it is a monolithic die or an MCM, but there are three building blocks to it: CPU, GPU, and I/O complex. The CPU is based on AMD "Zen 2" x86-64 microarchitecture, and the GPU is based on the company's upcoming RDNA2 graphics architecture.

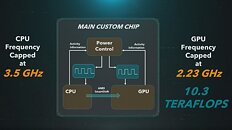

There are eight "Zen 2" CPU cores, although the company didn't mention if SMT is featured. The maximum CPU clock speed is 3.50 GHz. The GPU is a whole different story from the one on the Xbox Series X Velocity Engine semi-custom chip. Sony decided to go with 36 RDNA2 compute units ticking at up to 2.23 GHz engine clock, compared to 52 compute units running at up to 1.825 GHz on the upcoming Xbox. Sony's GPU ends up with up to 10.3 TFLOPs max compute throughput, compared to Microsoft's 12 TFLOPs.
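The headline figures (2304 GPU cores, 10.3 TFLOPs) follow from the compute unit counts and clocks above. The sketch below reproduces the arithmetic, assuming 64 stream processors per compute unit and two FP32 operations per stream processor per clock (one fused multiply-add); both are the usual figures for AMD's recent architectures but were not stated in the presentation, and the helper names are illustrative.

```python
SHADERS_PER_CU = 64        # assumption: 64 stream processors per compute unit
FLOPS_PER_SHADER_CLOCK = 2 # assumption: one fused multiply-add = 2 FP32 ops


def gpu_shaders(compute_units: int) -> int:
    """Total stream processors ("GPU cores") for a given CU count."""
    return compute_units * SHADERS_PER_CU


def peak_tflops(compute_units: int, clock_ghz: float) -> float:
    """Theoretical peak FP32 throughput in TFLOPs at the given clock."""
    return gpu_shaders(compute_units) * FLOPS_PER_SHADER_CLOCK * clock_ghz / 1000


ps5 = peak_tflops(36, 2.23)    # PlayStation 5: 36 CUs at up to 2.23 GHz
xsx = peak_tflops(52, 1.825)   # Xbox Series X: 52 CUs at up to 1.825 GHz

print(f"PS5: {gpu_shaders(36)} shaders, {ps5:.2f} TFLOPs peak")
print(f"XSX: {gpu_shaders(52)} shaders, {xsx:.2f} TFLOPs peak")
```

These are peak numbers at the maximum clock; since the PS5 clock is quoted as "up to" 2.23 GHz, sustained throughput may be lower.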

Sony also shed some "light" on the hardware-accelerated real-time ray-tracing approach AMD is taking with RDNA2. Apparently, each compute unit features a hardware component called "Intersection Engine," with roughly the same function as an RT core on NVIDIA "Turing," which is to calculate the intersection of rays with geometry (such as triangles or polygons) in a scene. This combines with a fairly standardized bounding volume hierarchy (BVH) model to achieve a hybrid of ray-traced elements in an otherwise conventional rasterized 3D scene (pretty much where NVIDIA is right now with RTX). On PlayStation 5, RDNA2's ray-tracing hardware is leveraged for positional audio, global illumination, shadows, reflections, and full ray-tracing.
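For readers unfamiliar with what "calculating the intersection of rays with geometry" involves, below is a minimal software sketch of a ray-triangle test using the well-known Möller-Trumbore algorithm. This is only an illustration of the kind of work such hardware accelerates; it is not a description of how AMD's Intersection Engine is implemented, and all function names are illustrative.

```python
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def ray_triangle(origin: Vec3, direction: Vec3,
                 v0: Vec3, v1: Vec3, v2: Vec3,
                 eps: float = 1e-9) -> Optional[float]:
    """Return the distance t along the ray to the hit point, or None on a miss."""
    edge1 = sub(v1, v0)
    edge2 = sub(v2, v0)
    pvec = cross(direction, edge2)
    det = dot(edge1, pvec)
    if abs(det) < eps:
        return None                      # ray is parallel to the triangle plane
    inv_det = 1.0 / det
    tvec = sub(origin, v0)
    u = dot(tvec, pvec) * inv_det        # first barycentric coordinate
    if u < 0.0 or u > 1.0:
        return None
    qvec = cross(tvec, edge1)
    v = dot(direction, qvec) * inv_det   # second barycentric coordinate
    if v < 0.0 or u + v > 1.0:
        return None
    t = dot(edge2, qvec) * inv_det
    return t if t > eps else None        # ignore hits behind the ray origin


triangle = ((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0))
hit = ray_triangle((0.25, 0.25, 1.0), (0.0, 0.0, -1.0), *triangle)
miss = ray_triangle((2.0, 2.0, 1.0), (0.0, 0.0, -1.0), *triangle)
print(hit, miss)
```

In a real renderer, a BVH is traversed first so that this test only runs against the few triangles whose bounding volumes the ray actually crosses.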

The third key component of the SoC is the I/O complex. This handles all of the chip's I/O, not just with peripherals and video output, but also storage and memory. There are dedicated on-silicon I/O co-processors designed to offload the I/O processing stack from the CPU cores, and reduce latencies at various stages. There's also a certain amount of SRAM that caches transfers between the various components on the I/O complex. The custom chip leverages AMD SmartShift for power management.

PlayStation 5 uses 16 GB of GDDR6 memory. Sony did not mention the memory clock, bandwidth, or even the memory bus width. It did drop some hints about memory management. It appears that PlayStation 5 does not partition memory the way Xbox Series X does, and possibly sticks to the hUMA model of the PlayStation 4 (using a common pool of physical memory for system and video memory).

Lastly, a large chunk of Sony's presentation focused on the next frontier for hardware innovation: positional audio. Sony is investing heavily in positional audio that takes into account the gamer's physical HRTF (head-related transfer function). The company is leveraging the vast amounts of CPU power gained from the upgrade to "Zen 2" to achieve this. We still don't know what a PlayStation 5 console will look like.

Source:

Sony Computer Entertainment (YouTube)

178 Comments on Sony Reveals PS5 Hardware: RDNA2 Raytracing, 16 GB GDDR6, 6 GB/s SSD, 2304 GPU Cores

Beyond that, it's nice that it doesn't thermal throttle, but "it's to keep cooling noise acceptable" is BS - if that was the case, they wouldn't be pushing GPU clocks to 2.25GHz in the first place. Designing for a fixed power target instead of a fixed clock target is perfectly viable, but it is an approach that sacrifices some performance.

I also find it rather telling that they're using SmartShift - while it's a brilliant little piece of tech, it's first and foremost designed for thermally constrained laptops (where the heat dissipation capability of the cooling system is lower than what the hardware can produce if left to run free), so using it here clearly indicates that they expect there to be a need for balancing power within a fixed total budget. In other words, the hardware could do more if it just had better cooling and power delivery.

Well, sure, I didn't mean to say there were no advantages, just that they are mostly tiny and more than counteracted by having a wider GPU with more total resources. Not to mention that the 256-bit PS5 is far more likely to be memory-bound than the 320-bit XSX.

The PS5 has less GPU horsepower, and that power is dependent on avoiding power spikes, so developers will (at least until they become intimately familiar with the system) need to cut down their targets a bit to maintain acceptable performance.

I would think the XOX vs. PS4 Pro is a reasonable analogy (even if the difference between them is bigger than the difference here): the PS4 Pro consistently runs cross-platform games at lower resolutions and detail levels, and often still struggles to match the frame rates of the XOX. Just check out pretty much any of Digital Foundry's excellent comparison videos (Jedi: Fallen Order springs to mind as a stand-out that I watched).

... GDDR6 is still RAM ...

Consoles are generally sold at break-even or at a loss, with profits made on game licencing. Any game sold for PS4 or Xbox One comes with a $10 licence fee to the platform owner (though I believe this is slightly lower for cheap indies etc.). That's why console games are more expensive and the hardware is much cheaper when compared to PC.

As for the PS4 being technologically inferior ... to what? It soundly beat the XBone. PS4 Pro vs. Xbox One X is another story entirely, but you didn't say Pro.

No reason to expect consoles with loads of fast memory, fast 8c16t CPUs, native flash storage and very powerful RT-enabled GPUs to hobble anything for quite a while. Jaguar was crap even back in 2012-13, Zen2 is state of the art - and they haven't even cut clocks much! These consoles are far superior to the average gaming PC in pretty much every respect (remember, the average PC has a 4c8t CPU and a GTX 1060) and will allow for massive growth in the quality of games moving forward, including sorely missed improvements in CPU-bound tasks, audio, physics, AI, etc.

Yeah, I'm really looking forward to an increased focus on audio on both consoles. And given that the processing is done on AMD hardware it wouldn't be too big of a stretch of the imagination to see it implemented on the PC through GPU accelerated audio either. True positional and spatial audio will be a massive boon to immersion for sure.

Yeah, developers need to work hard to avoid power draw spikes to keep clocks consistent and thus avoid this. Hopefully there's at least some leeway for short-term power spikes in the management system.

Care to expand on that? Not that I don't think it will be good, but it will definitely need to be cheaper (even if Sony has a massive mindshare advantage).

Flash isn't cheap, the controller likely isn't either, but it won't be that much more expensive than the competition (which has more flash).

Flash is flash, it costs what it costs. Prices will come down in time, but slowly. The only way to make the controller cheaper without losing performance is moving to a smaller node, which takes time unless you want to pay a premium (which obviously negates any savings).

Judging by what they said they have pushed clocks pretty much as far as they can go - a 10% drop in power for a 2-3% drop in performance was mentioned - so it'll likely still stay quite high even when power limited. 2.1GHz/9.6TFlops is likely entirely sustainable.

The odd size is due to the SSD controller having 12 flash channels instead of the "normal" 8 (or 4 for lower end drives). With flash chip capacities being the same you'll then end up with strange total capacities.

The whole gaming ecosystem has been based on AMD hardware since the current console generation launched in 2013, but the difference is that it's now high-end hardware rather than low-end CPUs and mid-range GPUs. As such it'll push games further and promote propagation of advanced tech like spatial audio and RTRT. This is rather exciting, even if the underlying tech is for the most part known.

I have to say the clock rates of this chip make me rather excited for upcoming AMD GPUs, though. If a console can hit 2.23GHz, PC GPUs should be able to exceed that, at least when OC'd. If the upcoming RDNA 2 GPUs run at 2-2.1GHz stock without being terribly inefficient, that's a big improvement for sure.

And I don't think the PS5 would be too weak or so. Some PS3s and PS4s could be overclocked by a simple firmware push. They did it with some games, raising the clocks. Yes, it generated more heat and thus more noise, but I'm sure there's plenty of headroom left in those things.

The XSX is sporting a super beefy cooling solution, but because of the fixed clocks, its fan will probably have to ramp up and down accordingly (shouldn't be too loud with a 130mm fan though).

Nvidia had something somewhat similar in laptops, it's called platform boost or something like that, it's basically a way of managing clocks by taking into account the total power output from both CPU and GPU and not independently. Because you can have for instance a situation when the CPU isn't doing much but the GPU is pegged, the total power output will be low so it makes sense to give the GPU more power headroom.

All in all PS5's custom chip seems much more advanced.

I still think this will be a good console, and as last time around I'll be getting both, but the XSX will likely be where I play most console games unless the 3D audio stuff Sony is doing is radically better than MS' competing initiative (it also has dedicated 3D audio hardware, after all).

I wasn't talking about combat movement, but general in-game movement - in third-person titles specifically. And the example brought up by the post you responded to was specifically Uncharted, after all. Also, is this when I'm supposed to say "git gud"? Or does that just apply to hardcore PC games? Practice does make perfect, after all, and as I said, after an initial acclimation period I got perfectly adequately used to aiming with a gamepad in the games that required it. But then again, as I said, I don't play competitive shooters, and I definitely don't play them on consoles.

All will depend on the content available at launch. They have to push the hardware to its limits and offer the most exciting visual experience ever.

If not... 7 years of lower-than-expected sales will follow.

XBOX will have approx. 20-25% faster GFX

ALSO XBOX can hold 3.8GHz continuously while PS5 boosts up to 3.5 GHz, which means you get maybe 3.2GHz on average from PS5

XBOX will have approx. 15-20% faster CPU

Keep in mind what HIDDEN POWER XBOX holds if they decide to unlock boost, then you would see up to a total of 40% faster GFX and 40% faster CPU than PS5

PS5 saved money on silicon and the cooling solution; sure, maybe it will be a little cheaper, but who cares about a 50 or 100 bucks difference when you're going to have that console for years to come.

This is EZZZZZ, I am going with XBOX

As for "git gud", that's what PCMR douche-bros tell people when they complain something is too difficult or otherwise ought to be tweaked to be more accessible, i.e. telling them that the fault lies not in the game but their lack of skill - it was meant as a joke based on your brash dismissal of controllers seeming to stem from rather little experience with them, and in a worst-case scenario at that.

I obviously have that too, but I work researching games, so having access to all relevant current platforms is crucial to being able to do my work properly (including understanding the ecosystems these games exist within).

I agree that real-world examples would be helpful, though a lot of the stuff they're talking about is hard to demonstrate - the 3D audio won't work as a demo since it needs the dedicated hardware and tuning to work, and the SSD... well, they showed that Spiderman demo a long time back, which I guess demonstrates it, but also feels kind of unnecessary. I doubt there'll be much perceptible difference between "instantaneous" fast travel/load times/etc on a PS5 and the couple of seconds the same would take on an XSX with half the SSD speed.

Uh, what? Zen has no relation to Jaguar beyond being an X86 CPU. As for "nothing ground-breaking in AMD's x86-64 implementation", you know AMD literally invented X86-64, right? There's nothing ground-breaking in any other implementations of it either, but it works pretty damn well. As for the hardware not being their first choice - why not? Sure, Nvidia currently has faster GPUs, but they don't make CPUs (unless you want low-power, low-performance ARM), and making a console with two different hardware vendors would be momentously more complicated at today's development complexity levels in terms of fine-tuning the OS, firmware, APIs and ultimately software.

As for being excited, the exciting thing here is the baseline spec for multi-platform games (which includes >99% of AAA games) moving from 8 dog-slow single-thread ~2GHz CPU cores to 8 fast dual-threaded CPU cores at 170-180% frequency, with GPU performance jumping 2-5x (depending on whether you measure from base consoles or Pro/X) allowing for massively improved visual fidelity for the next decade of game development. Adding RT and other modern, advanced rendering techniques will also allow for radically more advanced games in the coming years. You're of course right that games will make these platforms, but I don't see that as an issue - current consoles are already struggling heavily with current titles (try playing Jedi: Fallen Order on a base PS4 or XB1 - it's terrible), so giving developers more to play with will inevitably result in great-looking and -feeling titles. There's a lot that can be done with these consoles that can't possibly be done with current ones, so the future of cross-platform game development is suddenly looking dramatically better than it has done for the past year or more.

I just need to use analog because that's the way it is? Well, as a matter of fact I can choose what I want and will stay with a PC for FPS games. I didn't bash anyone; I said I prefer KBM instead of a controller because aiming with one is horrible for me, and you can't compare analog to KBM because accuracy and response are way better on KBM. Now you talk about preference, but when I mentioned KBM for me you somehow got offended?

I didn't know what "git gud" means or who is using it and why; thanks for the clarification. Anyway, it is not fair to call somebody a douche-bro when that person is not agreeing with you.

It's not about difficulty but a matter of preference. If you find PS analog controller better for aiming then fine. I don't. Mouse and keyboard please since for me it's way more accurate.

In fact, CS:GO afair wasn't a big hit on PS3 and wasn't ported to PS4. Yet, it remains one of the most popular games on PCs.